The tone of compliance conversations around artificial intelligence has shifted this year. Even a year ago, many organizations still spoke about AI governance as if it were a planning exercise: write a policy, assemble a committee, run a few internal reviews, and keep one eye on Brussels, Washington, or whichever market seemed most likely to move first. That mood has changed. In 2026, the issue is no longer whether AI governance matters. The issue now is whether an organization has a serious operating layer capable of handling it at scale.

The pressure is coming from several directions at once. In Europe, the regulatory calendar is now close enough that legal teams, product leaders, and risk officers can no longer treat the EU AI Act as distant architecture[3]. Key obligations under the EU AI Act are moving from legal interpretation into operational preparation, especially as organizations get closer to the implementation stages that affect transparency, documentation, and high-risk system governance. Across markets more broadly, the bigger problem is fragmentation. Rules are not developing along a single line. They are multiplying across sectors, jurisdictions, procurement frameworks, supervisory expectations, and contract chains. That fragmentation has made the old compliance model feel too static for the systems it is supposed to govern.

What breaks first under that kind of pressure is usually not intent. Most enterprises already know how to write principles. What they struggle to do is prove, continuously and under scrutiny, that an AI system remains within acceptable boundaries after deployment, after retraining, after vendor changes, after model substitution, after a business unit modifies a workflow, or after a new disclosure rule becomes enforceable in a market they cannot afford to leave. The difficulty is not philosophical. It is operational.

That is why 2026 feels like an important dividing line for regulatory technology. RegTech is no longer being discussed only in the familiar financial-crime or KYC context. It is increasingly being positioned as a practical operational layer for AI governance[4]: the software and system infrastructure that helps translate legal obligations, internal controls, documentation requirements, monitoring expectations, and evidence capture into something repeatable. In practice, it comes down to the difference between having a policy about responsible AI and having a technical environment that can show who approved what, which model was in production, what data it used, how risk was classified, what changed, what was logged, and whether the system still complies after conditions shift.

The numbers behind that shift are hard to ignore. Grand View Research estimates the global RegTech market at $24.34 billion in 2025 and $29.27 billion in 2026, with a projected rise to $112.10 billion by 2033 at a 21.1% compound annual growth rate. Gartner, looking more specifically at AI governance platforms, expects spending in that segment to reach $492 million in 2026 and to surpass $1 billion by 2030. Those are not small movements at the edges of enterprise software. They are signs of a category becoming structural[1][2].
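As a quick arithmetic check, the growth rate implied by the two quoted market figures can be recomputed directly. The sketch below assumes the compound annual growth rate is measured from the 2026 estimate to the 2033 projection (seven years); that base year is my assumption, not something the cited report is confirmed to state.

```python
# Sanity check of the growth rate implied by the market estimates quoted above.
start_value = 29.27   # USD billions, 2026 estimate
end_value = 112.10    # USD billions, 2033 projection
years = 2033 - 2026   # assumption: CAGR is measured from the 2026 base year

# CAGR = (end / start) ** (1 / years) - 1
implied_cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied CAGR: {implied_cagr:.1%}")
```

Under that assumption the implied rate comes out at roughly 21%, which is consistent with the quoted figure.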

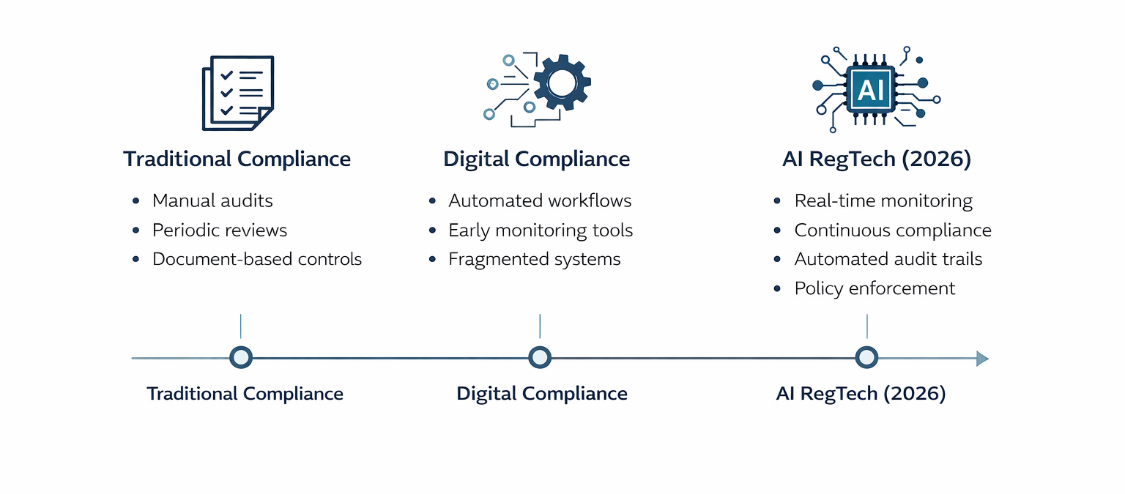

The reason traditional governance, risk, and compliance tooling has started to look inadequate in this environment is fairly straightforward. Conventional GRC systems were built for controlled review cycles, document collection, attestations, and periodic assurance processes. AI systems do not behave like a quarterly control checklist. They are dynamic, dependent on data flows, often reliant on third-party models or embedded services, and increasingly capable of generating outputs that create regulatory exposure in real time. A point-in-time review can still matter, but it does not carry the same weight when the system itself keeps moving.

That gap has created room for a more specialized class of compliance technology. Some of it sits inside established risk platforms. Some of it comes from AI governance specialists. Some of it is appearing through cloud alliances, monitoring layers, model inventory systems, policy engines, workflow orchestration tools, and automated evidence pipelines. These systems are not built for elegance. They are built to hold up under real operational pressure. They exist to help organizations move from reactive compliance, which usually arrives late and costs more, toward continuous compliance, where the burden of proof is built into the system rather than reconstructed after the fact.

There is also a quieter reason this shift matters. RegTech changes the economics of AI governance. Once compliance work becomes partly machine-readable and partly automatable, the discussion inside an organization changes. The burden stops looking like an endless cost center and starts looking more like a design problem with efficiency upside. Conformity assessments can be structured earlier. Risk classification can be standardized. Audit trails can be captured without weeks of manual reconstruction. Policy changes can be mapped across internal controls faster than a human-only team could reasonably manage. In a regulatory climate where speed and trust now travel together, that matters.

I do not think the market has fully settled on the final shape of this layer yet. Some vendors are clearly ahead in specific functions, but 2026 still feels like a formative year rather than a finished one. The terminology is still uneven. Some firms call the category AI governance. Others frame it as compliance orchestration, responsible AI operations, model risk infrastructure, or next-generation GRC. Beneath those labels, though, the same pattern keeps appearing: regulatory complexity is forcing compliance technology closer to the core of AI deployment.

That is the landscape worth examining carefully. The most important story in AI governance this year is not simply that regulation is expanding. It is that a new generation of regulatory technology is evolving in response, trying to make AI compliance less manual, less episodic, and less fragile. The organizations paying attention are not doing so because RegTech sounds fashionable. They are doing so because they can already see what happens when governance remains mostly theoretical while the underlying systems keep operating at production speed.

Note: all market sizes and spending figures cited in this article reflect reports and public releases available as of March 2026. Because this is an active market, organizations should cross-check later quarterly updates before making budget or procurement decisions.

Why 2026 Feels Different

There is a practical reason the atmosphere changed so sharply. The AI governance debate has moved out of the abstract. Boards are asking harder questions. Legal teams want evidence, not slogans. Procurement teams increasingly want to know whether a vendor can document model lineage, logging, human oversight pathways, and regulatory responsibilities before contracts are signed. Large customers, especially in regulated sectors, have become less patient with broad statements about “responsible AI” that cannot be translated into artifacts, controls, and monitoring records.

The EU has played an important role in accelerating that reality, not only because of the size of its market but because the AI Act has given organizations something concrete to organize around. Even where firms disagree on implementation timelines, standards readiness, or supervisory interpretation, the broader effect is unmistakable: AI governance is no longer a soft internal discipline. It is becoming a formal operational function with real documentation, real technical consequences, and real cost exposure for organizations that delay too long.

The more interesting development, however, is that this pressure is not staying inside legal departments. It is forcing closer contact between compliance, engineering, security, data governance, procurement, and product operations. That is exactly where RegTech begins to matter. Once an organization reaches the point where legal obligations must be translated into inventories, workflow triggers, model controls, escalation routes, logs, dashboards, attestations, and defensible evidence packs, software stops being optional. Manual coordination can carry a company only so far before the process becomes too slow, too expensive, or too brittle to survive regulatory scrutiny.

That is where the real discussion starts: not with generic claims about trustworthy AI, but with the technologies now being built and bought to make AI governance operational under real-world pressure.

From Compliance Function to System Architecture: Where RegTech Actually Lives

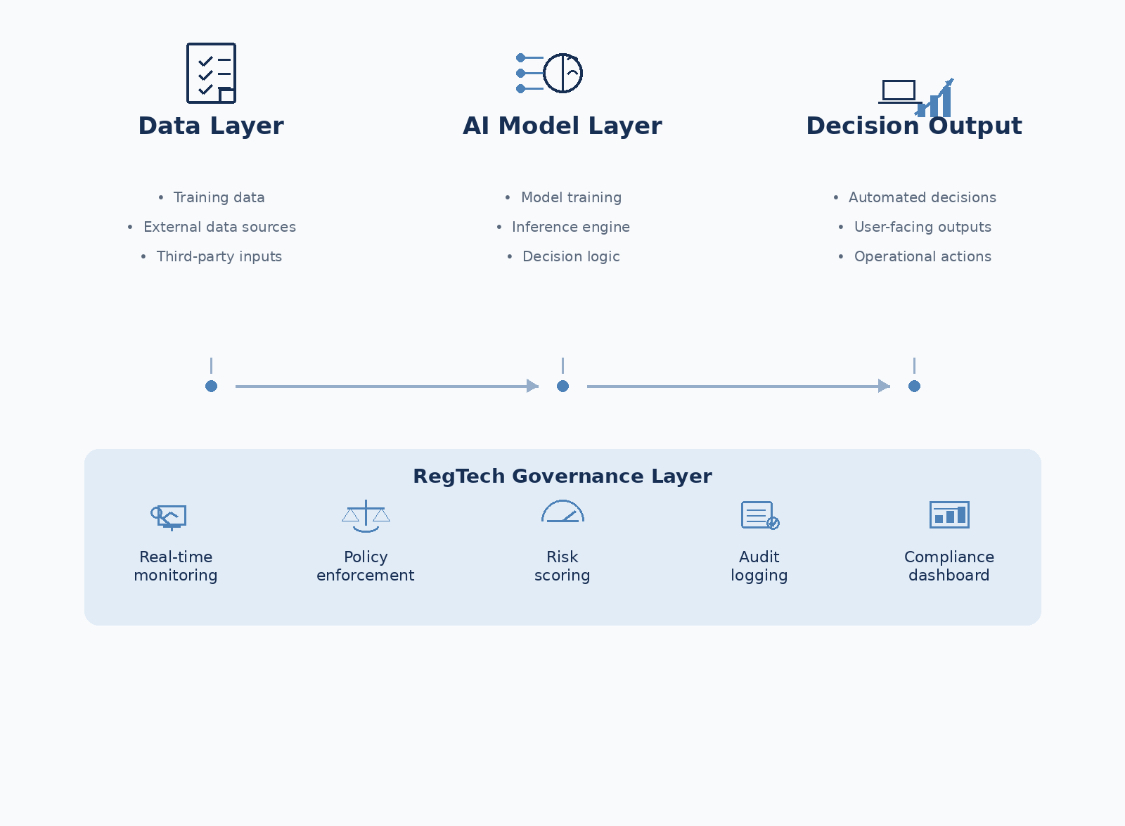

One of the more revealing shifts in how organizations are approaching AI governance in 2026 is where compliance actually sits. In earlier models, governance was treated as a function. It lived inside legal, risk, or audit teams, and its influence was often exercised through documentation, reviews, and approval gates. That structure made sense when systems changed slowly and when regulatory exposure could be assessed at defined intervals. It becomes less effective when the system itself is continuously producing decisions.

RegTech changes that placement. It does not remove the role of legal or risk teams, but it redistributes where control is exercised. Instead of existing primarily as a layer of oversight, governance begins to appear inside the system architecture itself. Controls are no longer only written down; they are encoded. Monitoring is not something that happens after deployment; it is embedded in the runtime environment. Evidence is not assembled at the end of a cycle; it is generated as a byproduct of normal operation.
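To make the idea of evidence as a byproduct of normal operation more concrete, here is a minimal sketch in Python. It assumes a decision function wrapped by a decorator that appends an audit event on every call. The names `audited`, `AUDIT_LOG`, and `screen_application` are hypothetical, and the in-memory list stands in for whatever append-only evidence store an organization actually uses.

```python
import functools
import json
from datetime import datetime, timezone

AUDIT_LOG = []  # hypothetical stand-in for an append-only evidence store


def audited(model_name: str, model_version: str):
    """Decorator that records every call to a decision function as an audit event."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            result = func(*args, **kwargs)
            AUDIT_LOG.append({
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "model": model_name,
                "version": model_version,
                # Inputs are serialized so the event can be stored and replayed later.
                "inputs": json.dumps({"args": args, "kwargs": kwargs}, default=str),
                "output": result,
            })
            return result
        return wrapper
    return decorator


@audited(model_name="loan-screening", model_version="1.4.2")
def screen_application(income: float, requested: float) -> str:
    # Placeholder rule standing in for a real model call.
    return "approve" if requested <= income * 0.4 else "refer"


screen_application(50_000, 15_000)
screen_application(50_000, 30_000)
print(len(AUDIT_LOG), [event["output"] for event in AUDIT_LOG])
```

The point of the design is that the business code never calls a logging routine explicitly. The record exists because the decision ran, not because someone remembered to write it down.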

This shift is subtle but important. When governance becomes part of system design, the conversation inside organizations changes. Engineering teams are no longer building first and handing systems over for review. They are building within constraints that are already defined. Product teams are not only thinking about functionality; they are considering how decisions will be logged, how risk classifications will be maintained, and how regulatory expectations will be met across different jurisdictions. Compliance becomes something that shapes design choices rather than something that reacts to them.

It also explains why traditional GRC tooling has struggled to keep up. Those systems were not designed to operate at the same level as the systems they are meant to govern. They collect information about processes, but they are not always connected to the processes themselves. RegTech, by contrast, is increasingly built to sit closer to data pipelines, model operations, and decision flows. It is less about documenting what should happen and more about ensuring that what is happening can be observed, controlled, and explained.

That distinction helps clarify why RegTech is expanding so quickly into AI governance. The problem it solves is not only regulatory complexity. It is architectural misalignment. As long as governance remains outside the system, it will struggle to keep pace with systems that operate continuously. Bringing it inside does not remove risk, but it changes how risk is managed. It becomes part of the system’s behavior rather than something applied from the outside.

Key Emerging RegTech Trends in 2026

The shape of RegTech in 2026 is not defined by a single breakthrough. It is defined by a convergence of capabilities that are beginning to look less like optional enhancements and more like required infrastructure. When I look across deployments, vendor strategies, and internal enterprise builds, the same pattern appears repeatedly: compliance is being pushed closer to runtime, closer to data flows, and closer to the systems that actually produce risk rather than the documents that describe it.

That shift has produced a set of trends that are not isolated from one another. They overlap, reinforce each other, and in some cases depend on one another to function properly. It is difficult, for example, to talk about continuous compliance without also talking about automated monitoring, or to discuss policy mapping without acknowledging the role of generative systems in interpreting regulatory change at scale. This is not a checklist. It is a closer look at how these capabilities are evolving in practice.

1. AI-Driven Real-Time Monitoring and Anomaly Detection

One of the clearest pressure points in AI governance right now is the inability of traditional compliance systems to observe behavior as it happens. Most legacy approaches rely on sampling, periodic reviews, or post-event investigations. That model was already strained in financial compliance. It becomes far more fragile when applied to AI systems that can generate thousands or millions of decisions in a short time window.

RegTech platforms in 2026 are responding by embedding monitoring layers directly into operational workflows. These systems do not wait for quarterly reviews. They watch model outputs, data inputs, and decision pathways in real time, looking for deviations that may signal regulatory exposure, model drift, misuse, or unexpected behavior under changing conditions.
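A simplified illustration of what such a monitoring layer does is a rolling baseline check on a stream of model scores. The sketch below is not how any particular vendor implements monitoring. It assumes a plain z-score test against recent history, and the class name, window size, and threshold are all illustrative choices.

```python
from collections import deque
from statistics import mean, stdev


class RollingMonitor:
    """Flags values that deviate sharply from a rolling baseline."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_history: int = 10):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to recent history."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            baseline = mean(self.history)
            spread = stdev(self.history)
            if spread > 0 and abs(value - baseline) / spread > self.threshold:
                is_anomaly = True
        # Anomalous values are kept out of the baseline so they do not mask later ones.
        if not is_anomaly:
            self.history.append(value)
        return is_anomaly


monitor = RollingMonitor(window=20, threshold=3.0, min_history=5)
scores = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.51, 0.95, 0.50]
alerts = [i for i, score in enumerate(scores) if monitor.observe(score)]
print(alerts)  # indices of observations flagged for review
```

In this toy stream only the jump to 0.95 is flagged. A production system would look at far richer signals, but the posture is the same: every observation is checked as it arrives rather than sampled later.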

A useful example is the expansion of network-based intelligence platforms such as NICE Actimize’s Insights Network, introduced in early 2026. The system aggregates signals across institutions to detect counterparty risk patterns that would be difficult to identify in isolation. While the original framing sits within financial crime, the underlying architecture is increasingly relevant to AI governance: shared intelligence, cross-system monitoring, and near real-time anomaly detection.

What matters here is not just speed. It is the shift in posture. Instead of reconstructing what happened after a system produces a problematic outcome, organizations are starting to detect early signals that something is moving outside expected boundaries. In practice, this can reduce investigation workloads and improve response efficiency. Industry analyses suggest that automated monitoring layers can significantly reduce manual compliance investigation effort[5], particularly when monitoring, alerting, and evidence capture are integrated into operational workflows, although the exact impact depends on implementation maturity and sector context.

The more subtle benefit is consistency. Real-time monitoring enforces the same level of scrutiny across all transactions or outputs, rather than relying on selective review. In regulatory environments where selective visibility can become a liability, that consistency becomes part of the control framework itself.

| Capability | Traditional Approach | 2026 RegTech Approach |
|---|---|---|
| Monitoring | Periodic sampling | Continuous, real-time observation |
| Anomaly Detection | Post-event analysis | Live signal detection across workflows |
| Investigation Effort | Manual reconstruction | Automated triage and alert prioritization |

2. Generative and Agentic AI for Regulatory Change Mapping

Another issue that has become hard to ignore this year is the volume of regulatory change itself. Organizations are not dealing with a single rulebook. They are dealing with overlapping obligations across jurisdictions, sector regulators, procurement requirements, and evolving interpretations of existing laws. Keeping track of those changes manually has always been difficult. Doing so at the pace required in 2026 is becoming unrealistic.

This is where generative and increasingly agentic AI systems are being deployed inside RegTech environments. Their role is not to replace legal interpretation but to accelerate the mapping process between external regulatory text and internal control structures. These systems can ingest large volumes of regulatory updates, identify relevant clauses, map them to existing policies or controls, and highlight gaps that require attention.

In practice, that changes how organizations respond to regulatory movement. Instead of waiting for periodic reviews or external audits to reveal misalignment, compliance teams can see where obligations are not yet covered, or where existing controls no longer match updated requirements. The phrase that appears frequently in vendor positioning and analyst discussions is “test once, comply many.” The idea is that once a control framework is structured in a machine-readable way, it can be reused across multiple regulatory contexts with less duplication of effort.
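The "test once, comply many" idea is easier to see with a small example. The sketch below assumes a control library in which each control is tagged with the requirement themes it satisfies, and a set of regimes that each demand some of those themes. The control IDs, theme names, and regime names are invented for illustration and do not correspond to any real regulation.

```python
# Hypothetical control library: each control is tagged with the themes it satisfies.
CONTROLS = {
    "CTRL-01": {"logging", "record_keeping"},
    "CTRL-02": {"human_oversight"},
    "CTRL-03": {"risk_classification"},
}

# Hypothetical obligations, keyed by an illustrative regime name.
OBLIGATIONS = {
    "regime_a": {"logging", "human_oversight", "transparency_notice"},
    "regime_b": {"logging", "risk_classification"},
}


def coverage_report(controls, obligations):
    """For each regime, list which themes are covered by which controls and which are gaps."""
    report = {}
    for regime, themes in obligations.items():
        covered = {
            theme: sorted(cid for cid, tags in controls.items() if theme in tags)
            for theme in themes
        }
        report[regime] = {
            "covered": {t: c for t, c in covered.items() if c},
            "gaps": sorted(t for t, c in covered.items() if not c),
        }
    return report


report = coverage_report(CONTROLS, OBLIGATIONS)
for regime, result in report.items():
    print(regime, "gaps:", result["gaps"])
```

Here the same logging control counts toward both regimes, while the missing transparency notice surfaces as a gap in only one of them. The hard part in practice is the tagging itself, which is where the generative mapping tools described above come in and where legal review remains necessary.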

Industry practitioners have started to describe 2026 as the point where these capabilities are moving beyond pilot deployments. Supradeep Appikonda of 4CRisk.ai, for example, has pointed out that organizations are beginning to measure success not by whether AI is used in compliance, but by whether it improves response time to regulatory change and increases confidence in audit readiness. That is a different metric from experimentation. It is closer to operational dependency.

There is also a structural effect here. Once regulatory interpretation becomes partially automated, the bottleneck shifts. The challenge is no longer just understanding what the regulation says. It becomes ensuring that internal systems, workflows, and controls can adapt quickly enough to reflect that understanding. RegTech platforms that integrate policy mapping with workflow execution are starting to address that gap.

3. Specialized AI Governance Platforms and Continuous Compliance

One of the more important distinctions emerging in 2026 is the difference between general-purpose compliance systems and platforms designed specifically for AI governance. The latter are being built around the assumption that compliance cannot be assessed at a single point in time. It has to be maintained continuously as systems evolve.

These platforms typically include several core components: centralized inventories of AI systems, classification frameworks that align models with regulatory risk categories, policy engines that define acceptable use conditions, monitoring layers that observe system behavior, and evidence pipelines that capture logs, decisions, and changes in a way that can be audited later.

The market signal behind this category is becoming clearer. Gartner has highlighted that growing regulatory pressure is accelerating the adoption of dedicated AI governance platforms[6], with organizations increasingly treating them as a core capability rather than a supporting tool. The same research suggests that well-implemented platforms can reduce regulatory costs by around 20%, largely through automation and reduced duplication of effort.

The reasoning is straightforward when you look at how these systems operate. Instead of treating compliance as an external overlay, they integrate governance into the lifecycle of AI systems themselves. A model cannot move into production without classification. Changes trigger updates in documentation. Monitoring data feeds directly into audit records. In some cases, policy violations can trigger automated responses or escalation workflows without waiting for manual intervention.
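A lifecycle gate of this kind can be sketched in a few lines. The example below assumes a simple inventory record and three illustrative rules: a model needs a risk classification, current documentation, and, if classified as high risk, a named approval. The field names, the `"high"` label, and the `risk_officer` role are hypothetical, not taken from any specific platform or legal text.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ModelRecord:
    name: str
    version: str
    risk_class: Optional[str] = None  # e.g. "minimal", "limited", "high" (illustrative labels)
    documentation_current: bool = False
    approvals: List[str] = field(default_factory=list)


def promotion_blockers(record: ModelRecord) -> List[str]:
    """Return the reasons a model may not be promoted; an empty list means the gate passes."""
    blockers = []
    if record.risk_class is None:
        blockers.append("missing risk classification")
    if not record.documentation_current:
        blockers.append("documentation out of date")
    if record.risk_class == "high" and "risk_officer" not in record.approvals:
        blockers.append("high-risk model lacks risk officer approval")
    return blockers


candidate = ModelRecord(name="credit-scoring", version="2.3.0")
print(promotion_blockers(candidate))  # unclassified and undocumented, so blocked

candidate.risk_class = "high"
candidate.documentation_current = True
candidate.approvals.append("risk_officer")
print(promotion_blockers(candidate))  # all conditions met, so no blockers
```

Returning the list of reasons rather than a bare pass or fail is a deliberate choice. The blockers themselves become part of the evidence trail and tell the owning team exactly what is missing.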

Lauren Kornutick of Gartner has framed the underlying issue clearly: traditional GRC tools were not designed to handle the dynamic and probabilistic nature of AI systems. That mismatch is now driving demand for platforms that can operate at the same speed and complexity as the systems they are meant to govern.

The practical implication is that compliance is becoming part of system design rather than an afterthought. Organizations that adopt these platforms early tend to discover that governance processes become more predictable, not because the regulatory environment is simpler, but because the internal response to that environment is more structured.

4. Cloud-Native Architecture and Third-Party Risk Management

One of the quieter but decisive shifts in RegTech has been architectural. A growing proportion of deployments are no longer built as isolated, on-premise compliance systems. They are designed as cloud-native environments that can scale across business units, integrate with distributed data sources, and extend governance controls to third-party services that are increasingly part of modern AI systems.

This matters because very few organizations now operate AI systems in isolation. Models are sourced from vendors, fine-tuned using external datasets, embedded within SaaS platforms, or connected to APIs that introduce additional layers of dependency. Each of those connections carries regulatory implications, especially when responsibilities for data handling, model behavior, and decision outcomes are not fully centralized.

RegTech platforms are beginning to address this by extending visibility beyond internal systems. Cloud-native deployment allows governance layers to monitor interactions across environments, track where models are being used, and enforce policy conditions even when parts of the system sit outside direct organizational control. The result is not perfect visibility, but it is a meaningful improvement over fragmented oversight.

The strategic importance of this approach is reflected in a growing number of enterprise partnerships and cloud-based initiatives focused on integrating AI deployment with governance, risk, and compliance capabilities. While alliances like these are partly commercial, they also reflect a broader recognition that compliance cannot be bolted onto distributed systems after deployment. It has to be embedded into the infrastructure where those systems operate.

There is also a jurisdictional dimension to consider. As data sovereignty requirements become more prominent, cloud-native RegTech systems are being designed to respect regional constraints while maintaining a unified governance layer. That balancing act is not trivial. It requires systems that can apply different rules in different contexts without losing coherence at the organizational level.
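One common way to express "different rules in different contexts" without fragmenting governance is a single baseline policy with regional overrides layered on top. The sketch below assumes that pattern. The policy keys, region codes, and values are purely illustrative and are not drawn from any actual legal requirement.

```python
# Illustrative organization-wide baseline; keys and values are hypothetical.
BASE_POLICY = {
    "log_retention_days": 180,
    "human_review_required": False,
    "data_residency": None,
}

# Regional overrides layered on top of the baseline.
REGIONAL_OVERRIDES = {
    "eu": {"human_review_required": True, "data_residency": "eu"},
    "us": {"log_retention_days": 365},
}


def effective_policy(region: str) -> dict:
    """Merge the baseline with any overrides for `region`; unknown regions get the baseline."""
    return {**BASE_POLICY, **REGIONAL_OVERRIDES.get(region, {})}


for region in ("eu", "us", "apac"):
    print(region, effective_policy(region))
```

Because every region resolves against the same baseline, a change to the organizational default propagates everywhere it has not been explicitly overridden, which is what keeps the governance layer coherent.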

The challenge, of course, is that extending governance into cloud and third-party environments increases complexity. It introduces questions about responsibility boundaries, contractual obligations, and technical integration. RegTech does not remove those questions, but it provides a framework for managing them more systematically than manual coordination would allow.

5. Model Governance, Explainability, and Auditability Layers

If real-time monitoring addresses visibility and cloud architecture addresses scale, auditability addresses accountability. Regulators are not only interested in whether an AI system behaves correctly. They want to know whether an organization can explain how that behavior was produced, how it was evaluated, and what controls were in place when decisions were made.

That requirement has pushed RegTech platforms toward more sophisticated model governance capabilities. These include tracking model lineage, documenting training data sources, recording configuration changes, capturing decision logs, and linking outputs back to specific system states at a given point in time. The goal is not perfect explainability in a theoretical sense. It is traceability that can withstand external scrutiny.

In practical terms, this often takes the form of layered audit trails. A decision made by an AI system can be tied to a model version, which is tied to a dataset, which is tied to a set of preprocessing steps, which is tied to a governance decision that allowed that configuration to be deployed. When regulators or auditors ask questions, the organization does not need to reconstruct that chain manually. It already exists as part of the system.
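The chain described above can be modeled as linked records, each carrying the identifier of its parent. The sketch below is one possible shape, assuming each entry's ID is a SHA-256 digest of its own content so that later tampering is detectable. The record kinds, payload fields, and helper names are hypothetical.

```python
import hashlib
import json
from typing import Optional


def fingerprint(record: dict) -> str:
    """Stable SHA-256 digest of a record's canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def link(kind: str, payload: dict, parent: Optional[dict] = None) -> dict:
    """Create a trail entry that references its parent entry by ID."""
    entry = {"kind": kind, "payload": payload, "parent_id": parent["id"] if parent else None}
    entry["id"] = fingerprint(entry)  # digest is computed before the ID field is added
    return entry


def is_intact(entry: dict) -> bool:
    """Check that an entry's content still matches the digest stored as its ID."""
    body = {key: value for key, value in entry.items() if key != "id"}
    return fingerprint(body) == entry["id"]


def trace(entry: dict, index: dict) -> list:
    """Walk from a decision back to the approval that permitted it."""
    chain = [entry["kind"]]
    while entry["parent_id"] is not None:
        entry = index[entry["parent_id"]]
        chain.append(entry["kind"])
    return chain


approval = link("governance_approval", {"approved_by": "model-risk-committee"})
preprocessing = link("preprocessing", {"steps": ["dedupe", "normalize"]}, approval)
dataset = link("dataset", {"name": "loans-2025q4"}, preprocessing)
model = link("model_version", {"name": "credit-scoring", "version": "2.3.0"}, dataset)
decision = link("decision", {"request_id": "req-001", "outcome": "refer"}, model)

index = {e["id"]: e for e in (approval, preprocessing, dataset, model, decision)}
print(trace(decision, index))
```

Walking the chain from the decision returns the model version, dataset, preprocessing steps, and governance approval in order, which is the reconstruction an auditor would otherwise have to request by hand.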

This is where RegTech intersects directly with technical requirements emerging from frameworks such as the EU AI Act. While the legal text defines obligations around documentation, risk management, and transparency, it does not prescribe exactly how those obligations should be implemented at a systems level. RegTech platforms are filling that gap by providing the mechanisms through which documentation and traceability can be generated automatically rather than assembled retroactively.

There is an important nuance here. Explainability is often discussed in abstract terms, especially in academic or ethical debates. In operational contexts, the focus is narrower. Organizations are less concerned with providing a perfect human-readable explanation for every model output and more concerned with demonstrating that the system operates within defined parameters, that those parameters are documented, and that deviations can be detected and investigated.

That distinction matters because it shapes how tools are built. Instead of pursuing full interpretability for all models, many RegTech systems prioritize structured logging, reproducibility, and evidence capture. These capabilities may not satisfy every philosophical question about AI, but they address the questions regulators are most likely to ask during enforcement.

| Governance Layer | Purpose | Operational Outcome |
|---|---|---|
| Model Lineage Tracking | Trace model origin and evolution | Clear accountability for system behavior |
| Decision Logging | Capture outputs and conditions | Reconstructable audit trails |
| Data Mapping | Link datasets to model training | Evidence of compliant data usage |

6. Integration with Broader GRC Systems and Predictive Risk Modeling

Another trend that becomes visible when looking across large organizations is the gradual reintegration of specialized AI governance tools back into broader GRC ecosystems. This is not a reversal of specialization. It is an attempt to avoid fragmentation as the number of compliance tools continues to grow.

Estimates suggest that enterprises could be operating an average of ten separate GRC-related solutions by 2028, up from around eight in 2025. Without some level of integration, that expansion risks creating new silos rather than solving existing ones. RegTech vendors are responding by building connectors, shared data layers, and orchestration capabilities that allow AI governance functions to interact with existing risk, compliance, and security systems.

The integration is not purely technical. It also changes how risk is modeled. Instead of treating compliance as a static requirement, organizations are beginning to use predictive analytics to anticipate where breaches or failures might occur. This involves analyzing patterns in system behavior, identifying areas of elevated risk, and adjusting controls before issues materialize.

In AI contexts, predictive risk modeling can include monitoring for model drift, identifying scenarios where training data may no longer reflect current conditions, or detecting usage patterns that fall outside expected norms. When integrated with governance platforms, these signals can trigger adjustments in controls, additional reviews, or temporary restrictions on system behavior.
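Drift monitoring is one of the more mechanical pieces of this picture, so it lends itself to a short example. The sketch below uses the population stability index (PSI), a widely used measure of how far a live distribution has moved from a reference one. The choice of PSI, the bin edges, and the sample scores are my own illustrative assumptions rather than anything prescribed by a regulator or vendor.

```python
import math


def population_stability_index(expected, actual, bin_edges):
    """PSI between two samples over shared bins; larger values mean a bigger shift.

    Values that fall outside `bin_edges` are ignored in this simplified version.
    """

    def proportions(values):
        counts = [0] * (len(bin_edges) - 1)
        for v in values:
            for i in range(len(counts)):
                last = i == len(counts) - 1
                # Bins are half-open, except the last one, which includes its upper edge.
                if bin_edges[i] <= v < bin_edges[i + 1] or (last and v == bin_edges[-1]):
                    counts[i] += 1
                    break
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p_expected = proportions(expected)
    p_actual = proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_expected, p_actual))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live_scores = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95]

psi = population_stability_index(training_scores, live_scores, edges)
print(f"PSI = {psi:.2f}")
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, though the right threshold depends on the model and sector. In a governance platform, crossing that threshold would be the kind of signal that triggers an additional review or a temporary restriction.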

The benefit is not just reduced risk exposure. It is a shift toward proactive governance. Instead of reacting to incidents, organizations can intervene earlier in the lifecycle, often before a regulatory issue becomes visible externally. That shift is subtle but important. It changes compliance from a reactive discipline into something closer to operational risk management.

7. Expansion into SMEs and Cost-Efficient Automation

RegTech has historically been associated with large financial institutions and heavily regulated industries. That is beginning to change. As AI adoption spreads across sectors, smaller organizations are facing similar regulatory expectations, even if the scale of their operations is different.

Cloud-based deployment models and AI-driven automation are lowering the barriers to entry. Instead of requiring extensive in-house infrastructure or large compliance teams, smaller firms can access governance capabilities through managed platforms, subscription services, or integrated tools within existing software environments. This does not eliminate the need for expertise, but it reduces the cost and complexity of implementing basic compliance structures.

The sectors where this shift is most visible include healthcare, insurance, and energy, where regulatory oversight intersects with increasing use of AI-driven decision systems. In these environments, the ability to demonstrate compliance is becoming a prerequisite for participation rather than a competitive differentiator.

There is also a broader economic implication. As RegTech becomes more accessible, it reduces the gap between organizations that can afford to build sophisticated compliance infrastructure and those that cannot. That does not level the playing field entirely, but it does make it harder for regulatory compliance to be used as a barrier to entry.

At the same time, accessibility introduces new risks. Smaller organizations may rely heavily on vendor-provided solutions without fully understanding their limitations. This creates a different kind of dependency, where compliance is outsourced to technology that may not be fully transparent. RegTech expands capability, but it also requires careful selection and ongoing evaluation.

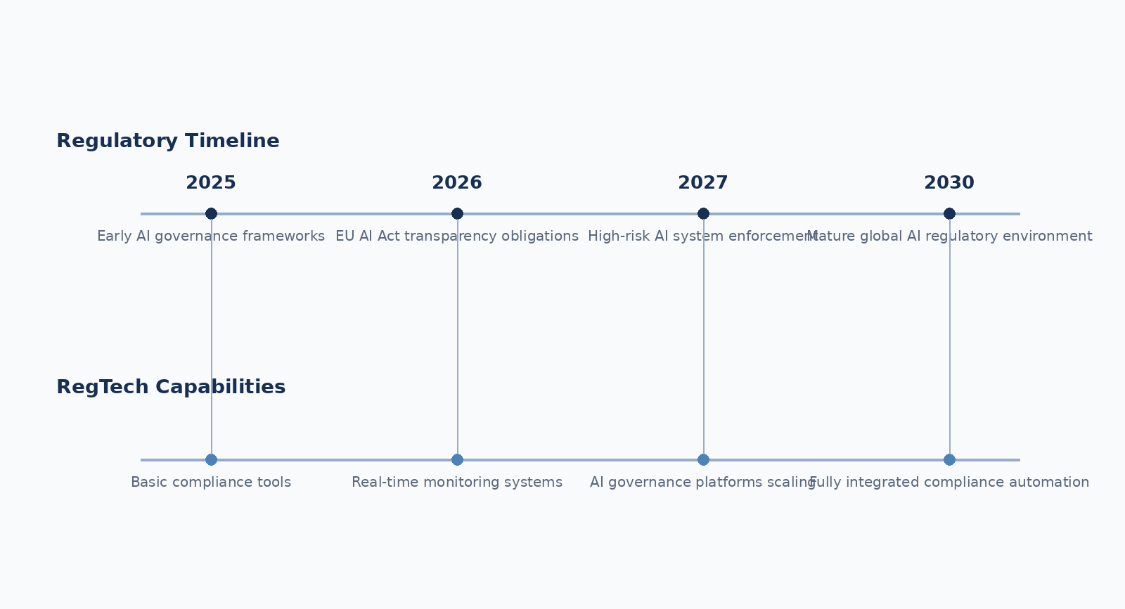

RegTech and the AI Regulatory Timeline in 2026

To understand why these trends are converging now, it helps to place them against the regulatory timeline. The EU AI Act, with its phased implementation, has created a sequence of deadlines that organizations cannot ignore. Some provisions already apply: the prohibitions on certain AI practices took effect in February 2025, and obligations for general-purpose AI models followed in August 2025. Obligations for high-risk systems are moving into sharper operational focus through 2026 as the broader rollout continues toward full implementation.

RegTech capabilities align closely with these milestones. Real-time monitoring supports ongoing compliance once systems are deployed. Policy mapping tools help organizations interpret new obligations as they emerge. Governance platforms provide the structure needed to document and demonstrate compliance. Cloud-native systems extend that structure across distributed environments.

The alignment is not accidental. Vendors and internal development teams are building toward these regulatory inflection points, knowing that demand for operational compliance tools will increase as enforcement becomes more concrete. The result is a feedback loop: regulation drives demand for RegTech, and RegTech, in turn, shapes how organizations implement regulatory requirements in practice.

Real-World Impact and Measurable Returns in 2026

The conversation around RegTech becomes more grounded when it moves away from capability and into measurable outcomes. For all the discussion about architecture, monitoring, and governance layers, the question that tends to matter most inside organizations is simpler: does any of this actually reduce risk, cost, or operational friction in a way that justifies the investment?

Across sectors, the answer in 2026 is increasingly yes, although the results vary depending on how deeply these systems are integrated. Early adopters that have moved beyond pilot implementations are beginning to report changes that are difficult to ignore. In fintech environments, for example, industry analyses carried forward from 2025 into 2026 suggest that organizations using advanced RegTech solutions have seen regulatory fines decrease by roughly 35%. That figure should not be treated as universal, but it reflects a broader pattern: when compliance processes become more structured and automated, the likelihood of avoidable violations tends to fall.

The same pattern appears in fraud prevention and transaction monitoring. Automated detection systems, particularly those enhanced by machine learning, have been associated with reductions in fraud-related losses of up to 40% in certain contexts. The mechanism is straightforward. Earlier detection leads to earlier intervention, which reduces the scale of exposure. What is less obvious, but equally important, is the reduction in false positives when monitoring systems become more precise. This allows compliance teams to focus on higher-risk cases rather than spending time filtering noise.

Operational efficiency is another area where the impact becomes visible. In digital lending and similar decision-heavy environments, some organizations report processing times improving by as much as 60% when compliance checks are integrated directly into system workflows rather than handled as separate review stages. That improvement is not only about speed. It also reduces friction between compliance and business teams, which is often one of the hidden costs of regulatory oversight.
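
The idea of running compliance checks inside the workflow, rather than as a separate review stage, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any particular platform: the check functions, the field names (`consent`, `region`), and the region allow-list are all hypothetical.

```python
from typing import Callable, Optional

# A check inspects a request and returns a failure reason, or None if it passes.
Check = Callable[[dict], Optional[str]]


def require_consent(request: dict) -> Optional[str]:
    # Hypothetical rule: a consent flag must be present and truthy.
    return None if request.get("consent") else "missing consent record"


def require_supported_region(request: dict) -> Optional[str]:
    allowed = {"region-a", "region-b"}  # hypothetical allow-list
    return None if request.get("region") in allowed else "region not approved"


def process(request: dict, checks: list[Check], decide: Callable[[dict], str]) -> dict:
    """Run every check inline; call the decision step only if all of them pass."""
    failures = [reason for check in checks if (reason := check(request)) is not None]
    if failures:
        return {"status": "blocked", "reasons": failures}
    return {"status": "decided", "outcome": decide(request)}
```

Because the checks run in the same call as the decision, a failed check blocks the request immediately and records why, instead of queuing it for a later manual review.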

There is also a less quantifiable but equally important effect on audit readiness. Organizations that have implemented structured governance platforms tend to approach audits differently. Instead of assembling documentation under pressure, they rely on systems that have already captured the necessary evidence as part of normal operations. This changes the internal experience of compliance. It becomes less about preparing for scrutiny and more about maintaining a state of readiness that can be demonstrated at any time.
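
One way to picture evidence being captured as part of normal operations is an append-only log in which each record carries the hash of the one before it, so later tampering is detectable. The sketch below is an assumption about how such a log might look, not a method described by any source cited here; the class name `EvidenceLog` and the record fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class EvidenceLog:
    """Append-only log where each record stores the hash of the previous record."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # sort_keys gives a stable serialization, so the hash is reproducible.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, details: dict) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "details": details,  # must be JSON-serializable
            "prev_hash": self.records[-1]["hash"] if self.records else None,
        }
        record = {**body, "hash": self._digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check the chain; False if anything was altered."""
        prev = None
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True
```

In this design an approval or deployment step would call `append` as it happens, and an auditor could run `verify` at any time rather than asking for documentation to be assembled after the fact.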

In practical terms, this has implications for how organizations engage with regulators. When evidence can be produced quickly and consistently, discussions tend to focus less on whether controls exist and more on how they function. That shift can influence both the tone and the outcome of regulatory interactions.

It is worth noting, however, that these outcomes are not automatic. They depend heavily on how RegTech is implemented. Organizations that treat these tools as isolated add-ons often see limited benefits. The more significant gains tend to appear when governance capabilities are integrated into core workflows, supported by clear internal ownership, and aligned with broader risk management strategies.

There is also variation across sectors. Financial institutions, which have longer histories with compliance technology, often achieve results more quickly. Other industries, particularly those earlier in their AI adoption cycle, may take longer to realize similar gains. This does not diminish the potential value. It simply reflects differences in starting point and organizational maturity.

Note: the figures cited above are drawn from industry analyses and case studies reported as of 2025–2026. Several are upper-bound or approximate values rather than averages, and actual outcomes vary based on implementation depth, sector, regulatory exposure, and organizational structure.

What RegTech Solves — and Where It Still Falls Short

It is easy to focus on the capabilities RegTech introduces and overlook the problems it is actually designed to address. At a basic level, these systems exist because traditional approaches to compliance are no longer sufficient for the scale and complexity of modern AI systems. The gaps they attempt to close are practical rather than theoretical.

One of the most persistent challenges is fragmentation. Organizations operating across multiple jurisdictions are subject to overlapping regulatory requirements that do not always align neatly. Managing those requirements manually often leads to duplication of effort, inconsistent interpretations, and gaps in coverage. RegTech platforms address this by centralizing regulatory mapping and linking obligations to internal controls in a more structured way.
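
As a rough illustration of what centralizing regulatory mapping can mean in practice, the sketch below links obligations to internal controls and then asks two questions: which obligations have no control at all, and which controls serve more than one obligation. All identifiers (`JUR-A-01`, `CTRL-LOGGING`, and so on) are invented for the example and do not correspond to real regulatory provisions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Obligation:
    """A single regulatory requirement (identifiers here are made up)."""
    obligation_id: str
    jurisdiction: str
    summary: str


@dataclass
class ControlRegistry:
    """Links obligations to the internal controls meant to satisfy them."""
    obligations: dict[str, Obligation] = field(default_factory=dict)
    # obligation_id -> set of internal control ids
    links: dict[str, set[str]] = field(default_factory=dict)

    def add_obligation(self, obligation: Obligation) -> None:
        self.obligations[obligation.obligation_id] = obligation
        self.links.setdefault(obligation.obligation_id, set())

    def link(self, obligation_id: str, control_id: str) -> None:
        if obligation_id not in self.obligations:
            raise KeyError(f"unknown obligation: {obligation_id}")
        self.links[obligation_id].add(control_id)

    def uncovered(self) -> list[str]:
        """Obligations with no control mapped to them (coverage gaps)."""
        return sorted(oid for oid, ctrls in self.links.items() if not ctrls)

    def shared_controls(self) -> dict[str, list[str]]:
        """Controls that satisfy more than one obligation (candidates for reuse)."""
        by_control: dict[str, list[str]] = {}
        for oid, ctrls in self.links.items():
            for control in ctrls:
                by_control.setdefault(control, []).append(oid)
        return {c: sorted(oids) for c, oids in by_control.items() if len(oids) > 1}
```

The `uncovered` query corresponds to the "gaps in coverage" problem, and `shared_controls` to the "duplication of effort" problem: one logging control mapped to obligations in two jurisdictions is implemented once rather than twice.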

Another issue is the sheer volume of compliance work. As AI systems become more embedded in business processes, the number of decisions, data flows, and interactions that require oversight increases dramatically. Manual review processes cannot scale to match that growth. Automation becomes less of an optimization and more of a necessity.

Third-party risk adds another layer of complexity. Many AI systems depend on external vendors, whether for models, data, or infrastructure. Each dependency introduces uncertainty around how systems are built, how they behave, and how responsibilities are distributed. RegTech tools provide mechanisms for tracking these relationships, enforcing contractual requirements, and monitoring external components as part of a broader governance framework.

There is also the challenge of opacity. AI systems, particularly those based on complex models, can produce outcomes that are difficult to interpret without additional tooling. While RegTech does not solve the underlying technical opacity, it provides a structure for documenting, logging, and analyzing system behavior in a way that supports accountability.

Despite these strengths, the current generation of RegTech solutions has clear limitations. One concern that appears frequently in analyst reports is the risk of market fragmentation. As more vendors enter the space, organizations may find themselves navigating a landscape of overlapping tools with varying levels of compatibility. Without careful integration, this can recreate the very silos RegTech is meant to eliminate.

Interoperability is another unresolved issue. While many platforms offer integration capabilities, there is still no universally accepted standard for how governance data should be structured or exchanged. This can make it difficult to move information between systems or to build a fully unified compliance environment.

There is also a question of dependency. As organizations rely more heavily on automated compliance systems, they become dependent on the accuracy and reliability of those systems. Errors in configuration, gaps in coverage, or limitations in vendor solutions can introduce new forms of risk. This does not negate the value of RegTech, but it does require ongoing oversight and validation.

Finally, the regulatory environment itself continues to evolve. Standards are still being clarified. Enforcement practices are still developing. RegTech platforms must adapt to these changes, which means that organizations cannot treat implementation as a one-time effort. Governance remains an ongoing process, even when supported by advanced technology.

This section reflects limitations and challenges identified in analyst research and industry discussions as of March 2026. No single solution currently addresses all aspects of AI governance perfectly.

Looking Ahead to 2030: Where RegTech Is Moving

It is still early enough in the lifecycle of AI governance that the current generation of RegTech platforms should be seen as an evolving layer rather than a finished category. That said, the direction of travel is becoming clearer. If 2026 is the year where compliance becomes operational, the next few years are likely to determine how deeply that operational layer is integrated into the way organizations design, deploy, and manage AI systems.

Market projections point toward sustained expansion rather than short-term growth. Estimates that place the RegTech market on a path toward more than $100 billion by the early 2030s are not simply reflections of vendor optimism. They mirror the increasing cost of regulatory complexity and the corresponding demand for systems that can manage that complexity at scale. Within that broader market, AI governance platforms are expected to move from a specialized subset into a standard component of enterprise architecture.

One of the more significant developments to watch is the gradual introduction of more autonomous or agentic capabilities within compliance systems themselves. Early versions of these systems are already capable of mapping regulatory changes, identifying gaps, and suggesting updates. The next stage is likely to involve more direct participation in compliance workflows, where systems not only detect issues but initiate responses, trigger control adjustments, or coordinate remediation actions across different parts of an organization.

This does not mean that compliance becomes fully automated or removed from human oversight. If anything, it introduces new questions about how much autonomy should be delegated to systems responsible for governance. What it does suggest is that the boundary between monitoring and action will become less distinct. Systems that can only observe may no longer be sufficient in environments where decisions and risks emerge continuously.

Another trend that is likely to shape the next phase is interoperability. As organizations accumulate multiple governance tools, the ability of those tools to exchange information and operate as part of a coherent system becomes more important. There are already early efforts to define common structures for governance data, but the landscape remains fragmented. Progress in this area will influence whether RegTech evolves into an integrated layer or remains a collection of partially connected solutions.

There is also a strategic dimension that is beginning to surface. Organizations are starting to recognize that effective governance can function as a competitive advantage rather than simply a compliance requirement. In markets where trust, reliability, and regulatory alignment influence purchasing decisions, the ability to demonstrate robust governance may shape how products are evaluated and adopted.

From that perspective, investment in RegTech is not only about avoiding penalties or meeting minimum standards. It is about building systems that can operate confidently in regulated environments, adapt to changing requirements, and maintain credibility with regulators, partners, and customers. That is a different framing from the one that dominated earlier discussions about compliance.

There are, of course, uncertainties. Regulatory frameworks will continue to evolve. Enforcement practices may vary across jurisdictions. Technical standards may take time to stabilize. These factors make it difficult to predict exactly how the landscape will look in 2030. What is less uncertain is the direction of pressure. It is moving toward greater scrutiny, greater complexity, and greater demand for demonstrable control.

Organizations that treat RegTech as a peripheral concern are likely to find themselves reacting to that pressure. Those that integrate it more deeply into their operational architecture may find that they are better positioned to respond, not only to regulatory demands but also to broader expectations around how AI systems should behave in practice.

Strategic Positioning in a RegTech-Driven Environment

The practical question for organizations is not whether to engage with RegTech, but how to approach it in a way that aligns with long-term operational needs. There is a temptation to focus on individual tools or vendor capabilities, but the more important consideration is how governance functions are structured across the organization.

One pattern that appears consistently among more mature implementations is the presence of a centralized inventory of AI systems. Without a clear view of what exists, where it is deployed, and how it is classified, other governance efforts tend to become fragmented. From that foundation, organizations can begin to layer policy frameworks, monitoring capabilities, and evidence collection processes in a more coordinated way.
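
A centralized inventory does not need to be elaborate to be useful. The following sketch assumes a very small record per system (an identifier, an owner, the markets where it is deployed, and an optional risk class) and shows the two queries the paragraph above implies: what is still unclassified, and what is running in a given market. The field names and the `Inventory` class are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    system_id: str
    owner: str
    deployed_in: list[str]
    risk_class: Optional[str] = None  # None means not yet classified


class Inventory:
    """Single place to answer: what exists, where is it deployed, how is it classified."""

    def __init__(self) -> None:
        self._systems: dict[str, AISystemRecord] = {}

    def register(self, record: AISystemRecord) -> None:
        if record.system_id in self._systems:
            raise ValueError(f"duplicate system id: {record.system_id}")
        self._systems[record.system_id] = record

    def unclassified(self) -> list[str]:
        return sorted(s.system_id for s in self._systems.values() if s.risk_class is None)

    def deployed_in(self, market: str) -> list[str]:
        return sorted(s.system_id for s in self._systems.values() if market in s.deployed_in)
```

Policy frameworks, monitoring, and evidence collection can then be keyed to `system_id`, which is what keeps the later layers from fragmenting.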

Another consideration is the relationship between governance and system design. When compliance is introduced late in the development process, it often leads to rework, delays, or compromises. Integrating governance considerations earlier allows systems to be built with regulatory requirements in mind, reducing friction later in the lifecycle.

Interoperability should also be treated as a strategic priority rather than a technical detail. As the number of tools involved in governance increases, the ability to move data between systems, maintain consistency, and avoid duplication becomes critical. Organizations that plan for integration early are likely to avoid some of the complexity that emerges as environments scale.

Finally, there is a cultural dimension that should not be overlooked. Technology can enable governance, but it does not replace the need for clear accountability, defined responsibilities, and informed decision-making. RegTech works best in environments where governance is understood as part of normal operations rather than an external constraint.

These considerations are not exhaustive, but they reflect a broader shift in how organizations are beginning to think about compliance. It is becoming less about responding to external pressure and more about building systems that can operate effectively within that pressure.

Conclusion

The regulatory environment around artificial intelligence has reached a point where abstraction no longer holds up. The combination of expanding legal frameworks, increasing scrutiny, and the operational complexity of AI systems has created a situation where governance must be implemented, not just described.

Regulatory technology is emerging as the layer that makes that implementation possible. By embedding monitoring, policy enforcement, documentation, and evidence capture into the systems that organizations rely on, RegTech is turning compliance from a periodic activity into a continuous function. That shift does not eliminate the challenges associated with AI governance, but it changes how those challenges are addressed.

The developments observed in 2026 suggest that this transformation is still in progress. The tools are evolving. The standards are not fully settled. The market is still defining itself. Yet the direction is clear enough to draw a conclusion: organizations that invest in making governance operational are likely to find themselves better prepared for the demands ahead, while those that delay may face increasing difficulty as expectations continue to rise.

As AI systems become more capable and more widely deployed, the question of how they are governed will shape not only regulatory outcomes but also trust in the systems themselves. In that context, RegTech is not simply a category of software. It is becoming part of the infrastructure that determines how confidently organizations can operate in an environment where speed, complexity, and accountability are increasingly intertwined.

References

1. Grand View Research. RegTech Market Size, Share & Trends Report, 2025–2033.
2. Gartner. “Global AI Regulations Fuel Billion-Dollar Market for AI Governance Platforms,” February 17, 2026.
3. European Commission. “Artificial Intelligence Act (AI Act).”
4. Deloitte. AI Governance and Regulatory Technology Trends, 2025–2026.
5. McKinsey & Company. Risk and Compliance in the Age of AI, 2025.
6. Gartner. Market Guide for AI Governance Platforms, 2026.

Frequently Asked Questions About AI RegTech and the 2026 Governance Landscape

Why is RegTech becoming critical for AI governance in 2026?

Regulatory expectations have moved from policy-level alignment to operational proof. Organizations are increasingly expected to demonstrate how AI systems are monitored, controlled, and documented on a continuous basis. RegTech provides the infrastructure to automate these processes, making continuous compliance possible rather than relying on periodic reviews.

How do AI governance platforms differ from traditional GRC systems?

Traditional GRC systems are designed for static controls and periodic audits, while AI governance platforms are built for dynamic systems that evolve over time. They integrate monitoring, model tracking, and policy enforcement directly into operational workflows, allowing compliance to be maintained continuously rather than assessed after the fact.

Can RegTech fully automate AI compliance?

RegTech can automate significant portions of compliance, including monitoring, documentation, and regulatory mapping, but it does not remove the need for human oversight. Interpretation of regulatory requirements, risk judgment, and accountability decisions still require human involvement, especially in complex or high-risk scenarios.

What risks remain even after adopting RegTech solutions?

Key risks include over-reliance on vendor tools, integration gaps between systems, and evolving regulatory standards that may outpace current platform capabilities. Organizations must continuously validate their governance systems and avoid assuming that automation alone guarantees compliance.

How should organizations approach RegTech adoption strategically?

A structured approach typically begins with building a centralized inventory of AI systems, followed by integrating governance controls into system design and ensuring interoperability across tools. The goal is not just tool adoption, but creating a cohesive environment where compliance is embedded into everyday operations.
