Bias has a way of becoming visible at the worst possible moment. Not during procurement decks. Not during internal demos. Usually when an AI system has already been deployed, a pattern has hardened, and somebody outside the product team starts asking a question the organization should have asked much earlier: who is being treated differently, and why? In lending, hiring, healthcare, insurance, fraud detection, and public-sector decision systems, that question does not stay theoretical for long. It turns into regulatory scrutiny, reputational damage, legal exposure, internal distrust, and in some cases a much more uncomfortable realization that the model was working exactly as built, yet still producing outcomes nobody can defend in public.

That is the problem with the lazy language often used around fairness in AI. People talk about bias as if it were an abstract moral defect that can be handled with broad principles, a few model cards, or a statement about responsible innovation. In practice, bias is operational. It sits inside data collection choices, label definitions, proxy variables, feature engineering, evaluation benchmarks, threshold settings, feedback loops, and deployment conditions. It shows up when a model ranks one group differently from another for reasons the team did not test carefully enough, or did test and could not explain honestly. Responsible AI teams know this already. What they usually struggle with is not recognizing that bias matters. The harder part is building a repeatable audit process that is rigorous enough to withstand internal challenge, regulatory inspection, and the far less forgiving test of real-world use.

That is why algorithmic bias auditing matters now in a much more practical sense than it did a few years ago. The conversation has moved past generic commitments to fairness. Teams are being asked to produce evidence. For high-risk systems in particular, the burden is no longer satisfied by saying bias is monitored somewhere in the governance stack. The real question is whether there is a documented process for identifying discriminatory outcomes, measuring disparity, tracing likely causes, applying mitigation, and recording what remains unresolved after intervention. Once AI systems move from experimental use into consequential environments, bias auditing stops being a nice internal discipline and starts looking much more like baseline infrastructure.

I have noticed that many teams still approach audits as one-off exercises, often triggered by procurement pressure, a customer questionnaire, an internal review, or a press cycle that suddenly makes fairness look urgent again. That usually leads to shallow work. A metric is selected because it is easy to calculate, a subgroup comparison is run against incomplete data, a mitigation technique is tried, and the result is packaged into a reassuring slide. Then the model drifts, the user population changes, or a deployment setting introduces a new distortion that was never visible in the lab. The audit exists on paper, but the risk remains in production. A serious bias audit has to resist that pattern. It has to be repeatable, explainable, and connected to the lifecycle of the system rather than treated as a ceremonial checkpoint.

There is also a deeper reason this topic deserves more discipline than it usually gets. Bias auditing is one of the few places in AI governance where technical work and accountability work are forced to meet each other directly. A fairness metric on its own solves nothing. A policy statement on its own solves nothing. The work becomes meaningful only when data scientists, governance leads, compliance teams, product owners, and domain specialists are all willing to examine how a system behaves across groups, what trade-offs are being accepted, which harms remain plausible, and how those decisions will be documented if challenged later. That is why weak auditing tends to fail in predictable ways. It is often either technically narrow and politically naive, or procedurally polished but analytically thin. Good auditing refuses that split.

What follows is based on how teams actually struggle with this in practice, not how it is usually presented in slide decks or policy documents. The focus stays on the parts teams end up needing once things move beyond theory: what kinds of bias need to be distinguished from one another, what regulatory and standards frameworks are pushing teams toward more formal audit processes, how to structure an audit from scoping through reporting, which fairness metrics are useful and when they are misleading, how mitigation should be documented, and what continuous auditing looks like once a model is live. I will also include a practical Bias Audit Procedure and Reporting Template because a lot of teams do not need another abstract essay on fairness; they need a structure they can adapt, defend, and use.

It would be unrealistic to expect bias auditing to eliminate every fairness problem in AI. It cannot. Some trade-offs are real, some datasets are incomplete, some protected attributes are unavailable or legally sensitive, and some harms only become legible after deployment. But that is not an argument for weaker audits. It is an argument for audits that are more honest, more technically grounded, and more tightly integrated with governance. A model does not become trustworthy because a team says it was reviewed. Trust starts to become credible when the review process is specific enough to expose uncomfortable results, disciplined enough to repeat them, and mature enough to record what could not be fixed neatly.

Understanding Algorithmic Bias and Why Auditing Matters

One thing that becomes obvious when you look closely is how loosely the word bias is used inside organizations. What teams call bias usually refers to several different failure modes that can appear at different stages of the AI lifecycle and require different forms of investigation. If those distinctions are blurred too early, the audit becomes messy before it has even started. A dataset imbalance is treated like a model architecture problem. A deployment effect is treated like a labeling issue. A fairness metric is chosen to answer the wrong question. Then the team wonders why the results feel inconclusive.

Historical bias is one of the most familiar forms, but even that label is used too casually. In practice it refers to the way social inequalities, institutional preferences, and discriminatory patterns are already embedded in the data used to train or validate a system. Hiring data that reflects years of skewed recruitment, lending histories shaped by exclusionary practices, or healthcare utilization records that mirror uneven access do not become neutral simply because they are digitized and fed into a model. The system can learn those patterns faithfully and still reproduce outcomes that are unacceptable. Auditing for historical bias therefore cannot stop at checking class distribution. It has to involve a more uncomfortable question about what the dataset is actually a record of.

Measurement bias is different. Here the problem is not necessarily that the world being measured is unfair, but that the variables, labels, or collection methods are poor proxies for what the system claims to represent. In clinical systems, for example, cost or utilization is sometimes mistaken for need. In fraud detection, prior flags may be treated as clean indicators of wrongdoing when they actually reflect uneven enforcement attention. In education or employment settings, performance labels may hide subjective judgments that vary by evaluator or group. If the target itself is distorted, highly accurate modeling can still produce deeply unfair results. Responsible audit work has to be willing to inspect the validity of the target variable, not only the statistical behavior of the trained model.

Representation bias appears when some populations are insufficiently present, poorly distributed, or unevenly described in the data. This is often where teams begin because it is comparatively visible, but even here superficial analysis is common. It is not enough to confirm that a protected group appears in the dataset. The harder question is whether that group appears in the right contexts, in enough volume, with sufficient label quality, and in combinations that matter operationally. A subgroup may exist in aggregate counts and still be effectively invisible once the model is deployed across intersections of age, region, income, disability, or language. That is why intersectional analysis matters more than many teams admit. Bias is often easiest to hide inside averages.

Algorithmic or aggregation bias is usually introduced later in the pipeline. The model may optimize for a global objective that looks acceptable overall but creates systematic degradation for particular groups. A single threshold, a single loss function, or a single ranking strategy may compress populations with different error tolerances and different real-world consequences into one general model behavior. This is one of the reasons fairness audits cannot be reduced to data audits. Even a relatively balanced dataset can produce unequal outcomes if the optimization logic privileges one performance trade-off over another. A team might celebrate headline accuracy while missing a much more consequential disparity in false positives, false negatives, calibration, or ranking exposure.

Evaluation bias and deployment bias push the problem even further. Evaluation bias appears when test sets, benchmark criteria, or chosen metrics fail to represent the actual use environment. A model can appear fair in the sandbox because the benchmark was narrow, the subgroup definitions were shallow, or the fairness metric selected did not map to the real harm scenario. Deployment bias appears when the surrounding conditions of use distort the meaning of the output. A model may have been designed for one workflow but inserted into another, or used by decision-makers whose behavior amplifies group disparities the development team never modeled. In those cases the problem is not simply in the training pipeline. It sits in the socio-technical system around the model, which is precisely why audit work cannot be confined to the notebook.

These distinctions matter because different forms of bias create different risks. Some are tied to direct discrimination, where protected characteristics are used explicitly or effectively. Others emerge through proxy variables, ranking behavior, or feedback loops that create indirect discrimination without ever naming the affected group. Some harms are visible at the individual level; others only become measurable as group disparity over time. A serious audit has to know which problem it is trying to detect. Otherwise the team ends up performing fairness theater: a few metrics, a few charts, and no genuine confidence that the model’s behavior has been interrogated in the way regulators, affected users, or internal reviewers would expect.

Bias auditing matters because it forces teams to move from intuition to evidence. It turns vague concern into testable claims. It creates a structure for asking whether a model behaves differently across groups, whether the disparity is acceptable under the use case, whether the source of the problem can be isolated, and whether a mitigation changed the result in a meaningful way. That is the technical side. The governance side matters just as much. Once the audit is documented, the organization has a record of what was tested, what was found, what was changed, and what residual risk remains. In the current environment, that kind of record is no longer optional window dressing. It is one of the few ways a team can demonstrate that fairness was treated as an engineering and governance problem rather than a marketing adjective.

Regulatory and Standards Landscape for Bias Auditing

Bias auditing did not become a priority because teams suddenly became more concerned about fairness. It became unavoidable because regulatory expectations, procurement scrutiny, and internal risk governance started converging on the same demand: show evidence that your system does not produce discriminatory outcomes, and show how you know[1]. In the European context, that expectation is now formalized in ways that leave less room for interpretation than many teams are used to. High-risk AI systems are expected to demonstrate that data governance practices include examination for possible biases and that appropriate measures are in place to detect, prevent, and mitigate discriminatory effects. Those requirements are not written as philosophical aspirations. They are tied to documentation, testing, and ongoing monitoring obligations that can be inspected.

It is tempting to read those requirements narrowly, as if they only apply to teams building systems that fall clearly into high-risk categories. That reading misses how the landscape is evolving. Even outside formal high-risk classification, organizations are being asked by partners, regulators, and internal governance bodies to demonstrate similar capabilities. Bias auditing is becoming a baseline expectation rather than a specialized exercise reserved for a few regulated use cases. The difference is that in high-risk systems, failure to demonstrate proper auditing carries more explicit legal consequences, including fines, restrictions on deployment, or mandatory corrective actions. That tends to focus attention quickly.

The requirements associated with data governance are particularly relevant because they push teams to confront bias at the earliest stages of the lifecycle. It is not enough to audit the model output after training and hope that mitigation can be applied retroactively. There is an expectation that datasets themselves are examined for representativeness, completeness, and potential sources of bias that could affect fundamental rights[2]. That means teams need to be able to explain how data was collected, what populations are included or excluded, how labels were assigned, and what limitations remain. A model audit without a credible data audit behind it is unlikely to satisfy serious scrutiny.

Standards frameworks reinforce the same direction from a different angle. The NIST AI Risk Management Framework treats fairness as one of the core characteristics of trustworthy AI, alongside validity, reliability, and transparency[3]. What matters in practice is that fairness is not treated as an isolated dimension. It is integrated into risk management processes that require identification, measurement, mitigation, and ongoing monitoring. That alignment matters because it pushes teams to embed bias auditing into broader governance structures rather than treating it as a standalone technical check. If fairness is framed as risk, then auditing becomes part of how risk is assessed, documented, and controlled.

Emerging management system approaches add another layer. AI governance standards increasingly expect organizations to demonstrate not only that they can perform audits, but that those audits are repeatable, controlled, and integrated into quality management processes. That introduces requirements around versioning, change management, documentation controls, and internal review. A bias audit that cannot be reproduced or traced across model versions does not meet that bar. The implication is subtle but important: bias auditing is moving from a discretionary activity to something that looks much closer to internal audit in other regulated domains.

There is also a shift in how enforcement is likely to work in practice. It is not realistic to assume that regulators or external reviewers will rerun every model and dataset from scratch. What they will examine is the audit trail. They will look at whether the organization defined the relevant protected attributes, whether appropriate fairness metrics were selected, whether results were recorded transparently, whether mitigation decisions were justified, and whether residual risks were acknowledged rather than ignored. In that sense, the quality of the audit documentation becomes almost as important as the technical work itself. Poor documentation can undermine otherwise solid analysis, while strong documentation can make the reasoning behind difficult trade-offs visible and defensible.

Another point that is often underestimated is the role of deployers versus providers. Organizations that build models are expected to perform pre-deployment bias testing and documentation. Organizations that deploy those models, particularly in high-risk contexts, are expected to monitor performance in real use conditions. That creates a two-layer responsibility. A provider may demonstrate that a system performs within acceptable fairness bounds under certain assumptions. A deployer may discover that those bounds shift once the system interacts with a different population, a different workflow, or a different decision environment. Bias auditing, therefore, does not end at deployment. It extends into post-market monitoring, where drift, feedback loops, and usage patterns can reintroduce disparities that were not visible earlier.

When these regulatory and standards pressures are combined, the conclusion is difficult to avoid. Bias auditing is no longer something teams can perform informally or sporadically. It needs to be structured, documented, and aligned with recognized frameworks. That does not mean every organization needs a complex, resource-intensive process from day one. It does mean that whatever process exists must be credible, explainable, and capable of evolving as expectations tighten. The alternative is to treat audits as a compliance afterthought and discover, too late, that the evidence is not strong enough when it is finally requested.

Step-by-Step Process for Conducting an Algorithmic Bias Audit

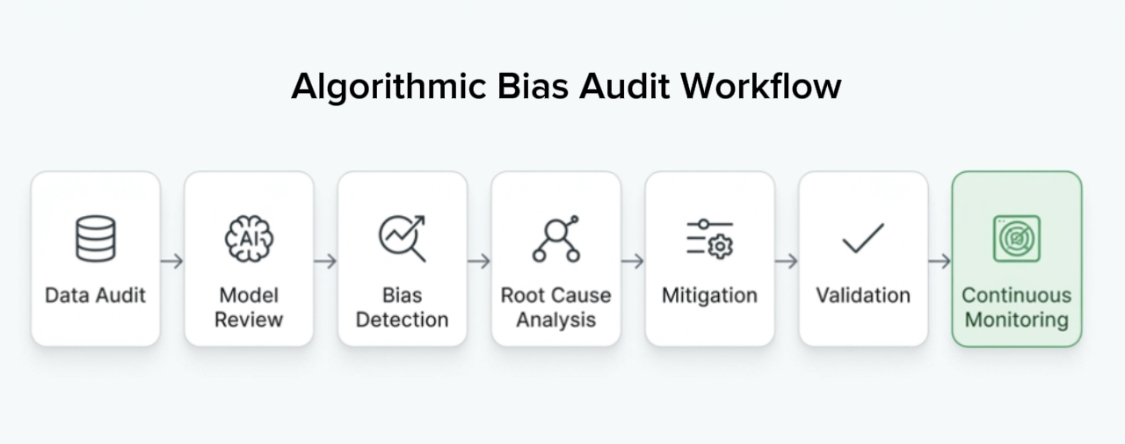

In practice, most bias audits don’t fail because teams lack technical skill. They fail because the process is unclear or applied differently each time. A few checks are performed, results are noted, and then the work stops without a clear sense of whether the right questions were asked in the right order. A more disciplined approach treats the audit as a sequence of steps that can be repeated across models and over time. The sequence does not need to be rigid, but it does need to be coherent enough that another team could understand what was done and why.

The first step is defining scope, objectives, and governance. This sounds administrative, but it shapes everything that follows. The team needs to decide which system is being audited, which decisions or outputs are in scope, which protected attributes are relevant, and what level of risk the use case carries. In some environments, this also involves defining who is accountable for the audit, who provides input, and who signs off on the results. Without that clarity, later disagreements about metrics, thresholds, or mitigation strategies become harder to resolve because there is no agreed reference point.

Data auditing comes next, and it is usually where the first meaningful insights appear. This step involves examining the dataset for representation issues, label inconsistencies, missing values, and potential sources of bias. Basic statistical summaries are useful, but they are rarely sufficient. What matters more is how the data behaves across relevant subgroups. If the dataset includes a protected attribute, distributions can be compared directly. If it does not, the team may need to consider proxy analysis or acknowledge that certain dimensions cannot be measured directly. This is also the stage where teams often realize that the data does not support the level of fairness analysis they initially assumed was possible.

Model examination follows, focusing on how the system uses the available data. Feature importance analysis, model coefficients, or explainability techniques such as SHAP or LIME can help identify whether certain variables are acting as proxies for protected attributes. This step is not about proving that the model is biased in a legal sense. It is about understanding which parts of the model behavior might contribute to differential outcomes. A variable that appears innocuous on the surface can have a disproportionate effect on certain groups once the model is trained.

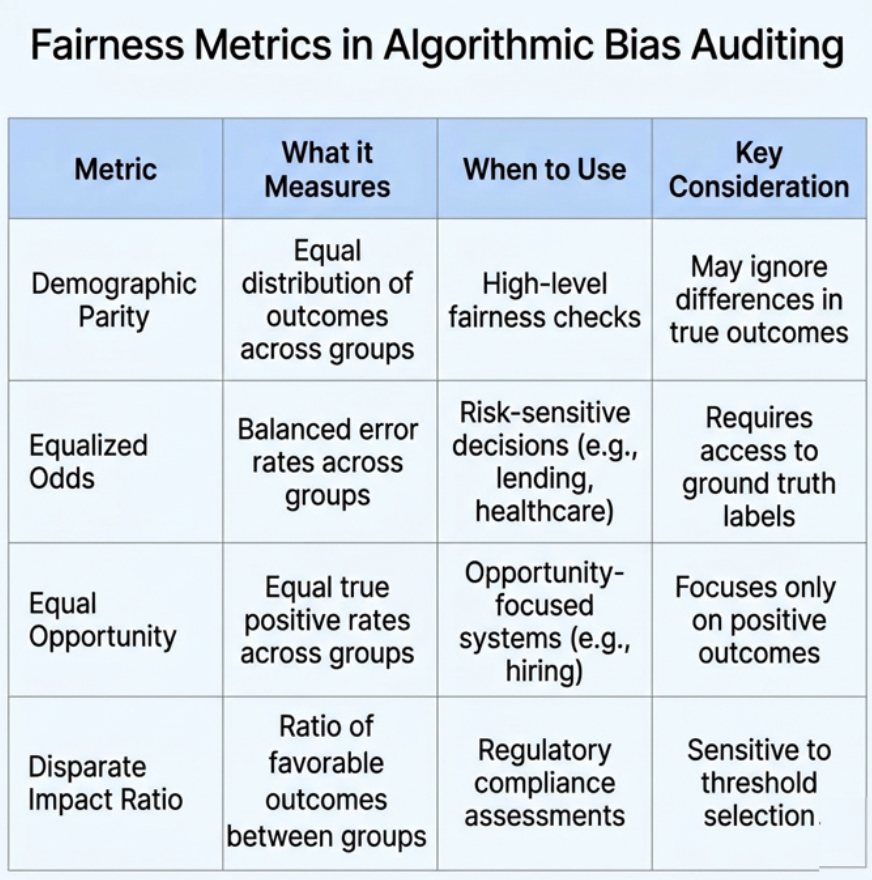

Bias detection and measurement is where formal fairness metrics are applied. This is often treated as the core of the audit, but it only makes sense if the earlier steps were done carefully. Metrics such as demographic parity, equalized odds, equal opportunity, or disparate impact ratios can reveal differences in outcomes across groups[4]. Each metric captures a different notion of fairness, and none of them is universally appropriate. The choice of metric should be tied to the use case and the type of harm the team is trying to prevent. A lending model, for example, may prioritize different fairness constraints than a medical triage system. What matters is that the selection is justified and documented.

Once disparities are identified, the audit moves into root cause analysis. This is often the most difficult part because it requires moving beyond the surface metric and asking why the disparity exists. Is it driven by data imbalance, label noise, model architecture, feature selection, or external factors in the deployment environment? Techniques such as ablation studies, subgroup analysis, and counterfactual testing can help isolate contributing factors. Without this step, mitigation becomes guesswork.

Mitigation strategies are then applied based on the identified causes. These can operate at different stages of the pipeline. Pre-processing methods attempt to adjust the dataset before training, for example through reweighting or resampling. In-processing methods modify the training objective to incorporate fairness constraints. Post-processing methods adjust the model outputs, for example by changing decision thresholds for different groups. Each approach involves trade-offs, particularly between fairness and other performance metrics. Those trade-offs need to be examined openly rather than hidden behind a single aggregate score.

Validation and testing ensure that the applied mitigation has the intended effect. This is not limited to re-running the same metrics on the same data. It may involve cross-validation, stress testing on different subgroups, or simulation of deployment conditions. In some cases, teams also introduce adversarial testing to see how the model behaves under edge cases or unusual inputs. The goal is to avoid a situation where a mitigation appears effective under one set of assumptions but fails under slightly different conditions.

The final step in the core process is documentation, reporting, and preparation for ongoing monitoring. The audit results need to be recorded in a way that captures the methodology, the metrics used, the findings, the mitigation actions taken, and any residual risks. This documentation is what allows the organization to demonstrate accountability later. It also forms the basis for continuous auditing once the system is deployed, where the same steps may need to be repeated as data distributions shift and new patterns emerge.

The Bias Audit Procedure and Reporting Template

One pattern shows up again and again: even when the technical work is solid, the output is hard to reuse. Results sit in notebooks, scattered dashboards, or internal slides that make sense only to the people who produced them. When someone new joins the team, or when an external review is requested, the work has to be reconstructed from fragments. That is not a technical failure. It is a documentation failure. A bias audit that cannot be reconstructed, challenged, or extended loses most of its value the moment the original context disappears.

A structured reporting template solves that problem in a very practical way. It forces the team to move from isolated findings to a coherent narrative that explains what was tested, how it was tested, what was found, and what decisions were made in response. It also creates a consistent format that can be used across models, which makes comparison over time possible. Without that consistency, it becomes difficult to tell whether fairness is improving, degrading, or simply changing shape as systems evolve.

At the top of the report, an executive summary should capture the essential findings in a way that is intelligible beyond the technical team. This is not a place for vague reassurance. It should state clearly whether disparities were detected, how significant they are, which groups are affected, and what actions were taken. If residual risks remain, they should be acknowledged explicitly rather than buried in later sections. Decision-makers rarely read full reports in detail, so this summary becomes the primary interface between the audit and governance decisions.

The methodology section should describe how the audit was conducted, including the scope, the datasets used, the protected attributes considered, and the fairness metrics applied. This is where transparency begins to matter. If a metric was selected because it aligns with a particular notion of fairness, that reasoning should be visible. If certain attributes could not be included due to legal or data availability constraints, that limitation should also be documented. A report that hides its assumptions is difficult to defend under scrutiny.

Data and model details form the backbone of the report. This includes descriptions of the training and evaluation datasets, any preprocessing steps, and the model architecture or training process. The goal is not to reproduce the entire pipeline, but to provide enough information that the context of the audit is clear. If the dataset has known limitations, such as underrepresentation of certain groups or known labeling issues, those limitations should be recorded here rather than treated as background noise.

The results section should present the fairness metrics in a structured and interpretable way. Tables are often more effective than narrative here, especially when multiple metrics and subgroups are involved. Visualizations can help, but they should not replace precise values. It is important to avoid selective reporting. If multiple metrics were calculated, they should be shown together, even if they tell slightly different stories. That tension is part of the reality of fairness evaluation, and hiding it does not make the problem go away.

Root cause analysis should follow directly from the results. This section is where the team explains why the observed disparities are likely occurring. It may draw on feature importance analysis, subgroup comparisons, or other investigative techniques. The explanation does not need to be definitive in every case, but it should be grounded in evidence rather than speculation. If multiple contributing factors are possible, that uncertainty should be acknowledged.

Mitigation actions and their effects should then be documented. This includes a description of what changes were made, how they were implemented, and how the fairness metrics changed as a result. Ideally, before-and-after comparisons are included so that the impact of the mitigation is visible. If trade-offs were introduced, such as a reduction in overall accuracy or a change in calibration, those should be recorded as well. Governance decisions often hinge on whether those trade-offs are acceptable.

Residual risks and recommendations complete the core of the report. Even after mitigation, it is common for some disparities to remain. Pretending otherwise undermines credibility. Instead, the report should describe what risks remain, under what conditions they might become more significant, and what additional steps could be taken if required. Recommendations may include further data collection, changes to deployment practices, or more frequent monitoring for specific subgroups.

Finally, sign-offs and versioning information anchor the report within the organization’s governance process. Who reviewed the audit, who approved it, and when it was conducted are not minor details. They establish accountability and make it possible to trace decisions over time. Appendices can include raw data summaries, code snippets, or additional analysis that supports the main findings without overloading the primary narrative.

A template structured along these lines becomes more than a reporting tool. It becomes part of the organization’s audit infrastructure. Teams can adapt it to their specific context, but the core sections remain stable enough to support comparison, review, and continuous improvement. Over time, this consistency is what allows bias auditing to move from an occasional exercise to an embedded practice.

Tools, Techniques, and Fairness Metrics in Practice

There are more than enough tools for bias auditing, and strangely, that’s part of the problem. Teams often experiment with multiple libraries, generate a wide range of metrics, and then struggle to interpret what the results actually mean. The presence of tools can create a false sense of completeness. Running a fairness library on a dataset does not, on its own, constitute a meaningful audit. The value of these tools depends on how they are used and how their outputs are interpreted.

Open-source libraries such as AIF360, Fairlearn, and Aequitas provide a starting point for many teams. They offer implementations of common fairness metrics and, in some cases, mitigation algorithms. Visualization tools such as the What-If Tool or fairness dashboards can make subgroup comparisons more accessible. These tools are useful because they reduce the friction of performing initial analyses. They allow teams to move quickly from raw data to measurable disparities. What they do not provide is judgment. That still has to come from the team.

Fairness metrics themselves deserve more careful treatment than they often receive. Demographic parity, for example, examines whether outcomes are distributed equally across groups. Equalized odds focuses on error rates, requiring that false positives and false negatives are balanced across groups. Equal opportunity narrows that focus to true positive rates. Disparate impact ratios compare the rate of favorable outcomes between groups. Each of these metrics captures a different aspect of fairness, and in many cases they cannot all be satisfied simultaneously. Choosing one metric over another is not just a technical decision. It reflects a judgment about which type of disparity is most relevant to the use case.

Advanced techniques are becoming more relevant as systems grow in complexity. Intersectional analysis examines how combinations of attributes affect outcomes, rather than treating each attribute independently. Causal approaches attempt to distinguish correlation from causation, which can be important when proxy variables are involved. In generative systems, bias can appear in outputs that are less structured than classification or ranking results, requiring different evaluation strategies. These developments expand what auditing can cover, but they also increase the burden on teams to understand the limits of each method.

One recurring issue is metric interpretation. A fairness metric rarely answers a question directly. It provides a signal that needs to be interpreted in context. A disparity in false positive rates might be acceptable in one domain and unacceptable in another. A model might satisfy one metric while violating another. Without a clear link between the metric and the real-world harm scenario, the numbers can be misleading. That is why metric selection should always be tied back to the use case and documented as part of the audit process.

Another issue is over-reliance on a single tool or metric. Teams sometimes settle on a preferred library or fairness definition and apply it across all models. This creates consistency, but it can also obscure important differences between use cases. A credit scoring model, a medical diagnosis system, and a hiring algorithm operate under different constraints and have different implications for affected individuals. A flexible approach that allows for different metrics and techniques, while maintaining a consistent audit structure, tends to produce more meaningful results.

In practice, effective auditing combines tools, metrics, and judgment. The tools provide the means to calculate and visualize disparities. The metrics provide a structured way to describe those disparities. Judgment connects the results to the real-world context in which the system operates. Without that connection, bias auditing risks becoming an exercise in numerical reporting rather than a process that informs responsible decision-making.

When Fairness Metrics Conflict: Making Defensible Decisions Under Constraint

One thing that becomes clear very quickly is that fairness rarely comes with a single correct answer. Teams often discover, sometimes uncomfortably, that improving one fairness metric can worsen another. A model adjusted to equalize error rates across groups may begin to diverge in outcome distribution. A system tuned for demographic parity may introduce distortions in scenarios where underlying conditions differ meaningfully between populations. These tensions are not edge cases. They are built into the mathematics of fairness itself.

This is where many audits lose their credibility. The team identifies multiple fairness signals, selects one that is easiest to optimize or easiest to explain, and presents the result as if it resolves the broader issue. What is missing is a clear account of the alternatives that were considered and the trade-offs that were accepted. In high-stakes systems, that omission becomes difficult to defend. A regulator, auditor, or affected stakeholder is not only interested in the final metric. They are interested in the reasoning that led to its selection.

A more defensible approach treats fairness evaluation as a constrained decision problem rather than a single-metric optimization. The team begins by identifying which types of harm are most relevant to the use case. In a medical setting, false negatives may carry a different weight than false positives. In lending, the distribution of approvals across groups may be scrutinized differently from error symmetry. Once those priorities are established, the choice of fairness metric becomes an explicit reflection of those priorities rather than an arbitrary technical preference.

Documentation plays a critical role here. If multiple metrics were evaluated, the results should be recorded side by side, even if only one is used for optimization. If trade-offs were observed, they should be described clearly. If a particular metric was selected because it aligns with regulatory expectations or domain-specific risk, that reasoning should be visible. This does not eliminate disagreement, but it changes the nature of the conversation. Instead of debating whether bias exists, stakeholders can examine how it was measured and how decisions were made in response.

Over time, this approach creates a more stable foundation for auditing. Teams become less focused on finding a single metric that “proves” fairness and more focused on building a consistent decision logic that can be applied across models. That consistency matters because fairness debates rarely disappear after deployment. They evolve. A system that is defensible today is one that can explain not only its outputs, but the reasoning behind how those outputs were evaluated.

Common Pitfalls, Failure Patterns, and Why Audits Quietly Break Down

Bias auditing almost never fails in obvious ways. There is usually no single catastrophic mistake that makes a model visibly discriminatory overnight. What tends to happen instead is a slow erosion of rigor. The audit exists, metrics are calculated, reports are written, and yet the system continues to produce outcomes that raise questions the audit was supposed to answer. When that happens, the issue is not that the team ignored bias entirely. It is that the audit process did not hold under real operational pressure.

One of the most common failure patterns is proxy blindness. Teams remove explicit protected attributes and assume the problem has been addressed. In reality, other variables continue to encode similar information. Geography, income patterns, education history, behavioral signals, or even interaction data can act as indirect stand-ins for protected characteristics. If the audit does not actively test for proxy effects, the model can produce disparate outcomes without ever referencing the attribute the team believes it has excluded. This is not a rare edge case. It is one of the default ways bias persists in production systems.

Another recurring issue is incomplete subgroup analysis. Many audits examine only a small number of high-level categories, often because those are the attributes readily available in the dataset. That approach can mask disparities that emerge at intersections. A model may appear balanced when comparing two broad groups, while producing significantly different outcomes for subgroups defined by combinations of attributes. Intersectional analysis is not always easy, especially when data is limited, but ignoring it creates a false sense of fairness that tends to collapse under closer inspection.

Metric gaming is a subtler problem. Once a team selects a fairness metric, there is a temptation to optimize toward that metric in isolation. This can lead to models that perform well on the chosen measure while introducing other forms of disparity that are not being tracked. For example, adjusting thresholds to equalize one error rate may increase another in a way that is less visible but more harmful. An audit that focuses too narrowly on a single metric risks producing results that look compliant but fail under a broader evaluation.

There is also a tendency to treat the audit as a one-time exercise. A model is evaluated before deployment, the results are documented, and the process ends. In practice, data distributions shift, user behavior changes, and feedback loops alter the system’s inputs over time. A model that appears fair at one point can drift into a different state as conditions evolve. Without a plan for continuous auditing, the original audit becomes a historical artifact rather than an active control.

Organizational factors contribute as well. Review timelines, performance incentives, and communication gaps between teams can all weaken the audit process. If delivery speed is prioritized over careful evaluation, audits may be rushed or reduced to minimal checks. If data scientists, product teams, and governance functions are not aligned, important findings may not translate into action. A technically sound audit can still fail if the organization does not create the conditions for its results to influence decisions.

Another pattern that deserves attention is what some practitioners have started to call audit washing. This occurs when the presence of an audit is used as evidence of responsibility, regardless of its depth or effectiveness. The audit exists, therefore the system is considered safe. In reality, the audit may have been narrow in scope, limited in metrics, or disconnected from deployment conditions. This is not simply a communication issue. It is a governance failure, because it allows weak processes to stand in for meaningful control.

Addressing these pitfalls requires more than technical fixes. It requires a shift in how audits are positioned within the organization. They need to be treated as part of the system’s lifecycle, supported by appropriate resources, and connected to decision-making processes. When audits are embedded in this way, they are more likely to surface uncomfortable results early, when there is still time to respond. When they are treated as formalities, those results tend to appear later, under less forgiving circumstances.

What an Audit Cannot See: Blind Spots That Require Judgment

Even the most carefully structured bias audit operates within limits. Some of those limits are technical, others are legal, and some are simply a function of how real-world systems behave. It is possible to measure disparities across known groups, test multiple fairness metrics, and apply mitigation strategies, and still miss forms of harm that only become visible in use. Recognizing these blind spots does not weaken an audit. It makes it more honest.

One common limitation is the absence of protected attribute data. In many environments, collecting or using such attributes is restricted, incomplete, or unreliable. Teams may attempt to infer group membership through proxies, but those approaches introduce their own uncertainties. The result is that certain disparities cannot be measured directly, even though they may exist in practice. An audit that acknowledges this limitation is more credible than one that quietly assumes it does not matter.

Another blind spot appears in how systems are used. A model may be evaluated under controlled conditions and behave within acceptable fairness bounds, yet produce different outcomes once integrated into a decision process. Human interpretation, workflow design, and institutional incentives can all amplify or dampen disparities in ways that are difficult to capture during development. In these cases, the boundary of the audit extends beyond the model itself into the broader system in which it operates.

Temporal effects introduce further complexity. Data distributions shift, user populations change, and feedback loops alter the inputs a model receives. An audit captures a snapshot of behavior at a particular moment, but it cannot guarantee that the same patterns will hold indefinitely. Continuous monitoring helps, but it does not eliminate the need for periodic re-evaluation and contextual judgment.

What these limitations suggest is not that auditing is insufficient, but that it cannot function in isolation. Technical measurement needs to be complemented by domain knowledge, stakeholder input, and governance processes that can interpret results in context. The most effective teams treat audits as one source of evidence among several, rather than as a final answer. That perspective allows them to respond more flexibly when new risks emerge that were not fully visible during initial evaluation.

Case Observations and Lessons from Real Deployments

Bias auditing becomes easier to understand when viewed through real deployments rather than abstract examples. In financial services, for instance, credit scoring systems have been scrutinized for producing different approval rates across demographic groups. In several cases, audits revealed that the disparities were not driven by a single feature, but by a combination of variables that collectively acted as proxies. Mitigation required not only adjusting the model, but also revisiting the data sources and the assumptions embedded in them. The lesson was not that bias could be eliminated entirely, but that it could be reduced and made visible in a way that supported more accountable decision-making.

Healthcare systems offer a different perspective. Diagnostic and risk prediction models often rely on historical data that reflects unequal access to care. In some documented cases, models underestimated risk for populations that had historically received less treatment, because the training data used healthcare utilization as a proxy for need. Auditing these systems required questioning the validity of the target variable itself, not just the distribution of the data. The resulting changes improved equity in outcomes, but also highlighted how easily bias can be introduced through measurement choices.

Public-sector applications add another layer of complexity. Systems used for resource allocation, eligibility assessment, or enforcement decisions operate within legal and social frameworks that demand a high level of transparency. Audits in these environments often need to address not only statistical disparities, but also procedural fairness and the ability to explain decisions to affected individuals. This expands the scope of the audit beyond purely technical considerations, reinforcing the idea that bias auditing is part of a broader governance process.

There are also well-known examples where insufficient auditing led to failure. Hiring systems that favored certain profiles based on historical data, recidivism models that produced controversial risk scores, and facial recognition systems that performed unevenly across demographic groups all illustrate what happens when bias is not examined rigorously before deployment. These cases are often discussed in isolation, but they share a common feature: the audit process was either missing, incomplete, or not taken seriously enough to influence design decisions.

The most consistent lesson across these cases is that bias auditing is not about achieving perfection. It is about reducing harm, making trade-offs explicit, and creating a record that can be examined and challenged. Systems that undergo meaningful audits are not immune to criticism, but they are better equipped to respond to it. Systems that rely on superficial checks tend to face more severe consequences when issues emerge.

Measuring Audit Effectiveness and Sustaining the Process

Once a bias audit has been completed, the question becomes how to determine whether it was effective. The presence of a report is not enough. Effectiveness needs to be assessed through observable indicators that reflect how the system behaves and how the organization responds to that behavior.

One useful indicator is the change in fairness metrics over time. If mitigation steps were applied, there should be measurable differences in the relevant metrics. These changes need to be interpreted carefully, particularly when trade-offs are involved, but they provide a starting point for evaluating impact. Another indicator is the frequency and quality of audits. Regular audits that follow a consistent structure suggest that the process is embedded rather than ad hoc.

Response time to identified issues is also informative. When a disparity is detected, how quickly is it investigated and addressed? Delays can allow problems to persist and grow, especially in systems that operate at scale. Documentation quality provides another signal. Detailed, transparent reports indicate that the team is engaging seriously with the audit process, while sparse or inconsistent documentation may point to underlying weaknesses.

Over time, organizations can develop a sense of audit maturity. Early-stage processes may be reactive and limited in scope. More mature processes integrate auditing into development workflows, continuous integration pipelines, and monitoring systems. In these environments, bias auditing becomes part of how models are maintained rather than a separate activity that occurs occasionally.

Sustaining this process requires ongoing attention. Data changes, models evolve, and external expectations shift. What counted as a sufficient audit at one point may no longer meet expectations later. Teams that treat auditing as a continuous practice are better positioned to adapt to these changes without starting from scratch each time.

Future Direction: Bias Auditing in an Evolving AI Landscape

The shape of bias auditing is already changing. Earlier work focused heavily on classification models with relatively stable datasets. That environment made it possible to define clear evaluation sets, calculate a small number of fairness metrics, and draw conclusions that held long enough to inform deployment decisions. That assumption is becoming less reliable. Systems are now interacting with dynamic data streams, generating outputs that are less structured, and operating in contexts where user behavior feeds back into the model in real time. Under those conditions, a static audit has limited value.

One development is the gradual movement toward continuous auditing. Instead of treating bias as something that is checked at specific milestones, teams are beginning to integrate fairness monitoring into the same pipelines that track performance, reliability, and drift. This does not mean that every fairness question can be answered automatically. It does mean that signals can be detected earlier, and that audits can be triggered by changes in data or behavior rather than by external pressure. The technical challenge is building monitoring systems that are sensitive enough to detect meaningful changes without overwhelming teams with noise.

Another development is the expansion of auditing into new model types. Generative systems, multimodal models, and agent-based architectures introduce forms of bias that are harder to measure with traditional metrics. Outputs may be open-ended, context-dependent, or influenced by long chains of interaction. Evaluating fairness in these systems often requires new approaches, including qualitative analysis, scenario testing, and user-centered evaluation methods. The underlying principle remains the same: identify where harm can occur, measure it where possible, and document the limits of what can be measured.

Regulatory expectations are also likely to evolve. As enforcement mechanisms mature, the emphasis may shift from whether audits exist to how they are conducted and maintained. Organizations may be asked to demonstrate not only that they performed an audit, but that they acted on the findings and continued to monitor the system afterward. This raises the bar for documentation and traceability. It also increases the importance of having processes that can be explained clearly to external reviewers.

There is a broader shift as well, one that is less about specific tools and more about mindset. Bias auditing is moving from a reactive activity to something closer to design discipline. Instead of asking how to detect bias after a model is built, teams are beginning to ask how to reduce the likelihood of bias at earlier stages. This does not eliminate the need for audits. It changes their role. Audits become part of a feedback loop that informs how systems are designed, rather than a final check applied after most decisions have already been made.

Conclusion

Algorithmic bias auditing sits in a difficult place. It requires technical precision, because the work depends on data, models, and metrics that need to be handled carefully. It also requires governance discipline, because the results have implications for how systems are used, how risks are managed, and how organizations are held accountable. When either side is missing, the audit loses its value. Technical work without governance becomes difficult to interpret or act upon. Governance without technical depth becomes detached from the realities of how systems behave.

What makes bias auditing effective is not the presence of a particular tool or metric, but the structure that connects the pieces together. A clear scope, a careful data audit, a thoughtful choice of metrics, a willingness to investigate root causes, a transparent approach to mitigation, and documentation that can withstand scrutiny. None of these elements is individually complex. The challenge is maintaining all of them at once, especially as systems scale and evolve.

There is no realistic scenario in which bias auditing eliminates every form of disparity. Trade-offs exist, data is imperfect, and some risks only become visible over time. The purpose of auditing is not to create an illusion of perfection. It is to reduce harm, make decisions visible, and provide a basis for accountability. In environments where AI systems influence access to resources, opportunities, or services, that purpose is not optional.

For teams working in responsible AI, the practical step is to move from occasional checks to a repeatable process. Run an audit, document it, revisit it, and integrate it into how systems are maintained. The Bias Audit Procedure and Reporting Template discussed earlier is intended to support that shift by providing a structure that can be adapted and reused. Over time, consistency in how audits are conducted becomes one of the strongest signals that fairness is being treated as an operational concern rather than a rhetorical one.

Bias in AI systems will continue to attract attention, not because it is new, but because its consequences are becoming more visible. The teams that handle that attention well will not be the ones that claim their systems are unbiased. They will be the ones that can show how they tested, what they found, what they changed, and what they continue to watch. That level of clarity is what turns auditing from a defensive exercise into a form of credibility.

Download the Full AI Bias Audit & Governance Toolkit

This comprehensive toolkit provides a structured approach to assessing fairness, identifying bias risks, and implementing governance controls across AI systems. It is designed for teams that require a practical, end-to-end framework covering evaluation, mitigation, validation, and decision accountability.

Use this resource to standardize your audit process, document critical decisions, and support responsible AI deployment across projects and organizations.

AI Bias Audit & Governance Toolkit

Complete fairness assessment framework, mitigation workflow, and governance documentation.

References

- European Commission. Ethics Guidelines for Trustworthy AI. 2019.

https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai

↩ - European Union. EU Artificial Intelligence Act (AI Act). 2024.

https://eur-lex.europa.eu/eli/reg/2024/1689/oj

↩ - National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0). 2023.

https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf

↩ - Barocas, Solon, Moritz Hardt, and Arvind Narayanan. Fairness and Machine Learning. MIT Press.

https://fairmlbook.org/

↩

Frequently Asked Questions About Algorithmic Bias Auditing (FAQ)

What is algorithmic bias auditing in AI systems?

Algorithmic bias auditing is a structured process used to identify, measure, and mitigate discriminatory outcomes in AI systems. It involves analyzing datasets, model behavior, and decision outputs across different groups to detect disparities and ensure fairness within operational and regulatory expectations.

Is bias auditing required under the EU AI Act?

Yes. For high-risk AI systems, the EU AI Act requires providers to implement data governance and risk management practices that include identifying, preventing, and mitigating bias. Organizations must also document these processes and monitor system behavior after deployment.

Which fairness metrics are commonly used in bias audits?

Common fairness metrics include demographic parity, equalized odds, equal opportunity, and disparate impact ratio. Each metric captures a different type of disparity, and the choice depends on the use case and the type of harm the system may produce.

How often should algorithmic bias audits be conducted?

Bias audits should not be one-time exercises. They should be conducted before deployment and continuously after deployment as part of monitoring processes, especially when data distributions, user behavior, or system conditions change over time.

Can bias be completely removed from AI systems?

No. Bias cannot be fully eliminated because it often reflects underlying data limitations and real-world inequalities. However, structured auditing can significantly reduce harmful disparities, make trade-offs visible, and ensure accountability in how AI systems are designed and used.

Covering responsible AI, governance frameworks, policy, ethics, and global regulations shaping the future of artificial intelligence.