Global AI governance has reached an awkward stage. The old argument that regulation is “coming someday” no longer holds up. In Europe, the conversation has shifted into implementation, enforcement planning, and practical redesign of internal controls[1]. In the United States, companies still face a more fragmented environment, but nobody serious is treating that as a reason to stay casual[2]. The pressure is now coming from several directions at once: procurement teams asking harder questions, sector regulators expecting evidence of risk management, boards wanting assurance that expansion into new markets will not trigger expensive rework, and customers increasingly unwilling to accept the answer that an AI system was built responsibly simply because someone said so. The friction is not theoretical anymore. It shows up in deal cycles, product roadmaps, vendor reviews, and legal sign-off.

I have seen the same tension repeat across multinational organizations in different forms. A company builds one internal governance model for the European market because the EU AI Act is detailed enough to force discipline. Then it keeps a looser, more voluntary process for US operations because the legal environment looks less centralized. A third layer sits somewhere above both, drafted in broad language around trust, fairness, or accountability, often inspired by OECD principles or internal ethics statements. Each layer makes sense on its own. Together they create duplication, inconsistent documentation, conflicting control language, and the quiet risk that a system approved in one jurisdiction will have to be partially rebuilt for another. That is where cost starts to build. Not dramatic at first, but the kind that quietly compounds. Repeated review cycles. Additional testing. Parallel policy stacks. Delayed launches. Procurement friction. Executive confusion about which standard is actually governing what.

The mistake is assuming harmonization means waiting for governments to agree on a single global rulebook. That is not how this field is developing. The more realistic question is how organizations can build one governance architecture that is strong enough to travel across jurisdictions without pretending the legal differences do not matter. The most durable answers usually come from mapping intersections rather than chasing uniformity. A rights-heavy European obligation does not disappear because a US framework is voluntary. A voluntary framework does not become strategically irrelevant because it lacks statutory penalties. OECD principles do not become soft background material simply because they are not written like a regulation. In practice, each of these instruments influences design decisions, audit expectations, procurement language, and internal governance maturity in different ways.

That is why the more practical approach in 2026 is not to ask which framework matters most in the abstract. It is to understand where the major frameworks overlap, where they diverge, and which of them can serve as anchors for a governance model that will hold up under cross-border scrutiny. The EU AI Act matters because it introduces concrete obligations, especially for high-risk systems, and because its reach extends beyond organizations physically established in the Union. NIST matters because its risk management structure is practical, legible to technical teams, and increasingly influential even outside strictly American contexts. The OECD principles still matter because they continue to shape the international vocabulary of trustworthy AI and have become part of the conceptual grammar behind many newer instruments. ISO/IEC 42001 matters because its certifiable management-system structure is gaining rapid adoption in 2026, providing organizations with a practical, auditable way to embed responsible AI governance and demonstrate alignment across multiple jurisdictions. The Council of Europe Convention matters because it adds another rights-centered layer that legal and public-sector teams are already watching closely.

The organizations handling this well are usually not the ones with the loudest public statements. They are the ones that treat interoperability as an operational design problem. They inventory their systems, sort them by risk and jurisdiction, identify the controls that are common across frameworks, and then add targeted layers where the laws or expectations genuinely diverge. They also accept a fact that many teams resist for too long: if you are going to build a serious multinational AI governance program, you need a living map of obligations, not a stack of disconnected PDFs. Once that becomes clear, harmonization stops looking like an impossible legal puzzle and starts looking more like architecture.

This article takes that view seriously. The aim is not to produce another generic survey of AI rules. It is to examine how the leading frameworks in circulation actually relate to each other, where the pressure points are for multinational organizations, and how a governance model can be built to reduce duplication without flattening meaningful legal differences. The center of the analysis is a practical regulatory mapping approach that can be used to compare obligations across the EU AI Act, NIST AI RMF, OECD principles, ISO/IEC 42001, and related instruments. That matters because companies rarely fail here from lack of awareness. They fail because awareness never becomes structure.

The 2026 Global AI Governance Landscape: Fragmentation, Convergence, and the Pressure in Between

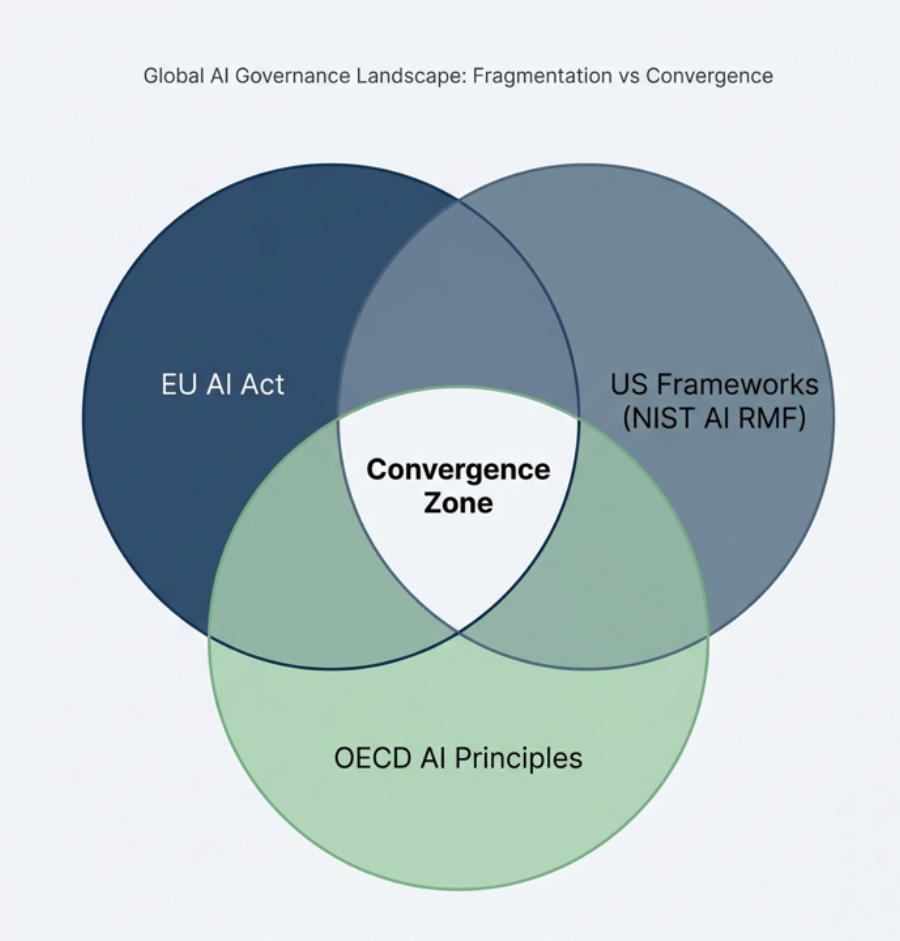

It is easy to misunderstand the current landscape by describing it as either fragmented or converging. It is both, and that tension explains most of the strategic difficulty. On one side sits a visibly uneven regulatory environment. The European Union has moved ahead with a formal, risk-based legal regime. The United States now combines a deregulatory federal stance (with active efforts to preempt many state AI laws) and continued reliance on voluntary standards, procurement expectations, and targeted sectoral oversight. The OECD remains principles-based and international rather than directly punitive. ISO standards operate through certification and management-system adoption rather than public enforcement. The Council of Europe framework brings in treaty logic tied to human rights, democracy, and the rule of law. None of these instruments is interchangeable with another. Their legal force, institutional design, and implementation pathways differ in ways that matter.

At the same time, if you look closely, the points of convergence are obvious. The language is not identical, but the recurring concerns are familiar: risk management, documentation, transparency, accountability, human oversight, technical robustness, bias and discrimination, lifecycle monitoring, and governance responsibility. The OECD principles helped establish that vocabulary early. NIST translated much of that language into a risk-management structure that technical and operational teams could work with. The EU AI Act did not simply appear out of nowhere with an unrelated philosophy. It absorbed and hardened many themes that were already circulating internationally, then attached them to legal obligations, conformity logic, and institutional enforcement. ISO/IEC 42001, in turn, offers a management-system structure that many organizations now see as a practical bridge between broad principle and internal implementation.

The European model is still the most prescriptive and the most operationally disruptive for multinational firms. Major obligations for most high-risk systems become applicable from 2 August 2026, with enforcement ramping up thereafter, although proposals for short delays to certain conformity assessments remain under discussion. The disruption is not only a matter of fines, though the financial exposure matters. It comes from the way the EU approach forces classification, documentation, testing discipline, governance assignment, and market-facing accountability in ways that many organizations can no longer postpone. Once a company builds those controls for Europe, it often discovers that it would be irrational to keep the rest of its global governance program substantially weaker. The cost of maintaining two very different maturity levels usually becomes harder to justify than lifting the rest of the organization toward a more consistent baseline. This is one reason the so-called Brussels Effect keeps resurfacing in AI conversations. Even where direct legal obligation does not apply, the operational benchmark tends to travel.

The United States creates a different kind of pressure. The absence of one comprehensive federal AI statute does not produce a vacuum so much as a layered compliance problem. NIST AI RMF remains formally voluntary, but voluntary frameworks should not be confused with optional influence. NIST language shows up in internal governance programs, public-sector expectations, vendor diligence, and corporate risk conversations because it offers something many legal texts do not: a practical operating model. State-level measures add more complexity, especially where employment, consumer protection, algorithmic discrimination, or sector-specific risk reviews are concerned. The result is not a neat alternative to the European system. It is a looser environment that still punishes organizational complacency, often through a mix of litigation exposure, enforcement risk, procurement barriers, and reputational fallout rather than one single AI code.

The OECD occupies a different position again. It would be a mistake to dismiss it as soft material for conference panels. The OECD principles still matter because they continue to shape the international baseline for what responsible AI governance is supposed to cover[3]. They also remain useful because they are broad enough to travel across jurisdictions while still specific enough to inform internal policy design. That is one reason multinational governance teams keep returning to them when they need common language across business units operating under different local legal regimes. The OECD does not solve the enforcement problem. It helps solve the coherence problem.

Then there is the layer of instruments that sit slightly outside the three frameworks most often named in business conversations but increasingly influence how harmonization is done in practice. ISO/IEC 42001 has gained attention because organizations need a management-system model that can hold policies, procedures, roles, reviews, and continuous improvement in one place. The Council of Europe Framework Convention is significant because it extends the rights conversation in a legally serious direction[6] and will matter to institutions operating close to public-sector, democratic-process, or high-impact rights contexts. G7 and GPAI processes matter less because they impose direct obligations and more because they shape the direction of interoperability debates. They are part of the reason the field now talks less about isolated national frameworks and more about translation layers between them.

The practical consequence is uncomfortable but useful: global AI governance is no longer a matter of choosing one preferred framework and ignoring the rest. Organizations have to work with a stack. Some elements in that stack are legally binding. Some are voluntary but influential. Some are managerial. Some are normative. Some are rapidly becoming procurement expectations even before they harden into law. The companies that keep asking which one is the real standard are usually asking the wrong question. The more useful question is which framework can do what inside a larger governance architecture. One may be best for legal scoping. Another may be better for risk process design. Another may work best as a common international vocabulary. Another may provide the management structure that keeps the whole program from collapsing into a document library nobody maintains.

That is the context in which harmonization becomes a serious governance task rather than a thought exercise. The problem is not only that obligations differ. It is that they operate at different levels of abstraction and are administered by different institutional logics. Trying to reconcile them through broad principle statements usually produces vagueness. Trying to reconcile them through jurisdiction-by-jurisdiction silos produces duplication. The more workable path is a mapped approach that identifies common controls, jurisdiction-specific add-ons, and the points where one framework can serve as the implementation vehicle for another. That is where the article now needs to go, because once the landscape is clear, comparison becomes unavoidable.

Deep Comparison of Core Frameworks: Where Alignment Exists and Where It Breaks

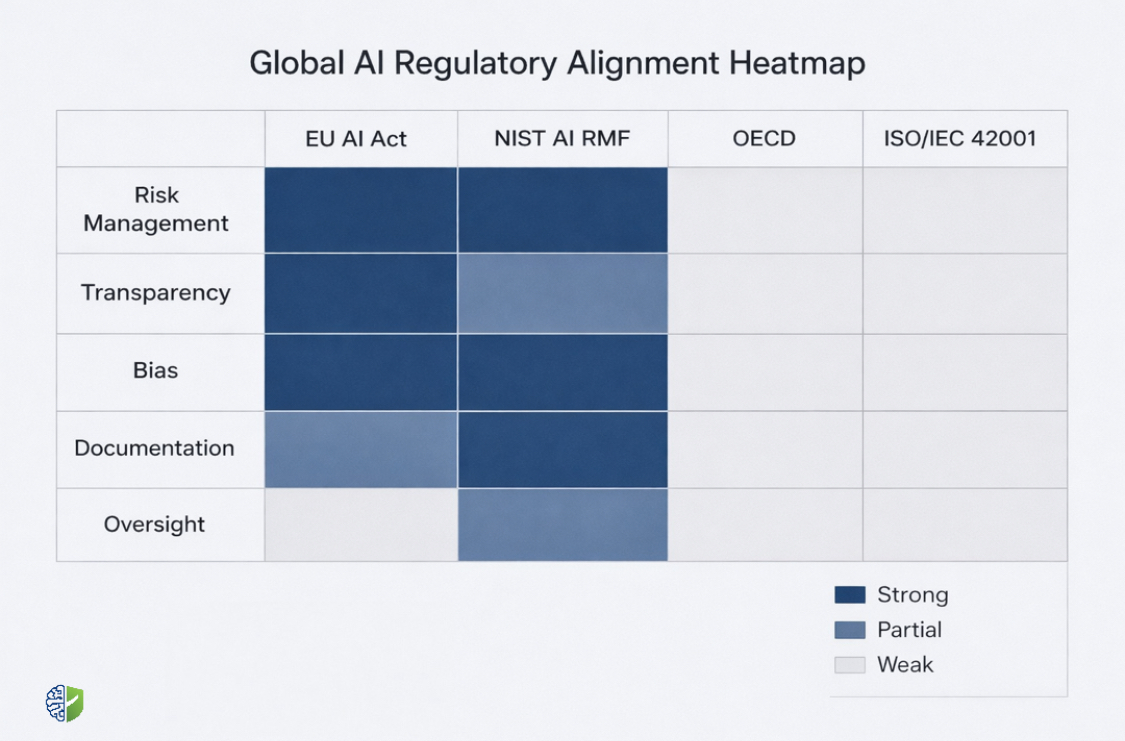

At a glance, most AI governance frameworks seem to be saying similar things. They all speak about risk, accountability, transparency, fairness, and oversight. The difficulty only becomes visible when those ideas are translated into operational requirements. That is where alignment starts to thin out and where multinational organizations begin to feel the weight of running parallel systems. The real comparison is not about language. It is about how each framework expects organizations to behave when systems are designed, deployed, monitored, and challenged.

The EU AI Act operates from a legal and classification-driven perspective. Systems are grouped into categories based on risk, and those categories trigger obligations that are not optional[1]. Documentation is not simply recommended. It is required.

The NIST AI Risk Management Framework takes a different path. It is not structured as a law, and it does not classify systems into formal legal categories. Instead, it provides a risk-based operational model built around four functions: Govern, Map, Measure, and Manage[2].

The OECD AI Principles sit even higher in abstraction. They define what responsible AI should achieve rather than prescribing how each requirement must be implemented. Concepts like human-centered values, transparency, robustness, and accountability are articulated in a way that can be adopted across legal systems and industries. This flexibility is precisely why they remain influential. They provide a shared language that allows organizations operating across jurisdictions to anchor their governance philosophy in something broadly recognized. At the same time, they do not solve the problem of operational detail. They depend on other frameworks to translate principles into controls.

ISO/IEC 42001 introduces another layer entirely. It does not compete with legal frameworks or high-level principles. It focuses on management structure[4]. The logic is familiar to organizations that have worked with ISO standards in other domains. Policies, roles, responsibilities, monitoring, internal audits, and continuous improvement are organized into a system that can be certified and maintained. This makes it particularly useful as a bridge between legal obligations or broad principles on one side and day-to-day internal implementation on the other.

Recent US executive actions have shifted the environment toward explicit deregulation and preemption of state-level rules deemed burdensome[5]. They began with the January 2025 revocation of EO 14110 and the issuance of EO 14179, continued with the July 2025 release of “Winning the Race: America’s AI Action Plan,” and were followed by the December 2025 EO asserting federal primacy over many state AI laws. These measures prioritize innovation, infrastructure acceleration, and US global dominance while directing NIST to revise the AI RMF (removing certain references) and emphasizing ideologically neutral AI in federal use. The result is a deliberately light-touch federal posture, combined with continued sectoral oversight in high-stakes domains, procurement expectations, and active federal efforts to limit state fragmentation. NIST remains a widely referenced operational benchmark, especially in procurement and vendor diligence, even as the broader policy direction reduces centralized compliance pressure compared with the EU approach.

High-Level Comparison of Core Frameworks

| Framework | Nature | Scope | Risk Approach | Operational Depth | Enforcement |
|---|---|---|---|---|---|
| EU AI Act | Binding Regulation | EU + extraterritorial | Risk classification (unacceptable, high, limited, minimal) | High (detailed obligations) | Strong (fines, restrictions) |
| NIST AI RMF | Voluntary Framework | US + global adoption | Contextual risk management | High (practical implementation) | Indirect (procurement, expectations) |
| OECD AI Principles | Voluntary Principles | International | Principle-based | Moderate (conceptual) | None (influence-based) |
| ISO/IEC 42001 | Management Standard | Global | Process-driven governance | High (organizational systems) | Certification-based |
| US Executive / Sectoral | Deregulatory federal policy + state preemption push | US (federal primacy asserted + state) | Innovation-first with targeted sectoral risk | Low to moderate (federal light-touch) | Indirect (procurement, litigation, preemption) |

What becomes clear from even a simple comparison is that no single framework covers everything at the same level. The EU AI Act is strongest where legal certainty and enforceability are required. NIST is strongest where operational clarity is needed. OECD is strongest where cross-border conceptual alignment is necessary. ISO is strongest where governance must be embedded into repeatable organizational systems. The US environment, taken as a whole, introduces pressure through multiple channels rather than one central rule.

Obligations Mapping Across Frameworks

| Requirement | EU AI Act | NIST AI RMF | OECD Principles | ISO 42001 |
|---|---|---|---|---|
| Risk Assessment | Mandatory for high-risk systems | Core function (Map/Measure) | Implied under safety & accountability | Embedded in management process |
| Documentation | Detailed technical documentation required | Recommended practice | Conceptual expectation | Formal documentation required |
| Transparency | Specific disclosure obligations | Contextual transparency guidance | Core principle | Policy-driven |
| Human Oversight | Explicit requirement | Governance function | Human-centered principle | Defined roles & controls |
| Bias / Fairness Controls | Required for high-risk systems | Risk mitigation process | Fairness principle | Integrated into controls |
| Monitoring & Review | Post-market monitoring required | Continuous lifecycle management | Ongoing responsibility | Continuous improvement cycle |

Looking at obligations side by side exposes something that often goes unnoticed in high-level discussions. The frameworks are not competing with each other. They are filling different layers of the same problem. The EU AI Act specifies what must be done and when it becomes legally binding. NIST explains how organizations can structure their internal processes to actually do it. OECD provides the underlying rationale that keeps those actions aligned with broader expectations of responsible AI. ISO provides the mechanism for sustaining all of it within an organization over time.

This layered structure is where harmonization becomes realistic. Instead of asking which framework to follow, organizations can begin to assign roles to each one. Legal obligation, operational process, conceptual alignment, and organizational embedding do not need to be solved by the same instrument. When they are treated as interchangeable, governance becomes inconsistent. When they are treated as complementary, structure starts to emerge.

Enforcement and Practical Consequences

The enforcement dimension is where many governance discussions become distorted. There is a tendency to treat binding regulations as serious and voluntary frameworks as secondary. In practice, the distinction is not that simple. The EU AI Act carries direct penalties and market restrictions, which makes it impossible to ignore. But voluntary frameworks often shape procurement requirements, investor expectations, and internal audit standards in ways that produce real consequences even without formal fines. A company that cannot demonstrate alignment with widely recognized frameworks may find itself excluded from contracts or subjected to deeper scrutiny, even if no regulator has issued a penalty.

This is one of the reasons multinational organizations increasingly treat NIST alignment, OECD consistency, and ISO certification as part of their overall risk posture rather than optional add-ons. The absence of enforcement does not mean the absence of consequence. It often means that consequences arrive indirectly, through business relationships, reputation, or internal governance expectations rather than formal legal action.

Once this is understood, the comparison between frameworks stops being an academic exercise. It becomes a practical question of how to design a governance system that can satisfy legal requirements, support operational teams, align with international expectations, and withstand external scrutiny without fragmenting into disconnected parts. That is where a structured mapping approach becomes essential, because without it, even well-intentioned governance programs tend to drift into inconsistency over time.

Choosing the Anchor Framework: Where Harmonization Actually Begins

One of the most overlooked decisions in global AI governance is not how to map frameworks, but where to start. Organizations often assume that harmonization begins with comparison. In practice, it begins with anchoring. Without an anchor framework, mapping exercises tend to remain descriptive rather than operational. Everything starts to look equally important, and every framework competes for priority. The result is not alignment, but indecision structured as analysis.

An anchor framework does not replace others. It defines the primary logic through which governance is organized. This distinction matters because different frameworks operate at different levels. The EU AI Act defines legal obligations. NIST structures operational risk management. OECD establishes conceptual alignment. ISO embeds governance into systems. Treating them as interchangeable creates duplication. Assigning one as the anchor creates direction.

In many multinational environments, the anchor emerges from regulatory pressure. The EU AI Act often plays this role because it introduces binding obligations tied to system classification, documentation, and enforcement. Once those controls are implemented, they create a baseline that other frameworks can align with. NIST can then be used to operationalize those controls. OECD principles can ensure conceptual consistency across regions. ISO can stabilize the system within organizational processes.

In other cases, particularly where organizations are earlier in their governance maturity, the anchor may be operational rather than legal. NIST provides a structure that technical teams can implement quickly, allowing governance to take shape before legal requirements are fully imposed. Over time, EU obligations are layered onto that structure as systems expand into regulated markets. The sequence differs, but the principle remains the same. Harmonization begins when one framework is chosen to organize the others.

This decision is rarely documented explicitly, but it shapes every subsequent step. It determines how risks are classified, how controls are designed, how documentation is structured, and how compliance is demonstrated. Without it, governance remains fragmented even when mapping appears complete. With it, alignment becomes directional rather than reactive.

The Global AI Regulatory Mapping Table: Turning Fragmentation into Structure

Most organizations do not struggle because they are unaware of AI governance requirements. They struggle because those requirements live in different documents, written in different styles, enforced in different ways, and interpreted by different teams. The result is a familiar pattern. Legal teams track regulatory obligations. Engineering teams follow internal development practices. Compliance teams maintain policy documents. Each group believes it is addressing governance. Very few organizations can clearly show how those efforts connect across jurisdictions. That gap is where harmonization either succeeds or quietly fails.

The most reliable way to close that gap is to build a working mapping layer. Not a theoretical comparison, but a structured view of how obligations and expectations align across frameworks. Once that exists, governance stops being a collection of separate interpretations and starts becoming a coordinated system. It becomes easier to identify where one control satisfies multiple frameworks, where additional requirements need to be layered, and where potential conflicts or gaps exist. More importantly, it allows organizations to maintain consistency as frameworks evolve rather than restarting analysis each time something changes.

The table below represents a simplified version of that mapping approach. In practice, organizations expand it based on their systems, risk profiles, and jurisdictions. The objective is not to force uniformity across frameworks. It is to create a clear operational picture of alignment and divergence so that decisions can be made deliberately rather than reactively.

Global AI Regulatory Mapping Table (Core Structure)

| Requirement | EU AI Act | NIST AI RMF | OECD Principles | ISO 42001 | Alignment Level | Notes / Harmonization Approach |
|---|---|---|---|---|---|---|
| Risk Identification | Mandatory classification for high-risk systems | Map function defines context & risk | Implied through safety & accountability | Formal risk processes required | Strong | Use NIST mapping as base, extend to EU classification |
| Technical Documentation | Detailed documentation required | Recommended documentation practices | High-level expectation | Documented system processes required | Strong | Align EU documentation with ISO structure for consistency |
| Transparency | Specific obligations depending on system type | Contextual transparency guidance | Core principle | Policy-driven transparency controls | Partial | Define baseline transparency standard, add EU-specific disclosures |
| Human Oversight | Required for high-risk AI | Governance function includes oversight | Human-centered values principle | Role-based control requirements | Strong | Establish unified oversight policy across jurisdictions |
| Bias & Fairness Controls | Explicit requirement for high-risk systems | Risk mitigation processes | Fairness principle | Integrated control mechanisms | Strong | Use NIST for methodology, EU for enforcement baseline |
| Monitoring & Lifecycle Management | Post-market monitoring required | Continuous lifecycle risk management | Ongoing responsibility | Continuous improvement cycle | Strong | Align lifecycle monitoring across all frameworks |
| Incident Reporting | Mandatory for serious incidents | Recommended risk response processes | Implicit in accountability | Defined escalation processes | Partial | Adopt EU reporting standard globally where feasible |
| Accountability & Governance | Defined roles and responsibilities | Governance function core | Accountability principle | Formal governance structure required | Strong | Use ISO as backbone for governance roles |
| Third-Party Risk | Obligations extend to providers & deployers | Supply chain risk included | Responsible value chain principle | Vendor control processes required | Strong | Integrate supplier governance into core framework |
| Conformity Assessment | Required for high-risk systems | Not defined formally | Not defined | Internal audit & certification | Partial | Use EU conformity as benchmark, ISO audits as support |

What emerges from this mapping is not just comparison, but a pattern. Some requirements align almost perfectly across frameworks, even if they are described differently. Others align conceptually but differ in how strictly they are enforced. A smaller group exists only in certain frameworks, often reflecting specific regulatory priorities. Recognizing these categories allows organizations to build governance layers deliberately instead of duplicating effort or leaving gaps.

In practice, many organizations use a color-coded version of this table internally. Green for strong alignment, yellow for partial overlap, and red for gaps that require additional controls. Over time, the table becomes less of a static reference and more of a working instrument. It is updated as new regulations emerge, as internal systems evolve, and as audit findings reveal weaknesses. The value is not only in the initial mapping. It is in maintaining a live view of how governance obligations shift over time.
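The color coding described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `MappingRow` type, the `COLOR_BY_ALIGNMENT` lookup, and the "Gap" label are assumptions introduced here (the table itself only uses "Strong" and "Partial"), and the sample rows are copied from the mapping table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MappingRow:
    """One row of the mapping table (hypothetical structure)."""
    requirement: str
    alignment: str  # "Strong", "Partial", or "Gap" ("Gap" is an assumed label)


# Assumed lookup: alignment level -> internal traffic-light color.
COLOR_BY_ALIGNMENT = {"Strong": "green", "Partial": "yellow", "Gap": "red"}


def color_for(row: MappingRow) -> str:
    """Return the traffic-light color for a mapping row."""
    return COLOR_BY_ALIGNMENT[row.alignment]


def rows_needing_attention(rows: list[MappingRow]) -> list[str]:
    """List requirements that are not green, in table order."""
    return [r.requirement for r in rows if color_for(r) != "green"]


# Sample rows taken from the mapping table.
ROWS = [
    MappingRow("Risk Identification", "Strong"),
    MappingRow("Transparency", "Partial"),
    MappingRow("Incident Reporting", "Partial"),
    MappingRow("Conformity Assessment", "Partial"),
]

print(rows_needing_attention(ROWS))
```

Keeping the color as a derived value rather than a stored field means that changing a row's alignment level after an audit finding updates the color automatically.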

The most effective implementations also connect this table directly to internal processes. Instead of treating it as a separate document, they link each requirement to specific controls, policies, and system-level practices. A transparency requirement is tied to actual disclosure mechanisms. A monitoring obligation is tied to specific dashboards and review cycles. A documentation requirement is linked to templates and version control systems. This reduces the distance between governance theory and operational reality.
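One way to picture that linkage is a simple traceability check: each requirement in the mapping table points to the concrete controls that implement it, and anything without a linked control is flagged. The sketch below is illustrative only. The `CONTROL_LINKS` contents and the control names are hypothetical examples, not a recommended control set.

```python
# Hypothetical links from mapping-table requirements to concrete internal controls.
CONTROL_LINKS = {
    "Transparency": ["user-facing disclosure notice"],
    "Monitoring & Lifecycle Management": ["model performance dashboard", "quarterly review cycle"],
    "Technical Documentation": ["documentation template", "version-controlled repository"],
    "Incident Reporting": [],  # no control linked yet
}


def unlinked_requirements(links: dict[str, list[str]]) -> list[str]:
    """Return requirements that have no control attached, sorted by name."""
    return sorted(req for req, controls in links.items() if not controls)


print(unlinked_requirements(CONTROL_LINKS))
```

A check like this can run whenever the mapping table changes, so that a new requirement cannot enter the table without an owner and a control behind it.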

There is also a strategic benefit that is easy to overlook. Once an organization can demonstrate a clear mapping between frameworks, it becomes easier to communicate with regulators, partners, and customers. Instead of presenting fragmented evidence, the organization can show how its governance model satisfies multiple expectations simultaneously. This reduces friction in audits, procurement processes, and cross-border deployments. It also signals maturity. Not because the organization claims to follow every framework, but because it can explain how those frameworks have been integrated into a coherent structure.

It is worth noting that no mapping table remains complete for long. The landscape continues to evolve. New guidance appears. Interpretations shift. Sector-specific rules expand. That is not a weakness of the approach. It is the reason the approach is necessary. Without a structured mapping layer, each change forces organizations to restart analysis from the beginning. With it, updates become incremental rather than disruptive.

Once this foundation is in place, the conversation naturally moves from comparison to execution. The question is no longer how frameworks relate to each other. It becomes how organizations can build governance programs that use these relationships to operate efficiently across jurisdictions. That is where harmonization becomes practical rather than conceptual.

Harmonizing Compliance in Practice: Building a Governance Model That Travels

With the mapping layer in place, the work shifts to building a governance model that can operate across frameworks without constant redesign. This is where many organizations slow down. Not because the problem is unclear, but because translating alignment into operational structure requires decisions that affect systems, teams, and internal authority. The organizations that move forward tend to treat harmonization as a design problem rather than a compliance checklist.

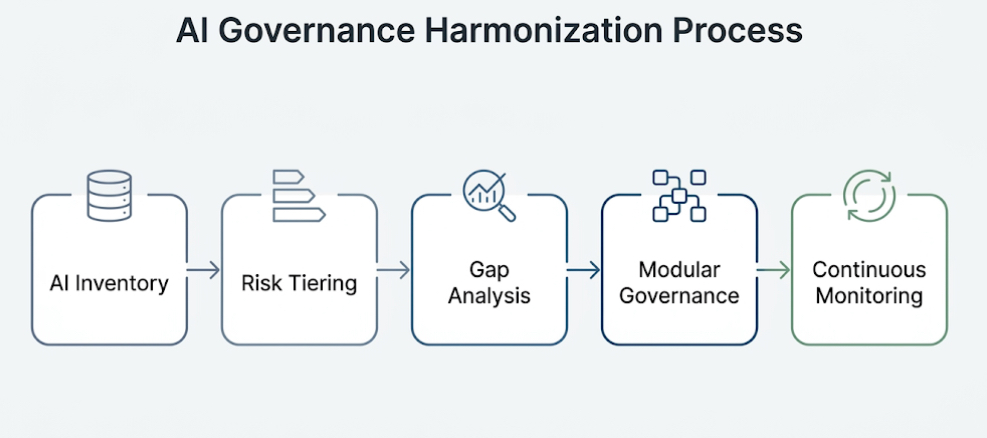

The first step is to understand what actually exists inside the organization. An enterprise-wide inventory of AI systems is not a formality. It is the point where governance stops being abstract. Systems need to be identified, categorized, and tied to business functions. It becomes immediately clear that not all systems carry the same level of risk, and not all operate under the same regulatory exposure. Some systems will fall into categories that trigger strict obligations under the EU AI Act. Others may operate primarily in environments shaped by NIST guidance or sectoral rules. Without this inventory, harmonization efforts tend to overreach in some areas and miss critical exposure in others.

Once systems are visible, risk tiering becomes the next layer of structure. This is where the organization decides how different frameworks will apply to different categories of systems. High-risk systems, especially those with cross-border exposure, often become the anchor point. Controls are built to meet the most demanding requirements, which in many cases means aligning with EU obligations. Lower-risk systems may still follow the same governance structure but with lighter controls. The objective is not to reduce governance where it is inconvenient, but to apply it proportionately so that resources are focused where risk is highest.
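The inventory and tiering steps can be sketched in a few lines of Python. This is a minimal illustration, not a legal classification: the `AISystem` fields, the two screening flags, the example systems, and the tiering rule are all assumptions made for the example, and a real program would derive its tiers from the applicable legal texts and internal policy.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass
class AISystem:
    # All fields are hypothetical inventory attributes chosen for illustration.
    name: str
    business_function: str
    jurisdictions: set[str]          # markets where the system is deployed
    affects_individuals: bool        # assumed screening flag
    safety_or_rights_critical: bool  # assumed screening flag


def assign_tier(system: AISystem) -> Tier:
    """Illustrative tiering rule; not a statement of any legal test."""
    if system.safety_or_rights_critical:
        return Tier.HIGH
    if system.affects_individuals or len(system.jurisdictions) > 1:
        return Tier.MEDIUM
    return Tier.LOW


# Hypothetical inventory entries.
inventory = [
    AISystem("credit-scoring", "lending", {"EU", "US"}, True, True),
    AISystem("support-chat-routing", "customer service", {"US"}, True, False),
    AISystem("log-anomaly-detector", "IT operations", {"US"}, False, False),
]

tiers = {system.name: assign_tier(system) for system in inventory}
for name, tier in tiers.items():
    print(f"{name}: {tier.value}")
```

Keeping the rule in one function means a change in how tiers are defined is made in one place and then re-run across the whole inventory.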

The mapping table then becomes a working tool rather than a reference document. Gap analysis is performed against real systems. Where the EU AI Act requires specific documentation, the organization checks whether existing processes under NIST or internal policies already satisfy those requirements or need to be extended. Where NIST provides detailed operational guidance, it is used to fill in areas where legal texts remain high-level. Where OECD principles define expectations that are not explicitly captured elsewhere, they are translated into internal policy language to ensure conceptual alignment across regions.
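A gap analysis of this kind might look like the following sketch. The `MAPPING_TABLE` contents are placeholder rows invented for illustration, not an authoritative reading of any framework, and `find_gaps` is a hypothetical helper name.

```python
# Placeholder mapping table: requirement -> frameworks assumed to expect it.
# These rows are illustrative only and would come from the organization's
# own reviewed mapping in practice.
MAPPING_TABLE = {
    "technical_documentation": {"EU AI Act", "ISO/IEC 42001"},
    "risk_assessment": {"EU AI Act", "NIST AI RMF", "ISO/IEC 42001"},
    "human_oversight": {"EU AI Act", "OECD AI Principles"},
    "post_deployment_monitoring": {"EU AI Act", "NIST AI RMF"},
}


def find_gaps(evidence: set[str], applicable: set[str]) -> dict[str, set[str]]:
    """Return requirements with no evidence, with the frameworks that expect them."""
    gaps = {}
    for requirement, frameworks in MAPPING_TABLE.items():
        relevant = frameworks & applicable  # only frameworks that apply here
        if relevant and requirement not in evidence:
            gaps[requirement] = relevant
    return gaps


# Hypothetical system: evidence already on file and frameworks in scope.
gaps = find_gaps(
    evidence={"risk_assessment", "technical_documentation"},
    applicable={"EU AI Act", "NIST AI RMF"},
)
for requirement, frameworks in sorted(gaps.items()):
    print(requirement, "->", sorted(frameworks))
```

Because each gap carries the frameworks that expect it, one remediation item can be shown to close exposure under several frameworks at once.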

This process usually reveals something uncomfortable but useful. Many organizations discover that they are already doing parts of what is required, but in inconsistent ways. Documentation exists, but not in a standardized format. Risk assessments are performed, but not systematically across all systems. Monitoring occurs, but without a unified reporting structure. Harmonization does not require starting from zero. It requires bringing these fragments into a coherent structure and making them visible across the organization.

Modular governance is where that structure begins to stabilize. Instead of building separate governance programs for each jurisdiction, organizations define a core set of policies, processes, and controls that apply globally. These core elements are usually aligned with widely accepted principles and operational frameworks, such as OECD concepts and NIST risk management functions. On top of that core, jurisdiction-specific modules are added where necessary. EU-specific obligations for high-risk systems are layered in. Sectoral requirements in the United States are incorporated where relevant. The result is not a single uniform system, but a modular architecture that can adapt without fragmenting.
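One way to picture the core-plus-modules idea is as a small composition function. The control names, the module contents, and `controls_for` below are assumptions made for this sketch rather than requirements taken from any framework.

```python
# Hypothetical global core: controls every AI system receives in every market.
CORE_CONTROLS = [
    "inventory entry",
    "risk assessment",
    "named accountable owner",
    "monitoring plan",
]

# Hypothetical jurisdiction modules, keyed by jurisdiction and then risk tier.
JURISDICTION_MODULES = {
    "EU": {"high": ["technical documentation file", "human oversight procedure"]},
    "US": {"high": ["sector regulator evidence pack"]},
}


def controls_for(jurisdictions: set[str], tier: str) -> list[str]:
    """Start from the global core, then layer on any matching modules."""
    controls = list(CORE_CONTROLS)
    for jurisdiction in sorted(jurisdictions):  # sorted for a stable order
        for control in JURISDICTION_MODULES.get(jurisdiction, {}).get(tier, []):
            if control not in controls:
                controls.append(control)
    return controls


eu_us_high = controls_for({"EU", "US"}, "high")
us_low = controls_for({"US"}, "low")
print(eu_us_high)
print(us_low)
```

A lower-risk system still receives the full core, which reflects the point above: lighter controls, same structure.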

ISO/IEC 42001 plays a useful role at this stage because it provides a structure for embedding governance into the organization. Policies, roles, responsibilities, monitoring, and review processes are formalized within a management system that can be audited and maintained. This reduces the risk that governance remains a set of documents without operational traction. It also creates continuity. As frameworks evolve, updates can be incorporated into an existing system rather than requiring a full redesign.

Cross-functional coordination becomes unavoidable as harmonization progresses. Legal teams interpret obligations. Compliance teams translate them into controls. Technical teams implement those controls within systems. Product teams manage deployment and user interaction. If these groups operate in isolation, governance becomes inconsistent. Organizations that handle this well create shared processes where decisions about risk, mitigation, and documentation are visible across functions. This does not eliminate disagreement. It makes disagreement explicit and manageable.

Continuous monitoring is where harmonization either holds or begins to drift. Frameworks evolve. Systems change. Data shifts. New use cases emerge. A governance model that is not updated regularly will fall out of alignment, even if it was well designed initially. Effective programs build monitoring and review into their structure. This includes periodic reassessment of system risk, updates to the mapping table, internal audits, and feedback loops from incidents or near-misses. The objective is not to maintain perfect alignment at all times. It is to ensure that misalignment is detected early and addressed deliberately.
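Detecting drift early can start with something as simple as flagging overdue reviews. In the sketch below, the review cadences per tier, the record layout, and the function name are all assumptions for illustration. No framework discussed here is being quoted for these intervals.

```python
from datetime import date, timedelta

# Assumed review cadence per risk tier, in days (illustrative values).
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


def overdue_reviews(records: dict[str, tuple[str, date]], today: date) -> list[str]:
    """Return names of systems whose last review is older than their tier allows."""
    overdue = []
    for name, (tier, reviewed_on) in records.items():
        allowed = timedelta(days=REVIEW_INTERVAL_DAYS[tier])
        if today - reviewed_on > allowed:
            overdue.append(name)
    return sorted(overdue)


# Hypothetical review log: system name -> (tier, date of last review).
records = {
    "credit-scoring": ("high", date(2025, 1, 10)),
    "log-anomaly-detector": ("low", date(2025, 1, 10)),
}

flagged = overdue_reviews(records, today=date(2025, 6, 1))
print(flagged)
```

The same check could be run against the mapping table itself, treating each row as an item with its own review date.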

There is also a strategic dimension that becomes clearer over time. Organizations that invest in harmonized governance often find that it reduces friction beyond compliance. Procurement becomes smoother because requirements can be demonstrated consistently. Partnerships become easier because governance expectations are clear. Internal decision-making improves because risk is framed in a structured way. Harmonization, when done well, stops being a defensive exercise and becomes part of how the organization operates.

Harmonization Checklist for Multinational Organizations

- Establish a complete inventory of AI systems across jurisdictions

- Classify systems by risk and regulatory exposure

- Map obligations across EU AI Act, NIST, OECD, and ISO frameworks

- Identify gaps and overlaps using a structured mapping table

- Define a global core governance layer aligned with common principles

- Add jurisdiction-specific modules for legal and sectoral requirements

- Implement a management system to embed governance processes

- Create cross-functional coordination mechanisms for decision-making

- Establish continuous monitoring, audit, and update cycles

- Document all processes to support internal and external review

These steps are rarely followed in a straight line. Organizations move back and forth between them as new information emerges. The important point is that harmonization is treated as an ongoing process rather than a one-time project. The frameworks will continue to evolve. The governance model needs to be able to evolve with them without losing coherence.

At this stage, the technical and structural work is largely in place. What remains are the points where harmonization tends to break down under pressure. Those are not always technical failures. They are often organizational, strategic, or interpretive. Understanding those failure points is as important as understanding the frameworks themselves, because they determine whether a governance model holds in practice.

Where Harmonization Breaks: Real Challenges and What They Reveal

On paper, harmonization looks structured and achievable. Frameworks can be mapped. Controls can be aligned. Policies can be written. But once systems move into production environments and across jurisdictions, friction begins to appear in ways that are not always anticipated. The breakdown rarely comes from a single point of failure. It emerges gradually, often at the intersection of interpretation, operational pressure, and organizational priorities.

One of the most common points of tension is over-compliance. In an effort to reduce regulatory risk, organizations sometimes default to the strictest interpretation of every framework and apply it universally. This can create a governance model that is technically compliant but operationally heavy. Teams begin to experience delays. Product development slows. In some cases, systems that do not require high-risk controls are subjected to them anyway, creating unnecessary complexity. The intent is to stay safe. The outcome can be reduced agility and internal resistance to governance processes.

The opposite problem is less visible but equally significant. Under-compliance often occurs when organizations treat voluntary frameworks as optional rather than foundational. NIST guidance, for example, does not carry the legal force of the EU AI Act, but it shapes expectations around risk management and operational discipline. Ignoring it can leave gaps that only become visible during audits, incidents, or external scrutiny. What appears as flexibility in the short term can become exposure over time.

There is also a structural challenge that becomes more pronounced in multinational environments. Governance responsibilities are often distributed across regions. Different teams interpret the same requirement in slightly different ways. Documentation standards vary. Risk assessments are conducted using different criteria. Without a strong central structure, harmonization begins to fragment again, not because frameworks are incompatible, but because implementation is inconsistent. The mapping layer may exist, but it is not enforced as a shared reference point.

Supply chains introduce another layer of complexity. AI systems rarely operate in isolation. They depend on data providers, model components, third-party services, and external platforms. Each of these elements carries its own governance assumptions and limitations. Ensuring alignment across this network is difficult, particularly when partners operate under different regulatory expectations. Contracts can address part of this, but they do not always translate into operational transparency. This creates a situation where an organization may meet its own governance requirements while inheriting risk from its dependencies.

Interpretation itself becomes a source of divergence. Legal language is often intentionally broad to allow flexibility. Operational frameworks provide guidance rather than strict rules. As a result, different teams may arrive at different conclusions about what constitutes sufficient compliance. One team may interpret a transparency requirement as a need for detailed user-facing disclosures. Another may view it as internal documentation. Both interpretations can be defended, but only one may hold under regulatory review. Harmonization requires not only mapping frameworks, but also aligning interpretations internally.

There is also a tendency to underestimate the impact of time. Governance is often designed at a single point, based on current understanding of frameworks and system behavior. Over time, systems evolve. Data distributions shift. New use cases emerge. Regulations are updated. Without continuous adjustment, a governance model that was once aligned begins to drift. This drift is rarely dramatic. It accumulates quietly until it becomes visible during an audit or an incident. At that point, correction is more difficult because the gap has widened.

Some organizations encounter what can be described as “compliance silos.” Different teams focus on satisfying specific frameworks independently. One team ensures alignment with EU requirements. Another focuses on NIST implementation. Another tracks OECD principles. Each group produces documentation and evidence that satisfies its own objectives. But when viewed together, these efforts do not form a coherent system. There are overlaps, contradictions, and gaps. The organization appears compliant from multiple angles, but lacks a unified governance structure.

Mitigating these challenges requires a shift in how governance is treated internally. Instead of being seen as a set of obligations to be satisfied, it becomes a system that needs to be maintained. Central coordination plays a role, not in controlling every decision, but in ensuring that decisions are consistent with a shared structure. This often involves establishing common definitions, shared templates, and regular review processes that cut across teams and regions.

External validation also becomes more relevant as governance matures. Internal assessments are necessary, but they are shaped by the organization’s own assumptions and constraints. Independent audits, peer reviews, or participation in external initiatives can reveal blind spots that internal processes may not capture. This is not only about demonstrating compliance. It is about testing whether the governance model holds under different perspectives.

Ultimately, the challenges that emerge during harmonization are not signs of failure. They are indicators of where the model needs to be strengthened. Organizations that treat these challenges as feedback tend to build more resilient systems over time. Those that treat them as isolated issues often find themselves addressing the same problems repeatedly in different forms.

Understanding these patterns makes it easier to recognize them early. It also sets the stage for examining how organizations have navigated similar challenges in practice, and what can be learned from those experiences.

The Hidden Cost of Misalignment: Where Governance Quietly Fails

Misalignment in AI governance rarely appears as a single failure. It accumulates gradually across decisions that seem reasonable in isolation. A system is approved under one framework but lacks documentation expected in another. A risk assessment satisfies internal policy but does not align with external audit expectations. A transparency measure is implemented in one region but not replicated elsewhere. Each gap is small, but together they form a pattern that becomes visible only when systems are examined under cross-border scrutiny.

The cost of this misalignment is often underestimated because it does not always present as immediate regulatory exposure. It appears instead as friction. Procurement processes slow down because evidence cannot be standardized. Product launches are delayed while additional reviews are conducted. Engineering teams revisit systems that were previously considered complete. Legal teams spend time reconciling interpretations that were never aligned from the beginning. These costs are rarely captured in governance metrics, but they accumulate across the lifecycle of a system.

There is also a reputational dimension that becomes more significant as AI governance matures. Organizations are increasingly expected to demonstrate not only that they comply with specific requirements, but that their governance approach is coherent. Inconsistent practices across regions or systems raise questions about reliability. Even where no formal violation exists, perceived inconsistency can affect trust among partners, regulators, and customers.

Understanding this cost changes how harmonization is approached. It shifts the focus from avoiding penalties to reducing friction. Governance becomes a way of maintaining consistency across decisions rather than a series of isolated compliance checks. This is where structured mapping, anchored frameworks, and continuous monitoring begin to show their full value. They do not eliminate complexity. They prevent complexity from turning into inefficiency.

Case Patterns: What Actually Works and What Quietly Fails

Across industries, the same pattern starts to show up when organizations attempt to align governance across jurisdictions. It is not always visible in public case studies, but it becomes clear through audit reports, internal reviews, and regulatory interactions. Some organizations build governance structures that hold under pressure. Others appear aligned until the moment their systems are examined more closely. The difference is rarely about resources alone. It is about how alignment was approached from the beginning.

In one case, a multinational financial services firm approached harmonization by treating ISO/IEC 42001 as its structural backbone. Governance roles, documentation processes, and monitoring cycles were defined within that system. Instead of building separate compliance tracks for each jurisdiction, the organization mapped EU AI Act obligations, NIST practices, and OECD principles into that structure. What this created was not a perfect alignment, but a stable one. When EU requirements changed, updates were made within an existing system rather than added as isolated controls. When US expectations evolved, the same structure absorbed them. The organization was not exempt from challenges, but it avoided fragmentation because its governance model was anchored in a consistent framework.

Another example comes from a healthcare organization deploying diagnostic AI systems across multiple regions. Initially, governance was handled locally. Each regional team interpreted requirements independently. Documentation varied. Risk assessments were conducted using different criteria. On the surface, each region appeared compliant within its own context. The problem became visible when the organization attempted to present a unified compliance posture to external stakeholders. Differences in interpretation created inconsistencies that could not be easily reconciled. The solution was not to rewrite everything, but to introduce a centralized mapping layer and standardize key processes. Over time, the organization moved from fragmented compliance to coordinated governance, but only after recognizing that local optimization had created global inconsistency.

There are also cases where harmonization efforts were technically sound but operationally unsustainable. A technology company operating in multiple jurisdictions implemented a governance model that attempted to satisfy the strictest interpretation of every framework simultaneously. Controls were comprehensive. Documentation was extensive. From a regulatory perspective, the system was robust. Internally, however, teams struggled to maintain it. Processes became slow. Decision-making was delayed. Over time, informal workarounds began to appear as teams tried to meet operational demands. The governance model remained intact on paper, but its practical effectiveness declined. The lesson was not that strict compliance is unnecessary, but that governance needs to be proportionate and maintainable.

Failure patterns often share common characteristics. One of the most frequent is the absence of a clear connection between policy and implementation. Organizations define principles and requirements, but those definitions do not translate into system-level controls. Another pattern is reliance on static documentation. Policies are written, approved, and stored, but not revisited as systems evolve. When audits occur, documentation reflects an earlier state rather than current practice. This creates a gap between what is recorded and what is actually happening.

Successful implementations tend to show a different set of characteristics. Governance is treated as part of system design rather than an external layer. Documentation is integrated into workflows rather than maintained separately. Monitoring is continuous, not periodic. Perhaps most importantly, there is clarity about responsibility. Teams understand not only what needs to be done, but who is accountable for ensuring that it is done consistently across jurisdictions.

There is also a shift in how organizations view alignment over time. In early stages, harmonization is often framed as a compliance requirement. As programs mature, it becomes a way of reducing uncertainty. Teams know how to approach new use cases because a structure already exists. Decisions can be made more quickly because governance expectations are clear. In this sense, harmonization moves from being a constraint to being an enabler.

These patterns do not eliminate the need for ongoing effort. Frameworks continue to evolve. New regulatory interpretations emerge. Technologies introduce new forms of risk. What they demonstrate is that alignment is achievable in practice, but only when it is treated as an evolving system rather than a one-time exercise.

Measuring Alignment and Sustaining It Over Time

Once a governance model is established, the next question is how to determine whether it is actually working. Alignment is not something that can be assumed based on initial design. It needs to be observed, measured, and adjusted. This is where many organizations find themselves without clear indicators. They have policies, processes, and documentation, but limited visibility into how effectively those elements function together.

A practical way to approach this is to define a small set of indicators that reflect both compliance and operational effectiveness. Coverage is one of them. Organizations need to understand what proportion of their AI systems fall within the defined governance structure and how many remain outside it. Audit outcomes provide another signal. The frequency of findings, the nature of those findings, and how quickly they are resolved all indicate how well governance is functioning. Over time, patterns begin to emerge that show where alignment is strong and where it needs reinforcement.
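Both indicators are straightforward to compute once the inventory and audit log exist. The following is a minimal sketch in which the record layout, the field name `days_to_resolve`, and the sample numbers are hypothetical.

```python
from statistics import median
from typing import Optional


def coverage(total_systems: int, governed_systems: int) -> float:
    """Share of known AI systems that sit inside the governance structure."""
    if total_systems == 0:
        return 0.0
    return governed_systems / total_systems


def median_days_to_resolve(findings: list[dict]) -> Optional[float]:
    """Median resolution time over closed findings; None if nothing is closed."""
    closed = [f["days_to_resolve"] for f in findings if f["days_to_resolve"] is not None]
    return median(closed) if closed else None


# Hypothetical audit log; None marks a finding that is still open.
findings = [
    {"id": "F-1", "days_to_resolve": 12},
    {"id": "F-2", "days_to_resolve": 30},
    {"id": "F-3", "days_to_resolve": None},
    {"id": "F-4", "days_to_resolve": 45},
]

print(f"coverage: {coverage(40, 34):.0%}")
print(f"median days to resolve: {median_days_to_resolve(findings)}")
```

Tracking the count of open findings alongside the median keeps a slow backlog from hiding behind a good resolution time.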

Time also becomes a measurable factor. The ability to bring new systems into compliance without significant delay is an indicator of how well governance has been integrated into development processes. If compliance introduces consistent delays, it suggests that governance is still operating as an external requirement rather than part of the system lifecycle. Conversely, if systems can be deployed with governance controls already in place, it indicates that alignment has been embedded effectively.
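The delay described here can be measured as the gap between the date a system is ready to ship and the date governance sign-off is recorded. The sketch below assumes both dates are captured per launch. The 14-day threshold, the system names, and the dates are illustrative only.

```python
from datetime import date

# Assumed tolerance before a launch counts as delayed by governance (illustrative).
MAX_LEAD_DAYS = 14


def governance_lead_days(ready_on: date, approved_on: date) -> int:
    """Days between engineering readiness and governance sign-off."""
    return (approved_on - ready_on).days


# Hypothetical launches: system name -> (ready date, sign-off date).
launches = {
    "credit-scoring": (date(2025, 3, 3), date(2025, 4, 14)),
    "support-chat-routing": (date(2025, 3, 10), date(2025, 3, 12)),
}

lead_times = {
    name: governance_lead_days(ready, approved)
    for name, (ready, approved) in launches.items()
}
delayed = sorted(name for name, days in lead_times.items() if days > MAX_LEAD_DAYS)
print(lead_times)
print(delayed)
```

A consistently long lead time for one tier or one region is a hint that governance is still being applied after the fact there.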

Risk reduction is more difficult to measure directly, but it can be observed through indirect signals. Fewer incidents, clearer escalation paths, and more consistent decision-making all point to a governance model that is functioning as intended. These indicators do not eliminate risk, but they suggest that risk is being managed in a structured way rather than addressed reactively.

To sustain alignment, governance needs to be tied to regular review cycles. This includes updating the mapping table as frameworks evolve, reassessing system classifications, and revisiting assumptions about risk. These reviews are not simply administrative tasks. They are opportunities to detect drift and realign before gaps become significant. Organizations that maintain this rhythm tend to avoid the accumulation of hidden inconsistencies.

Over time, governance maturity becomes visible. Early-stage programs focus on establishing structure. Intermediate programs refine processes and improve consistency. Mature programs integrate governance into strategic decision-making. At that stage, alignment is no longer a separate activity. It becomes part of how the organization operates across jurisdictions.

The direction of travel is clear. Global frameworks are not becoming identical, but they are becoming more interconnected. Organizations that invest in alignment now are positioning themselves to adapt more easily as that convergence continues. Those that delay often find themselves reacting to each change after the fact rather than planning for it.

Looking Ahead: Convergence Without Uniformity

There is a tendency to expect that global AI governance will eventually settle into a single unified framework. The reality is more complex. Different jurisdictions will continue to reflect different priorities, legal traditions, and policy objectives. The EU is likely to maintain its rights-based, prescriptive approach. The United States is likely to keep combining voluntary frameworks with sector-specific regulation. International bodies will promote principles that aim to bridge these differences without eliminating them.

At the same time, the connections between these frameworks are becoming stronger. Concepts move between them. Practices are shared. Standards such as ISO/IEC 42001 provide a common structure that organizations can adopt regardless of jurisdiction. Initiatives at the international level continue to explore interoperability and mutual recognition. The result is not uniformity, but a form of convergence that reduces fragmentation without removing diversity.

For organizations, this creates a different kind of challenge. It is no longer enough to follow a single framework. It becomes necessary to understand how frameworks relate to each other and to build systems that can operate within that interconnected environment. This requires a level of strategic thinking that goes beyond compliance. It involves anticipating how governance expectations will evolve and designing structures that can adapt without constant disruption.

The organizations that approach this effectively tend to view governance as part of their long-term operating model rather than a response to specific regulations. They invest in structures that can absorb change, in processes that can be updated without friction, and in teams that understand how different frameworks interact. This does not eliminate complexity. It makes complexity manageable.

Conclusion

Harmonizing global AI standards is not a matter of choosing one framework over another. It is a process of understanding how different frameworks align, where they diverge, and how those relationships can be translated into a coherent governance model. The work is ongoing. Frameworks evolve. Systems change. New risks emerge. What remains constant is the need for structure.

Organizations that build that structure deliberately tend to move with greater confidence. They are better positioned to meet regulatory expectations, to manage risk, and to operate across jurisdictions without constant rework. Those that approach harmonization as a series of isolated tasks often find themselves repeating the same effort in different forms.

Where this is going is already clear. Global AI governance will continue to develop through interaction rather than consolidation. The question is not whether alignment is necessary, but how effectively it is achieved. In practice, that comes down to how well organizations translate frameworks into systems that hold under real conditions.

Download the Full AI Governance Template

For teams moving from theory to implementation, this template provides a structured way to map AI regulatory obligations across major frameworks, including the EU AI Act, NIST AI RMF, OECD AI Principles, and ISO/IEC 42001.

The document includes governance mapping tables, alignment scoring methodology, ownership structures, documentation requirements, and a complete evidence register to support compliance programs.

What’s inside:

- Framework classification and interpretation guide

- Alignment methodology and scoring scale

- Jurisdiction applicability mapping

- Governance roles and ownership structure

- Core regulatory mapping tables

- Evidence register and gap remediation tracker

References

- EU AI Act (2024).

- NIST AI RMF (2023).

- OECD AI Principles (2019).

- ISO/IEC 42001 (2023).

- White House AI Policy (2025).

- Council of Europe AI Convention (2024).

