For years, much of AI compliance has been treated as a release exercise: complete the conformity work, ship, archive the file, move on. That framing breaks down quickly in deployment. AI systems are not static products; they are deployed into environments that shift, attract new user behavior, and accumulate operational edge cases. Over time, those shifts change the risk profile in ways that are difficult to capture in pre-market testing alone.

The EU AI Act makes that lifecycle reality enforceable. Article 72 of Regulation (EU) 2024/1689 requires providers of high-risk AI systems to operate a documented, proportionate post-market monitoring system after the system is placed on the market or put into service. The effect is a shift in compliance posture: the obligation is no longer limited to demonstrating conformity at a point in time; it extends to maintaining control over performance and risk in real operating conditions.

This is not unique to AI. Regulated sectors with safety-critical products have long treated post-market surveillance as the practical test of risk controls. Medical devices can pass pre-market checks and still show unexpected failure modes in clinical use. Aviation embeds incident reporting and continuous improvement because certification does not eliminate operational risk. Financial services learned the same lesson through model risk management: governance has to persist after deployment.

Article 72 matters because material failures often surface after deployment. Bias can emerge when a system meets populations or operating conditions that were thinly represented in training and evaluation. Performance can degrade as inputs shift and operational constraints change. Safety controls can be bypassed or eroded by incentives in the field. In many public controversies around hiring, credit, biometrics, welfare administration, and content moderation, the recurring problem was not the absence of pre-deployment documentation but the absence of credible monitoring once the system was in use.

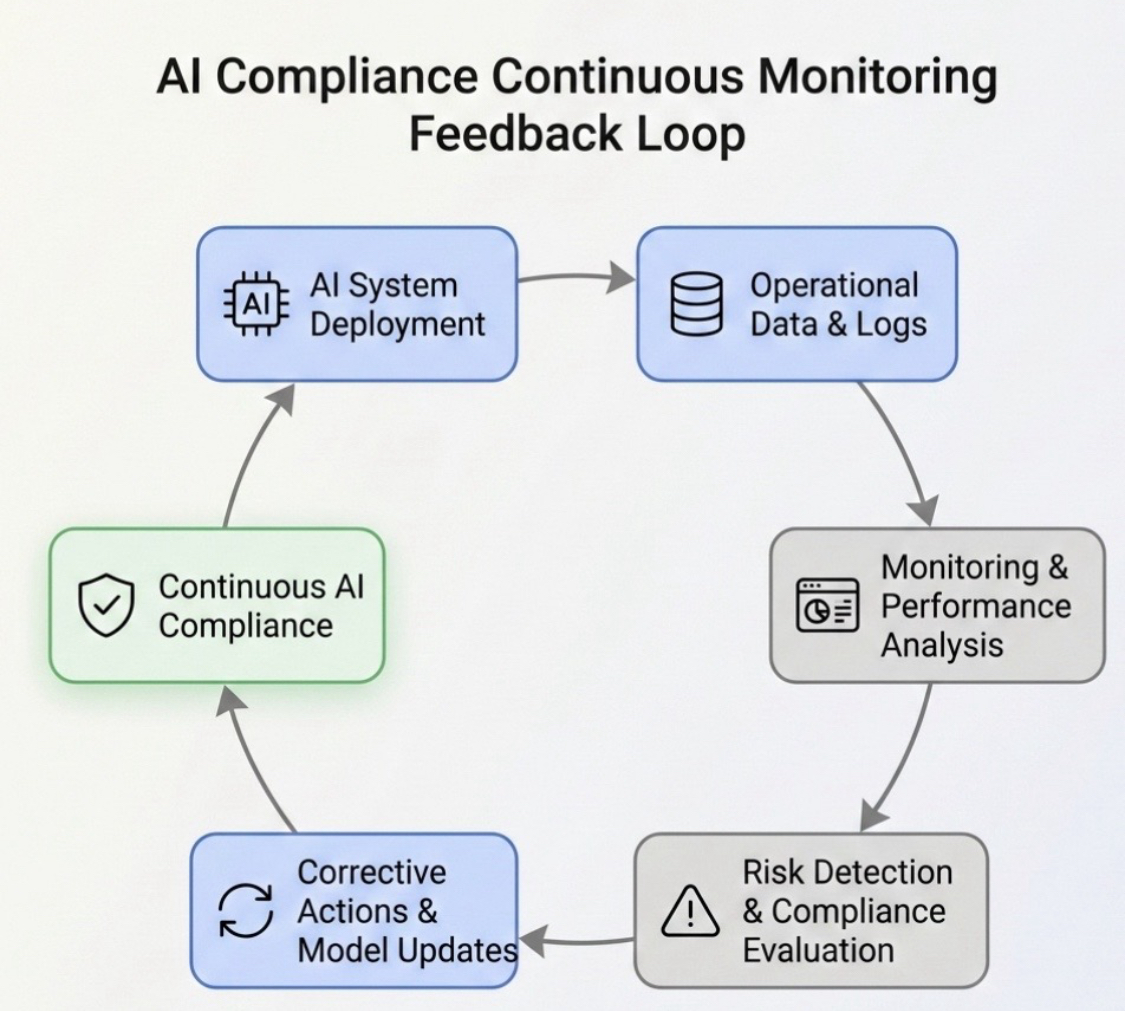

Post-market monitoring under the EU AI Act operationalizes that lesson. It requires a functioning feedback loop: systematic collection of field data (including from deployers), structured analysis, and documented decisions that translate signals into corrective action where needed. The compliance boundary therefore extends into operations, where the system is used, where failure modes appear, and where the evidence base for continued conformity is produced.

From a supervisory standpoint, post-market monitoring is also one of the easiest obligations to verify. Market surveillance reviews are evidence-driven: authorities look for records of monitoring activities, data sources, evaluation outputs, governance decisions, and corrective actions. Article 72 links monitoring directly to the technical documentation via Annex IV, and it sits alongside Article 73 on serious incident reporting, which raises the stakes for detection and escalation discipline.

In practice, delays usually come from unresolved ownership and thresholds: who reviews monitoring outputs, what triggers escalation, what constitutes a reportable incident, and what evidence is retained. Without those decisions, monitoring becomes informal and difficult to defend under external review. Article 72 is designed to force that operational clarity.

The discussion below stays close to Article 72 and the operational reality it implies: data sources that remain reliable under real usage, metrics that detect drift and emerging risk, escalation paths that engineering and compliance teams can actually run, and a monitoring plan that fits Annex IV without reading like a box-ticking exercise.12

The EU AI Act’s structure makes the philosophy clear: the most important compliance work begins after the system meets the world. Chapter IX frames the post-market monitoring and market surveillance regime in one place, which is useful when you’re aligning internal monitoring routines with supervisory expectations.3

The Legal Foundation: What Article 72 Actually Requires

Much of the public conversation about the EU AI Act gravitates toward classifications—what counts as a high-risk system, what falls into prohibited practices, which transparency duties apply to generative models. Those are important questions, but the daily operational burden of the regulation lives elsewhere. It lives in the requirement to monitor systems once they are deployed. Article 72 is where that obligation becomes explicit.

The text of Article 72 may appear concise on first reading, but the obligations it creates reach deeply into how organizations design governance around deployed AI systems. In practical terms, the article introduces a requirement that providers maintain a structured process for collecting, reviewing, and acting on information about system performance once the system is already operating in the real world. The law recognizes that the conditions under which an AI model is trained and evaluated rarely remain stable after deployment. Environments evolve, users adapt behavior, and datasets drift.

Paragraph 1 establishes the core duty: providers of high-risk AI systems must establish and document a post-market monitoring system. The wording emphasizes proportionality. The monitoring framework must reflect both the nature of the AI system and the level of risk it presents. In practice, this means that a model supporting medical triage decisions or biometric identification cannot be monitored with the same minimal oversight one might apply to a low-impact internal tool. The monitoring architecture must be commensurate with the potential harm the system could produce.

The proportionality principle is important because it prevents a purely formalistic approach. A monitoring system cannot exist only on paper. Authorities reviewing compliance will expect evidence that the process genuinely tracks real-world system behavior. Logs, deployer feedback, evaluation results, and corrective actions become the operational evidence that monitoring is actually taking place.

Paragraph 2 of Article 72 introduces a second obligation that is often overlooked when teams first read the regulation. Providers are not simply expected to maintain monitoring procedures; they must actively and systematically collect, document, and analyze relevant data on the performance of their AI systems throughout the system’s lifetime. The language deliberately avoids narrow interpretation. Data may come from deployers, users, logs, or other available sources that reflect how the system behaves under operational conditions.

That requirement is significant because it effectively transforms monitoring from a passive review process into an ongoing data collection exercise. Providers must ensure that operational feedback loops exist. If deployers encounter anomalies, unexpected outputs, or performance degradation, the provider must have a mechanism for capturing that information. Without that feedback channel, the monitoring system would fail the standard implied by Article 72.

The provision also requires providers to use collected information to evaluate whether the system continues to comply with the requirements set out in Chapter III, Section 2 of the Act. Those requirements include risk management, data governance, transparency obligations, human oversight mechanisms, accuracy, robustness, and cybersecurity. Monitoring is therefore not limited to performance metrics; it must also examine whether the system still meets the legal standards that justified its placement on the market.

Paragraph 3 ties the monitoring system directly to technical documentation. The regulation requires that the monitoring framework be based on a documented post-market monitoring plan that forms part of the technical documentation described in Annex IV. This linkage is crucial for compliance audits. Technical documentation is the record authorities examine when verifying conformity. If the monitoring plan is incomplete, inconsistent, or absent from the documentation package, regulators may interpret that as a failure of the provider’s compliance system.

Article 72(3) also requires the European Commission to adopt an implementing act, by 2 February 2026, that establishes a template for the monitoring plan and the list of elements it must include. Although organizations do not need to wait for that template to begin building monitoring capabilities, providers are expected to maintain a clear and structured plan describing how monitoring operates in practice. Such a plan typically includes the objectives of monitoring, data collection methods, analysis procedures, and the roles responsible for interpreting monitoring results.

Article 72 does not exist in isolation within the regulation. It connects directly with other provisions that extend the lifecycle compliance model. Article 73 addresses the reporting of serious incidents and malfunctioning AI systems. If monitoring detects a severe failure or safety issue, providers must report that incident to national authorities within the timelines defined in the regulation. Monitoring therefore acts as the detection layer that feeds the incident reporting framework.

The monitoring regime also interacts with Article 26, which establishes responsibilities for deployers of high-risk AI systems. Deployers must monitor system operation within their own environment and inform providers when issues arise. In effect, Article 26 and Article 72 create a shared oversight structure: deployers observe operational behavior, while providers analyze aggregated information and implement corrective measures when needed.

Seen together, these provisions create a regulatory architecture that mirrors mature safety regimes in other sectors. Monitoring, incident reporting, corrective action, and documentation are all linked. The goal is not only to detect failures but also to ensure that providers maintain a continuous understanding of how their AI systems behave once they leave controlled development environments.

For organizations preparing for the regulation’s enforcement phase, understanding Article 72 as an operational requirement rather than a legal formality is essential. Monitoring is not a single activity but an infrastructure: a system for capturing signals, evaluating them against compliance expectations, and acting when necessary. Providers that design this infrastructure early will find the rest of the lifecycle obligations easier to manage.

Scope: Who Must Implement Post-Market Monitoring

Understanding the scope of post-market monitoring under the EU AI Act requires looking carefully at how the regulation defines responsibility across the AI value chain. Article 72 is directed primarily at providers of high-risk AI systems. In the language of the regulation, the provider is the natural or legal person that develops an AI system or has it developed and places it on the market or puts it into service under its own name or trademark. That definition matters because it assigns lifecycle accountability to the entity that controls the system’s design and distribution.

The obligation attaches to every high-risk AI system as classified under Article 6 of the Act, which covers both safety components of products regulated under the Union harmonisation legislation listed in Annex I and the use cases listed in Annex III. The Annex III use cases include AI systems used in sensitive contexts such as employment decisions, creditworthiness evaluation, law enforcement support, migration management, access to essential services, and biometric identification. In these domains, the potential for harm is not theoretical. Automated decisions can influence livelihoods, access to financial systems, public safety outcomes, and civil rights. For that reason, the regulation requires continuous scrutiny of how these systems behave once they operate outside controlled development environments.

Annex III does not simply list abstract categories; it reflects real areas where algorithmic systems have already generated significant policy debate. Employment screening tools, for instance, have faced criticism when models trained on historical hiring patterns reproduced discrimination embedded in past decisions. Credit scoring models have raised questions about explainability and bias. Biometric identification systems have triggered concerns about surveillance and fundamental rights. These controversies are precisely why regulators insist that monitoring cannot stop after conformity assessments are completed.

While providers carry the primary responsibility, deployers also play a meaningful role in the monitoring ecosystem. Article 26 of the EU AI Act requires deployers to operate high-risk systems according to the instructions provided by the provider and to monitor the system’s functioning during use. Deployers must inform the provider when they identify incidents or malfunctions that could present risks. In practice, this creates a feedback channel from the operational environment back to the system’s developer.

The relationship between provider and deployer becomes particularly important when AI systems are embedded within complex operational infrastructures. Consider an AI model integrated into a banking platform to assist with credit risk assessment. The provider may maintain the model and its underlying training pipeline, but the deployer—the financial institution—observes how the model performs in daily lending decisions. If the system begins producing unexpected outputs or if regulators question particular decisions, the deployer’s observations become essential signals for the provider’s monitoring process.

This shared responsibility does not dilute the provider’s accountability. The regulation expects providers to design monitoring mechanisms capable of receiving, interpreting, and acting upon information generated by deployers. Without such mechanisms, feedback from real-world operations would remain fragmented and compliance would become difficult to demonstrate.

The proportionality principle appears again when considering how extensive monitoring must be. Not every high-risk system requires identical oversight structures. A biometric identification system deployed across multiple jurisdictions may require extensive monitoring dashboards, automated alerts, and formal review committees. A smaller-scale AI application used in a limited operational context may rely on simpler reporting channels and periodic review cycles. What regulators will look for is alignment between the scale of risk and the rigor of the monitoring process.

Organizations sometimes misunderstand proportionality as permission to reduce monitoring obligations. In practice, the opposite is often true. If a system affects fundamental rights, influences financial outcomes, or supports public decision-making, the expectation of rigorous monitoring increases. Regulators will examine whether monitoring practices realistically capture the kinds of risks that could arise from the system’s deployment environment.

Another aspect of scope involves systems that evolve over time. AI models may be retrained, updated, or integrated with new datasets after deployment. When these changes occur, monitoring becomes even more critical. Updates can alter system behavior in subtle ways that are difficult to anticipate during development. Post-market monitoring provides the mechanism through which providers observe how those changes affect performance, reliability, and compliance.

For organizations operating internationally, the scope of Article 72 extends beyond the European Union itself. If a provider places a high-risk AI system on the EU market or puts it into service in the EU, the obligations apply regardless of where the company is established. This extraterritorial dimension mirrors other EU regulatory frameworks such as the General Data Protection Regulation, reinforcing the expectation that global technology providers must align with European governance standards when operating in the EU market.

From a governance perspective, the scope of Article 72 encourages organizations to rethink how they structure accountability for AI systems after deployment. Monitoring responsibilities cannot be confined to engineering teams alone. Compliance specialists, legal advisers, product managers, and operational stakeholders must collaborate to ensure that monitoring signals are interpreted correctly and that corrective measures are implemented when necessary.

In practical terms, organizations that deploy high-risk AI systems often establish cross-functional monitoring structures that combine technical oversight with regulatory awareness. These structures allow monitoring signals—such as unusual performance metrics or deployer complaints—to be evaluated in both technical and legal contexts. The goal is not merely to track metrics but to ensure that the system remains aligned with the regulatory framework that permitted its deployment.

Understanding the scope of Article 72 therefore requires seeing post-market monitoring as a distributed process rather than a single technical task. Providers design the monitoring system, deployers supply operational insights, and authorities verify that the process operates effectively. Together these actors form the feedback loop that allows AI systems to remain compliant throughout their operational lifecycle.

Building the Continuous Feedback Loop in Real Operations

In regulatory language, the phrase “post-market monitoring” can sound procedural. In practice, it describes something more dynamic: a feedback loop that allows providers to see how an AI system behaves once it leaves the laboratory and enters the friction of real environments. Article 72 does not prescribe a specific technical architecture for this loop, but the intent becomes clear when the provision is read alongside the broader compliance framework of the EU AI Act. Monitoring must generate evidence about how the system performs over time and how emerging risks are detected before they escalate into incidents.

Real monitoring begins with data. Providers must decide what operational signals reveal whether a system remains within acceptable performance boundaries. Logs generated by deployed systems often form the first layer of this visibility. These logs capture how models respond to inputs, how frequently certain outputs appear, and whether unusual error conditions occur. When collected systematically, such records allow engineers and compliance teams to observe patterns that might otherwise remain invisible.

Operational telemetry is equally important. Many modern AI deployments already generate telemetry through application infrastructure, model serving platforms, and monitoring tools used in machine learning operations. Latency metrics, error rates, throughput levels, and system health indicators can reveal early signs that the operational environment has changed in ways that affect model behavior. While these signals are often viewed as technical performance indicators, they also provide early warnings relevant to compliance oversight.

User and deployer feedback provides another layer of monitoring intelligence. AI systems rarely operate in isolation; they interact with professionals, administrators, and everyday users who observe their behavior during real tasks. These individuals frequently notice anomalies before automated monitoring systems do. Structured reporting channels—such as incident portals, feedback dashboards, or dedicated reporting procedures—allow providers to capture these observations and integrate them into monitoring analysis.

Article 72(2) and the Role of “Other Sources”

One part of Article 72 that deserves closer attention is paragraph 2’s reference to monitoring information that may arise from “other sources.” Read in context, that wording suggests post-market monitoring should not be limited to internal technical telemetry or provider-controlled reporting channels alone.

For high-risk AI systems, relevant monitoring signals may also emerge from the system’s real-world use environment: user complaints, customer support records, dispute mechanisms, consumer protection bodies, legal proceedings, regulatory inquiries, and independent expert or research findings. These sources can reveal patterns of harm, rights impacts, or operational failures that are not immediately visible through system logs or model performance dashboards.

This matters because the EU AI Act is not concerned only with whether a system continues to function in technical terms. It is also concerned with whether the system continues to operate in a way that protects health, safety, and fundamental rights once deployed. In practice, a credible Article 72 monitoring framework should therefore be capable of receiving and evaluating both internal operational signals and external indicators of harm.

That does not mean every complaint or external allegation automatically becomes evidence of non-compliance. It means providers should design monitoring processes that can capture, assess, and where necessary escalate signals coming from outside the model’s own technical environment. For many organizations, this will be one of the more difficult aspects of turning Article 72 into a functioning governance process.

The value of such external signals is not hypothetical. In several well-known cases involving algorithmic decision systems, investigative research or public complaints exposed performance disparities long before providers recognized the issue. Effective monitoring therefore includes mechanisms for tracking public discourse and independent findings related to deployed AI technologies.

Collecting information alone is not sufficient. The monitoring system must interpret the signals it receives. Many providers rely on performance indicators designed to reveal drift in model behavior. Distribution shifts in input data can gradually degrade model accuracy even when system infrastructure functions normally. Monitoring pipelines therefore track changes in data distributions, model confidence scores, or prediction patterns. When these indicators cross predefined thresholds, they trigger deeper analysis.
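One common way to quantify the input drift described above is the Population Stability Index (PSI), which compares the binned distribution of a live sample against a reference sample. The sketch below is illustrative only: the function names, the bin count, and the 0.1 and 0.25 cut-offs (a widely used rule of thumb, not a figure taken from the Act) are assumptions that a provider would set in its own monitoring plan.

```python
import math
from typing import Sequence


def psi(reference: Sequence[float], live: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index of `live` relative to `reference`."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers into edge bins
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))


def drift_status(value: float, warn: float = 0.1, act: float = 0.25) -> str:
    """Map a PSI value to a hypothetical review tier."""
    if value >= act:
        return "escalate"
    if value >= warn:
        return "investigate"
    return "stable"


# Made-up samples: evaluation-time inputs versus inputs shifted upward in production.
reference_scores = [i / 100 for i in range(100)]
live_scores = [0.5 + i / 200 for i in range(100)]
print(drift_status(psi(reference_scores, live_scores)))
```

Binning on the reference range keeps the comparison anchored to the conditions the system was evaluated under, so live values outside that range accumulate in the edge bins and push the index up.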

Bias detection metrics often form part of this monitoring layer. If a system was evaluated for fairness during development, those fairness indicators should continue to be measured after deployment. Changes in user populations, regional data characteristics, or operational policies can introduce disparities that were not visible during testing. Monitoring metrics allow providers to detect these patterns early and evaluate whether mitigation measures are necessary.
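As a minimal sketch of carrying a fairness indicator from evaluation into production, the example below recomputes per-group selection rates on live decisions and compares the resulting gap with the gap measured before deployment. The metric choice (difference in selection rates), the group labels, the baseline of 0.10, and the tolerance of 0.05 are all assumptions for illustration; the appropriate indicator depends on the system and its intended purpose.

```python
from collections import defaultdict
from typing import Iterable


def selection_rates(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes per group from (group, positive_outcome) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, positive in records:
        totals[group] += 1
        positives[group] += int(positive)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())


def gap_widened(baseline_gap: float, live_gap: float, tolerance: float = 0.05) -> bool:
    """True when the live gap exceeds the pre-deployment gap by more than `tolerance`."""
    return live_gap - baseline_gap > tolerance


# Made-up live decisions for two hypothetical groups.
live_records = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)
live_gap = parity_gap(selection_rates(live_records))
print(gap_widened(baseline_gap=0.10, live_gap=live_gap))
```

Comparing against the documented baseline, rather than against zero, ties the alert to a change since conformity assessment, which is the question post-market monitoring is meant to answer.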

Robustness indicators also matter. Unexpected outputs, model instability, or repeated edge-case failures may indicate that the model is encountering inputs outside its expected operating conditions. In certain sectors—particularly those involving public services or financial systems—such signals can indicate systemic risks that demand immediate attention.

To manage these diverse signals, many organizations deploy monitoring dashboards that consolidate operational metrics, incident reports, and performance indicators into a single environment. These dashboards do not replace analysis, but they allow teams to see emerging patterns quickly. Alerts can be configured to notify engineers or compliance officers when predefined thresholds are exceeded, ensuring that signals are not buried within large volumes of operational data.
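The alerting logic behind such a dashboard can be small. The following sketch assumes a hypothetical set of rules, metric names, thresholds, and recipient roles; none of them come from the Act. It also treats a metric that has stopped arriving as an alert in its own right, since a silent gap in the data is easy to miss.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AlertRule:
    metric: str
    threshold: float
    direction: str   # "above" or "below"
    notify: str      # role that receives the alert


def evaluate(
    rules: list[AlertRule], metrics: dict[str, float]
) -> list[tuple[str, Optional[float], str]]:
    """Return (metric, value, role) for each breached rule or missing metric."""
    alerts = []
    for rule in rules:
        value = metrics.get(rule.metric)
        if value is None:
            # A metric that stops arriving is itself a monitoring gap.
            alerts.append((rule.metric, None, rule.notify))
        elif rule.direction == "above" and value > rule.threshold:
            alerts.append((rule.metric, value, rule.notify))
        elif rule.direction == "below" and value < rule.threshold:
            alerts.append((rule.metric, value, rule.notify))
    return alerts


# Hypothetical rules; real thresholds belong in the documented monitoring plan.
RULES = [
    AlertRule("error_rate", 0.02, "above", "ml-engineering"),
    AlertRule("mean_confidence", 0.70, "below", "ml-engineering"),
    AlertRule("parity_gap", 0.15, "above", "compliance"),
]

snapshot = {"error_rate": 0.01, "mean_confidence": 0.64}
for alert in evaluate(RULES, snapshot):
    print(alert)
```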

Automation often plays a role in these monitoring pipelines. Machine learning operations platforms frequently include tools for detecting concept drift, monitoring prediction confidence, and flagging anomalies. When integrated properly, such tools help ensure that monitoring does not depend entirely on manual review. Instead, automated systems surface potential problems early, allowing teams to investigate before issues escalate.

The final component of a functioning feedback loop is the pathway from signal to action. Monitoring is valuable only if it leads to decisions. When indicators reveal emerging risks, organizations must determine whether the issue requires retraining a model, updating system documentation, adjusting operational procedures, or initiating formal incident reporting under Article 73. These decisions require collaboration across engineering, compliance, and product teams.

In mature monitoring environments, this response process is documented as clearly as the monitoring pipeline itself. Teams define escalation thresholds, assign responsibility for investigations, and maintain records of corrective actions taken in response to monitoring signals. These records eventually form part of the evidence that providers present during compliance audits.
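One way to make that response process auditable is to encode the escalation rules and write every decision to a log. The sketch below is a simplified illustration under stated assumptions: the routing conditions, the action names, and the owner roles are hypothetical, and the "assess Article 73" outcome only flags a case for legal review rather than deciding that an incident is reportable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Action(Enum):
    LOG_ONLY = "log_only"
    INVESTIGATE = "investigate"
    CORRECTIVE_ACTION = "corrective_action"
    ASSESS_ARTICLE_73 = "assess_article_73_reporting"


@dataclass
class Signal:
    source: str
    description: str
    threshold_breached: bool
    may_affect_health_safety_or_rights: bool
    confirmed: bool = False


@dataclass
class DecisionRecord:
    signal: Signal
    action: Action
    owner: str
    decided_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


# Hypothetical owners per action.
OWNERS = {
    Action.LOG_ONLY: "ml-engineering",
    Action.INVESTIGATE: "ml-engineering",
    Action.CORRECTIVE_ACTION: "product",
    Action.ASSESS_ARTICLE_73: "compliance",
}


def decide(signal: Signal) -> Action:
    """Hypothetical routing rules; real criteria belong in the monitoring plan."""
    if signal.confirmed and signal.may_affect_health_safety_or_rights:
        return Action.ASSESS_ARTICLE_73
    if signal.confirmed and signal.threshold_breached:
        return Action.CORRECTIVE_ACTION
    if signal.threshold_breached or signal.may_affect_health_safety_or_rights:
        return Action.INVESTIGATE
    return Action.LOG_ONLY


def record_decision(signal: Signal, log: list[DecisionRecord]) -> DecisionRecord:
    """Route the signal and append the decision to the evidence log."""
    action = decide(signal)
    entry = DecisionRecord(signal=signal, action=action, owner=OWNERS[action])
    log.append(entry)
    return entry


evidence_log: list[DecisionRecord] = []
complaint = Signal("deployer report", "unexpected rejections", False, True)
print(record_decision(complaint, evidence_log).action)
```

Because every routed signal leaves a timestamped entry with a named owner, the log doubles as the record of corrective decisions that a reviewer would ask to see.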

The architecture of the monitoring loop therefore resembles a continuous cycle. Signals emerge from operational environments, monitoring systems collect and analyze them, organizations respond to the findings, and the updated system continues to operate under observation. Over time this cycle produces a growing body of knowledge about how the AI system behaves in the real world. That knowledge becomes one of the most valuable assets an organization possesses when demonstrating responsible AI governance.

The Post-Market Monitoring Plan and Its Place in Technical Documentation

Behind every functioning monitoring system there is a written plan. Article 72 makes this explicit by linking the monitoring obligation to the technical documentation requirements described in Annex IV of the EU AI Act. The regulation expects providers not only to monitor deployed systems but also to document how monitoring will operate. This plan becomes part of the compliance record that authorities may review when verifying whether a provider has established an adequate governance framework.

Technical documentation in the context of the EU AI Act is not merely a descriptive file about how an AI system was built. It is a structured record that allows regulators and auditors to understand the lifecycle of the system—from design and training to deployment and post-deployment oversight. When the monitoring plan is embedded within this documentation, it provides evidence that the provider anticipated the need for continuous supervision rather than treating monitoring as an afterthought.

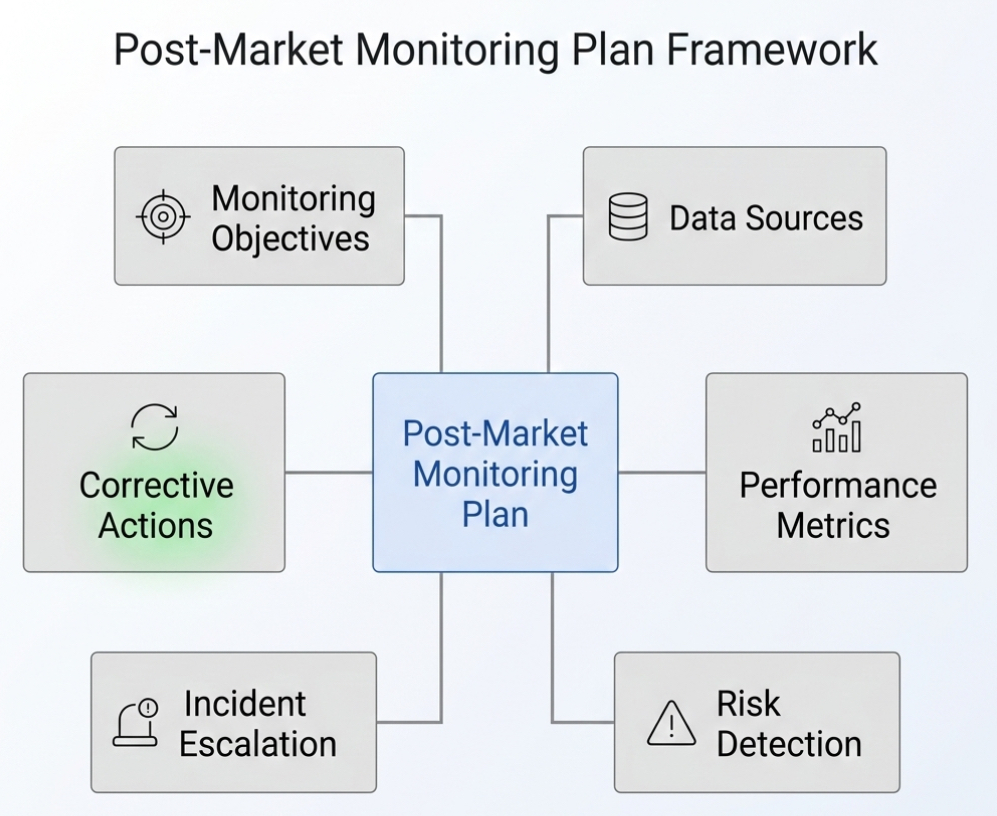

A well-constructed post-market monitoring plan normally begins by defining the objectives of monitoring. These objectives clarify why monitoring exists and what risks the provider intends to observe over time. For example, a monitoring plan may specify that the system must maintain certain accuracy levels across demographic groups, or that it must detect unusual operational conditions that could compromise system reliability. These objectives translate the broader legal requirements of the EU AI Act into operational expectations.

The plan then describes how monitoring data will be collected. Providers typically outline the sources of information they will rely upon, including system logs, telemetry generated by model serving infrastructure, feedback from deployers, and reports submitted by users or administrators. The plan may also reference mechanisms through which deployers communicate operational concerns back to the provider. Because Article 72 emphasizes active and systematic data collection, documentation must show that these channels exist and are maintained.

Another essential element of the monitoring plan involves the analytical procedures used to interpret collected data. Providers should explain how monitoring signals will be evaluated, which metrics are tracked, and how anomalies are identified. Some organizations describe statistical thresholds for performance drift or bias indicators, while others outline review procedures carried out by dedicated monitoring teams. The precise structure varies by system, but the documentation should make clear that monitoring involves structured evaluation rather than ad hoc observation.

Responsibilities within the monitoring process must also be defined. Monitoring rarely belongs to a single department. Engineering teams may operate the technical monitoring infrastructure, compliance officers may evaluate regulatory implications, and product teams may coordinate system updates or retraining cycles. The monitoring plan therefore identifies which roles interpret monitoring results and who is authorized to initiate corrective measures when risks appear.

Review frequency is another common element of monitoring plans. Some signals require continuous automated monitoring, while others may be assessed through periodic review sessions. For example, system performance metrics might be evaluated weekly, whereas broader governance reviews may occur quarterly. What matters from a compliance perspective is that the plan demonstrates a deliberate rhythm of observation rather than sporadic checks.

Corrective actions form the final operational component of most monitoring plans. When monitoring reveals a risk—such as performance degradation, emerging bias, or operational anomalies—the provider must determine how to respond. Possible responses include retraining the model with updated datasets, adjusting system parameters, updating user instructions, or temporarily suspending certain functions. Documenting these response mechanisms ensures that monitoring signals translate into meaningful action.
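The plan elements described above (objectives, data sources, analysis thresholds, responsibilities, review cadence, and corrective actions) can also be kept in a machine-readable form alongside the written document. The structure below is a hypothetical sketch, not the Commission's template; the field names and example values are assumptions chosen to mirror the sections discussed here.

```python
from dataclasses import dataclass, field


@dataclass
class MonitoringPlan:
    system_name: str
    objectives: list[str] = field(default_factory=list)
    data_sources: list[str] = field(default_factory=list)
    metric_thresholds: dict[str, float] = field(default_factory=dict)
    responsibilities: dict[str, str] = field(default_factory=dict)
    review_cadence_days: dict[str, int] = field(default_factory=dict)
    corrective_actions: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Names of sections that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# Illustrative values only; the corrective-actions section is left empty on purpose.
plan = MonitoringPlan(
    system_name="credit-scoring-model",
    objectives=["maintain accuracy across demographic groups"],
    data_sources=["system logs", "serving telemetry", "deployer feedback"],
    metric_thresholds={"psi": 0.25, "parity_gap": 0.15},
    responsibilities={"ml-engineering": "operate pipelines", "compliance": "assess findings"},
    review_cadence_days={"performance_metrics": 7, "governance_review": 90},
)
print(plan.missing_sections())
```

A completeness check of this kind is a convenience for internal review; it does not replace the narrative plan that forms part of the technical documentation.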

Because the monitoring plan becomes part of the technical documentation package, it must remain consistent with other elements described in Annex IV. The documentation should show how monitoring interacts with the risk management system, data governance procedures, and human oversight mechanisms already required under the EU AI Act. In well-organized compliance frameworks, these elements form a connected structure rather than isolated documents.

The European Commission is expected to publish implementing acts that specify elements of the monitoring plan in greater detail. These acts may provide standardized templates or minimum information requirements that providers must follow when preparing monitoring documentation. Organizations do not need to wait for these templates to begin designing monitoring plans, but they should remain attentive to future guidance that may refine the structure expected by regulators.

In practice, organizations often treat the monitoring plan as both a compliance document and an operational handbook. Engineers consult it when implementing monitoring pipelines, compliance officers rely on it when reviewing system behavior, and auditors examine it when assessing whether monitoring obligations are fulfilled. The document therefore serves as a bridge between regulatory expectations and everyday operational practice.

When properly implemented, the monitoring plan becomes more than a static document stored in a compliance archive. It becomes a living framework that evolves alongside the AI system itself. As systems are updated, retrained, or deployed in new contexts, the monitoring plan can be revised to reflect those changes. This adaptability ensures that documentation remains aligned with the system’s real operating conditions.

Risk Mitigation and Incident Handling in Post-Deployment Environments

Monitoring has little value if it does not lead to action. The EU AI Act therefore treats post-market monitoring not only as an observational activity but also as a mechanism for identifying and addressing risks that emerge during the operational life of an AI system. Article 72 establishes the monitoring obligation, but its real significance becomes clearer when it is read alongside Article 73, which governs the reporting of serious incidents and malfunctioning systems.

In operational terms, monitoring functions as the early detection layer within the broader governance framework. Signals collected from system logs, deployer reports, telemetry, and performance indicators may reveal patterns that suggest emerging risks. These signals do not automatically represent compliance violations, but they provide the first indication that the system’s behavior may be shifting beyond expected boundaries. Detecting such shifts early is precisely what monitoring systems are meant to accomplish.

Risk mitigation begins with structured evaluation of these signals. When monitoring systems flag anomalies—such as unusual prediction patterns, sudden performance degradation, or unexpected error conditions—teams responsible for system oversight must investigate the underlying causes. Investigations may involve reviewing input datasets, analyzing system logs, replicating the conditions under which anomalies occurred, or consulting with deployers who observed the behavior in operational settings.

Some risks originate from environmental changes rather than flaws in the original system design. Data distributions evolve as social, economic, or behavioral patterns shift. A model trained on historical information may therefore encounter situations that differ significantly from the data it originally learned from. Monitoring helps identify these changes by revealing when prediction confidence drops, when output distributions drift, or when model decisions begin to diverge from expected patterns.
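One common way to quantify the output drift described above is the Population Stability Index (PSI), which compares the distribution of model scores seen in production against a reference sample from evaluation. The sketch below is illustrative only: it assumes scores lie between 0 and 1, the function name and sample data are hypothetical, and the 0.25 cutoff is a widely used rule of thumb, not a figure taken from the EU AI Act.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples of scores assumed to lie in [0, 1]."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so that 1.0 (and any out-of-range score) lands in an edge bin.
            idx = max(0, min(int(v * bins), bins - 1))
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    ref, cur = bin_shares(reference), bin_shares(live)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical data: evaluation-time scores vs. scores observed in production.
reference_scores = [i / 100 for i in range(100)]
live_scores = [0.5 + i / 200 for i in range(100)]

drift = population_stability_index(reference_scores, live_scores)
needs_review = drift > 0.25  # rule-of-thumb cutoff, not a value from the Act
```

A PSI of zero means the two samples fall into the bins in identical proportions. Whatever cutoff an organization chooses belongs in the monitoring plan, together with the reasoning behind it, so that a flagged value leads to a documented review, not an ad hoc judgment.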

When such risks are confirmed, providers must decide whether corrective action is necessary. Corrective actions vary depending on the nature of the issue. In some cases, retraining the model with updated datasets may restore performance and fairness indicators. In other situations, adjustments to decision thresholds or operational policies may reduce risk exposure. Documentation updates may also be required when monitoring reveals new operational constraints or limitations that deployers should understand.

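The EU AI Act also anticipates scenarios in which monitoring reveals issues serious enough to trigger formal reporting obligations. Article 73 requires providers to report serious incidents to the market surveillance authorities of the Member States where the incident occurred. Under Article 3(49), a serious incident is an incident or malfunctioning of an AI system that directly or indirectly leads to death or serious harm to a person’s health, a serious and irreversible disruption of critical infrastructure, an infringement of obligations under Union law intended to protect fundamental rights, or serious harm to property or the environment. Reports are due immediately after the provider establishes a causal link between the system and the incident, or a reasonable likelihood of one, and in any event within outer time limits that run from the date of awareness and shorten for the gravest cases. Post-market monitoring therefore acts as the mechanism through which providers detect conditions that may require regulatory notification.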
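The outer reporting limits in Article 73 can be encoded so that an incident tracker shows a latest permissible notification date as soon as awareness is logged. The sketch below assumes the limits are counted in calendar days from the awareness date, which should be confirmed with counsel; the enum and function names are hypothetical. The figures reflect Article 73(2) to (4): 15 days in general, 10 days where a person has died, and 2 days for a widespread infringement or a serious and irreversible disruption of critical infrastructure.

```python
from datetime import date, timedelta
from enum import Enum


class IncidentKind(Enum):
    GENERAL = "general"
    DEATH = "death"
    WIDESPREAD_OR_CRITICAL_INFRASTRUCTURE = "widespread_or_critical_infrastructure"


# Outer limits in days after the provider becomes aware of the incident.
# Assumption: calendar days. The Act also expects reports "immediately"
# once a causal link is established, so these are ceilings, not targets.
MAX_DAYS_AFTER_AWARENESS = {
    IncidentKind.GENERAL: 15,
    IncidentKind.DEATH: 10,
    IncidentKind.WIDESPREAD_OR_CRITICAL_INFRASTRUCTURE: 2,
}


def latest_report_date(aware_on: date, kind: IncidentKind) -> date:
    """Return the last date on which a report would still meet the outer limit."""
    return aware_on + timedelta(days=MAX_DAYS_AFTER_AWARENESS[kind])


# Hypothetical example: awareness logged on 1 March 2027.
deadline = latest_report_date(date(2027, 3, 1), IncidentKind.GENERAL)
```

Treating these dates as ceilings matters in practice: an escalation procedure that aims for the last permissible day leaves no margin for the investigation that has to precede the report.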

Serious incident reporting is not intended to punish organizations that monitor their systems responsibly. On the contrary, regulators expect that well-governed AI systems will occasionally reveal unexpected behaviors through monitoring. What authorities examine is whether providers detect issues promptly, investigate them thoroughly, and report them transparently when required. A robust monitoring system demonstrates that the provider is capable of managing the risks associated with deploying advanced technologies.

For this reason, organizations often design escalation procedures within their monitoring frameworks. These procedures define how potential incidents are reviewed and who participates in the decision-making process. Engineering teams may analyze technical signals, compliance officers evaluate regulatory implications, and senior governance bodies determine whether formal reporting obligations apply. This cross-functional approach ensures that decisions are informed by both technical evidence and regulatory context.
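An escalation procedure of this kind can be expressed as a small routing rule, which makes it easier to test and to show to an auditor. The sketch below is one possible arrangement, not a structure prescribed by the Act: the severity levels, team names, and the `reviewers_for` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    INFO = 1
    ANOMALY = 2
    POTENTIAL_SERIOUS_INCIDENT = 3


@dataclass(frozen=True)
class Signal:
    source: str          # e.g. "telemetry" or "deployer_report"
    description: str
    severity: Severity


def reviewers_for(signal: Signal) -> list[str]:
    """Return the teams that must review a signal, in order of involvement."""
    reviewers = ["engineering"]  # every signal gets a technical look
    if signal.severity >= Severity.ANOMALY:
        reviewers.append("compliance")  # regulatory implications assessed
    if signal.severity >= Severity.POTENTIAL_SERIOUS_INCIDENT:
        reviewers.append("governance_board")  # decides on formal reporting
    return reviewers


example = Signal(
    source="deployer_report",
    description="Unexpected rejection pattern for one applicant group",
    severity=Severity.POTENTIAL_SERIOUS_INCIDENT,
)
route = reviewers_for(example)
```

Keeping the rule cumulative, so that higher severities add reviewers without replacing earlier ones, mirrors the cross-functional intent: the governance body decides with the technical and compliance analysis already in hand.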

Maintaining detailed records of these investigations is essential. Documentation should capture the monitoring signals that triggered the investigation, the analysis performed by responsible teams, and the corrective measures implemented in response. Such records form part of the compliance evidence that authorities may examine during audits or investigations.
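A structured record makes those three elements (triggering signals, analysis, and response) hard to omit. The following is a minimal sketch under stated assumptions: the field names are hypothetical, and the JSON line format is one convenient choice for an append-only evidence log, not a format required by the regulation.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class InvestigationRecord:
    system_id: str
    triggering_signals: list[str]
    analysis_summary: str
    corrective_measures: list[str] = field(default_factory=list)
    # Rationale to record when the investigation concludes no action is needed.
    no_action_rationale: str = ""
    opened_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_complete(self) -> bool:
        """True when signals, analysis, and an outcome are all documented."""
        has_outcome = bool(self.corrective_measures) or bool(
            self.no_action_rationale.strip()
        )
        return bool(
            self.triggering_signals and self.analysis_summary.strip() and has_outcome
        )

    def to_json_line(self) -> str:
        """Serialize to one JSON line suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


record = InvestigationRecord(
    system_id="credit-scoring-v3",  # hypothetical identifier
    triggering_signals=["PSI above review threshold on weekly score sample"],
    analysis_summary="Shift traced to a new applicant channel absent from training data.",
    corrective_measures=["Retrain with recent data", "Update instructions for use"],
)
line = record.to_json_line()
```

Requiring either a corrective measure or an explicit rationale for taking no action keeps closed investigations distinguishable from abandoned ones.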

Risk mitigation also involves preventive learning. When monitoring reveals vulnerabilities or weaknesses, organizations often incorporate those insights into future system development. Updated training datasets, improved evaluation protocols, and strengthened governance procedures may emerge from lessons learned during post-deployment monitoring. Over time, this feedback process strengthens both the individual system and the organization’s overall approach to responsible AI deployment.

The combination of monitoring, investigation, corrective action, and reporting creates a continuous safety cycle. AI systems do not remain static once they are deployed. They interact with new environments, new data, and new users. Post-market monitoring provides the institutional memory that allows providers to understand these interactions and respond appropriately when risks emerge.

Seen in this light, risk mitigation under the EU AI Act is not merely a compliance requirement. It is a recognition that complex systems require ongoing stewardship. Monitoring ensures that providers remain aware of how their systems behave in the field and that they possess the mechanisms necessary to intervene when those systems begin to operate outside acceptable boundaries.

Common Pitfalls and Practical Lessons from Early Monitoring Efforts

Organizations that begin implementing post-market monitoring often discover that the challenge is not technical complexity alone but institutional discipline. Monitoring requires coordination across engineering, compliance, and operational teams. Without that coordination, monitoring signals may exist but fail to influence decision-making. Several recurring pitfalls have already appeared in early governance efforts around AI systems.

One frequent mistake is treating monitoring as a purely technical exercise. Engineering teams may implement sophisticated logging or drift detection systems, yet the signals generated by those tools remain isolated from regulatory oversight processes. When monitoring data is not shared with compliance or governance teams, organizations lose the ability to interpret technical findings in a regulatory context.

Another pitfall involves insufficient data quality. Monitoring systems depend heavily on the reliability of the information they collect. If logs are incomplete, feedback channels poorly structured, or deployer reports inconsistent, the resulting monitoring analysis may produce misleading conclusions. Effective monitoring therefore requires deliberate attention to data governance, ensuring that operational signals are captured consistently and stored in ways that support meaningful analysis.
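A simple completeness check on incoming log entries can surface this problem before it distorts the analysis. The sketch below assumes log entries arrive as dictionaries; the required field names are hypothetical and would come from the provider's own logging specification.

```python
from typing import Iterable, Mapping, Sequence

# Hypothetical minimum fields for a usable monitoring log entry.
REQUIRED_FIELDS = ("timestamp", "model_version", "input_id", "output")


def completeness(
    entries: Iterable[Mapping[str, object]],
    required: Sequence[str] = REQUIRED_FIELDS,
) -> float:
    """Share of entries in which every required field is present and non-empty."""
    entries = list(entries)
    if not entries:
        return 0.0
    complete = sum(
        1
        for entry in entries
        if all(entry.get(name) not in (None, "") for name in required)
    )
    return complete / len(entries)


sample_logs = [
    {"timestamp": "2027-03-01T10:00:00Z", "model_version": "3.1", "input_id": "a1", "output": 0.82},
    {"timestamp": "2027-03-01T10:00:05Z", "model_version": "", "input_id": "a2", "output": 0.41},
]
ratio = completeness(sample_logs)
```

Tracking this ratio over time, per deployer or per data source, gives an early indication of which channels are producing signals that cannot be relied on.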

Fragmented organizational structures can also undermine monitoring effectiveness. In many technology organizations, responsibility for AI systems is distributed across multiple departments. Product teams oversee deployment, engineering teams maintain infrastructure, and compliance officers review regulatory obligations. When these groups operate independently, monitoring insights may fail to reach the individuals responsible for corrective actions. Successful monitoring frameworks typically include cross-functional review processes that bring these perspectives together.

Another challenge arises when organizations underestimate the scale of monitoring required for high-risk systems. Early monitoring initiatives sometimes focus on a narrow set of performance indicators while ignoring broader governance concerns. Yet Article 72(2) ties monitoring to continuous compliance with the requirements in Chapter III, Section 2 of the Act, which cover risk management, data governance (including examination for possible biases), record-keeping, transparency toward deployers, human oversight, and accuracy, robustness, and cybersecurity. Effective monitoring frameworks therefore combine technical metrics with governance-oriented evaluations.

Lessons can be drawn from other regulated industries where post-market surveillance has long been standard practice. In the medical device sector, manufacturers maintain continuous surveillance programs that track device performance after clinical deployment. These programs rely on structured reporting from hospitals, systematic data analysis, and rapid corrective actions when safety concerns arise. Similar approaches have evolved in aviation safety and pharmaceutical regulation. These industries demonstrate that monitoring becomes most effective when it is integrated into everyday operational culture rather than treated as an external regulatory burden.

Preparing for the Next Phase of EU AI Act Enforcement

The EU AI Act establishes a phased timeline for implementation, with major obligations related to high-risk systems expected to become operational during the coming years. For organizations developing or deploying AI systems in the European market, preparation for these obligations cannot wait until enforcement deadlines arrive. Building monitoring infrastructure takes time, and organizations that begin the process early will be better positioned to demonstrate compliance when authorities begin conducting systematic oversight.

Preparation often begins with internal assessments of existing monitoring capabilities. Many organizations already operate machine learning monitoring systems designed to track performance metrics or system stability. The challenge lies in aligning those technical capabilities with the regulatory expectations described in Article 72. Governance frameworks must ensure that monitoring data is evaluated not only for engineering purposes but also for compliance implications.

Organizations may also benefit from pilot monitoring programs that simulate the processes expected under the EU AI Act. Such pilots allow teams to test data collection mechanisms, reporting channels, and escalation procedures before regulatory scrutiny intensifies. These exercises often reveal gaps that would otherwise remain hidden until an incident forces immediate attention.

Another preparation step involves strengthening collaboration with deployers. Because deployers observe system behavior during everyday operations, their insights are essential to the monitoring loop envisioned by the regulation. Providers should therefore establish clear communication channels that allow deployers to report anomalies, concerns, or operational difficulties quickly. These channels ensure that monitoring reflects real-world conditions rather than relying solely on internal technical signals.

As guidance from the European Commission evolves, organizations should also remain attentive to implementing acts or additional documentation clarifying monitoring expectations. Regulatory frameworks of this scale typically develop through a combination of legislation, guidance documents, and supervisory practice. Staying informed about these developments allows organizations to refine monitoring plans as the regulatory environment matures.

Conclusion: Monitoring as the Backbone of Responsible AI Deployment

The EU AI Act introduces many obligations, but few capture the spirit of the regulation as clearly as post-market monitoring. By requiring providers to observe how their systems behave after deployment, Article 72 acknowledges a fundamental reality of modern artificial intelligence: real-world environments are unpredictable, and responsible governance requires continuous awareness of system behavior.

Monitoring transforms compliance from a one-time certification exercise into an ongoing practice of observation, learning, and improvement. Through systematic data collection, structured analysis, and documented corrective actions, providers maintain visibility into the systems they place on the market. This visibility allows organizations to detect emerging risks, respond to unexpected conditions, and demonstrate accountability to regulators and the public.

Over time, organizations that embrace monitoring as a core operational discipline often discover that it offers benefits beyond regulatory compliance. Continuous observation of deployed systems produces insights that improve model performance, strengthen governance frameworks, and build trust with stakeholders who depend on reliable AI technologies.

The EU AI Act ultimately seeks to ensure that artificial intelligence develops in ways that respect safety, fairness, and fundamental rights. Post-market monitoring provides the mechanism through which those principles remain visible long after a system leaves the development environment. In that sense, monitoring is not simply a regulatory requirement; it is the practical foundation of trustworthy AI.

Frequently Asked Questions About EU AI Act Post-Market Monitoring (FAQ)

What is “post-market monitoring” under Article 72 of the EU AI Act?

It is the provider’s documented, ongoing system for collecting and analyzing real-world information about how a high-risk AI system performs after it is placed on the market or put into service. Article 72 treats monitoring as continuous lifecycle oversight—evidence that the system remains compliant in practice, not only at the point of release.

Who is responsible for post-market monitoring—the provider or the deployer?

The primary obligation in Article 72 sits with the provider of the high-risk AI system. Deployers still matter: Article 26(5) requires deployers to monitor the operation of the system on the basis of the instructions for use and, where relevant, to inform the provider in accordance with Article 72. In audits, authorities can be expected to look for a functioning feedback channel between deployers and providers, with clear roles and documented follow-through.

What does a Post-Market Monitoring Plan need to contain?

It should spell out objectives, data sources, collection methods, metrics and thresholds, review cadence, responsibilities, escalation routes, and the corrective-action toolbox. Article 72 links the plan to the technical documentation package in Annex IV, so it should read like an operational document that can be evidenced—logs, reports, review records, decisions—not a theoretical statement.

What kind of data should be collected for EU AI Act post-market monitoring?

Providers typically rely on a mix: system logs, telemetry, error rates, distribution shift indicators, outcome quality measures, user and deployer complaints, customer support escalations, and traceable records of overrides or human-in-the-loop decisions where relevant. Depending on the system and deployment context, monitoring may also need to consider external sources such as dispute mechanisms, regulatory inquiries, legal cases, and independent findings that reveal risks or harms not immediately visible through technical monitoring alone.

How does Article 72 connect to Article 73 serious incident reporting?

Monitoring is the detection layer; incident reporting is the regulatory response layer. If monitoring reveals a serious incident as defined in Article 3(49), Article 73 requires notification to the relevant market surveillance authorities: in general no later than 15 days after the provider becomes aware of it, no later than 10 days where a person has died, and no later than 2 days for a widespread infringement or a serious and irreversible disruption of critical infrastructure. Weak monitoring increases the chance that incidents are detected late, or not detected at all, which becomes a compliance problem by itself.

What evidence do regulators typically expect to see during an audit?

A credible monitoring plan inside the Annex IV documentation, plus operational proof: monitoring dashboards or reports, logged alerts and investigations, documented decisions, corrective and preventive actions, communications with deployers, and records showing that monitoring findings led to changes when warranted. Authorities usually focus on whether the loop works end-to-end, not whether the language in the plan is polished.

References

- EU Artificial Intelligence Act (AI Act Explorer). (n.d.). Article 72: Post-market monitoring by providers and post-market monitoring plan for high-risk AI systems. https://artificialintelligenceact.eu/article/72/

- European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). EUR-Lex. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- EU Artificial Intelligence Act (AI Act Explorer). (n.d.). Chapter IX: Post-market monitoring, information sharing and market surveillance. https://artificialintelligenceact.eu/chapter/9/
