There was a time when AI governance sounded like something only banks, hospitals, public agencies, and heavily regulated technology companies needed to worry about. That time has passed. In 2026, even a modest business with a sales team, a customer support desk, a hiring process, a marketing department, or an internal knowledge base is likely using AI somewhere. Sometimes management knows about it. Sometimes it does not, because visibility across teams is incomplete.[6]

That second category is where the trouble usually begins.

An employee pastes confidential customer data into a public AI tool to rewrite a support response. A procurement team signs up for a promising AI vendor without checking where data is processed. A developer connects a large language model to internal documents without testing retrieval controls. A manager uses an AI screening tool in hiring because it saves time, but nobody checks whether the tool creates unfair outcomes. A department builds a workflow agent that can send emails, update records, trigger approvals, or call APIs, yet no one has agreed who is accountable when the system acts incorrectly.

None of these examples requires a dramatic science-fiction failure. They are ordinary operational mistakes. That is why they are dangerous.

The AI governance conversation has changed. The serious question is no longer whether an organization supports responsible AI in principle. Most organizations already say they do. The harder question is whether they have the working documents, review gates, ownership structures, evidence trails, and monitoring habits to prove it when something goes wrong.

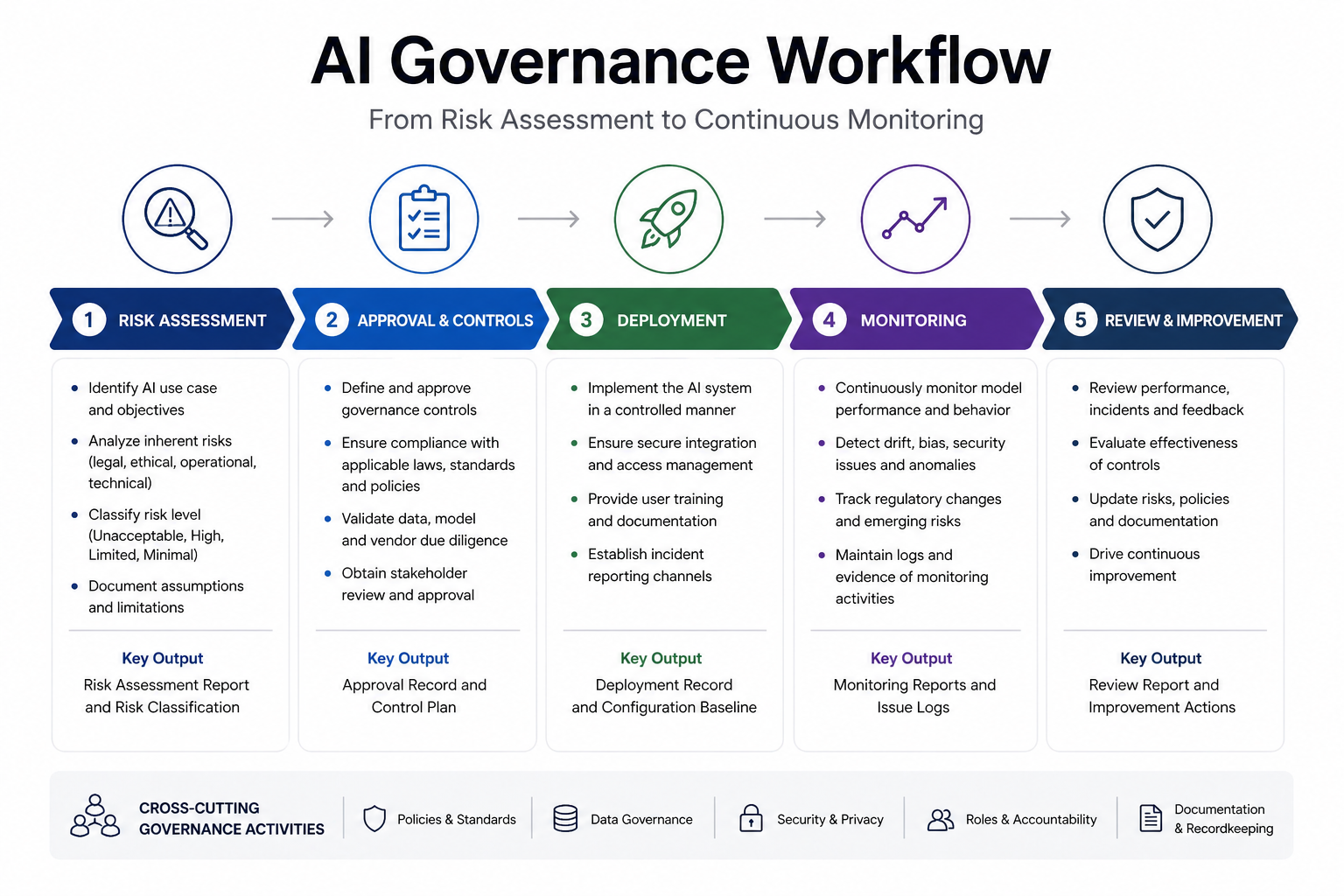

That is where a practical AI governance toolkit becomes useful. Not as a decorative policy folder. Not as a compliance slogan. A useful toolkit gives people a way to make decisions before a tool is deployed, before a vendor is approved, before sensitive data is exposed, before a model is trusted in a high-impact workflow, and before an incident becomes a board-level problem.

The strongest AI governance programs I have seen do not begin with perfect automation. They begin with simple templates that force the right questions early. Who owns this system? What data does it touch? What risk level does it fall into? What evidence shows it was tested? What restrictions apply? What is missing from the vendor side? What happens if it fails? Who reviews it after deployment?

Those questions look straightforward until teams try to answer them the same way across departments. That is when the absence of templates becomes visible.

This article is built around ten essential AI governance templates every organization should have in 2026. They are not theoretical documents. They are the operating base for an AI governance program that can survive real procurement pressure, regulatory review, internal resistance, vendor claims, and fast-moving AI adoption.

The ten templates are:

- AI Governance Charter / Committee Charter

- AI Acceptable Use Policy

- AI Risk Assessment and Classification Template

- AI Vendor Due Diligence and Third-Party Risk Checklist

- Data Governance and Classification Rules for AI

- Model Inventory and Documentation Template

- Bias and Fairness Assessment Tool

- AI Incident Response and Reporting Workflow

- AI Approval and Deployment Workflow

- Monitoring, Auditing and Continuous Improvement Template

Together, these templates create a working foundation for organizations that want to move from scattered AI use to governed AI use. They also support alignment with major references shaping the field, including the NIST AI Risk Management Framework[1], the EU AI Act[2], ISO/IEC 42001[3], and emerging AI security and risk guidance such as the OWASP Top 10 for Large Language Model Applications[5].

If your organization already has an AI policy, this toolkit helps test whether the policy has operational teeth. If your organization is just starting, the templates give you a realistic starting point without pretending every team has a large legal department, a mature model risk office, or an enterprise governance platform already in place.

Why AI Governance Feels Different in 2026

AI governance in 2026 feels different because AI is no longer sitting on the side. Not long ago, most governance discussions focused on outputs — whether a chatbot gave the wrong answer, whether a model produced biased language, or whether a summary missed something important. Those concerns still matter, but they are no longer enough.

Modern AI systems are increasingly connected to documents, databases, workflows, browsers, software tools, code repositories, calendars, procurement systems, HR platforms, and customer records. This level of integration introduces risks such as prompt injection, unintended data exposure, and insecure system interactions, which are now widely documented in security guidance like the OWASP Top 10 for Large Language Model Applications.[5]

Once AI touches workflow, governance becomes an operating issue.

A weak AI answer can be corrected. A weak AI workflow can create a trail of bad actions before anyone notices. A poorly governed chatbot may embarrass a company. A poorly governed agentic workflow can expose data, approve the wrong action, trigger a contractual problem, or make a decision no one can explain later.

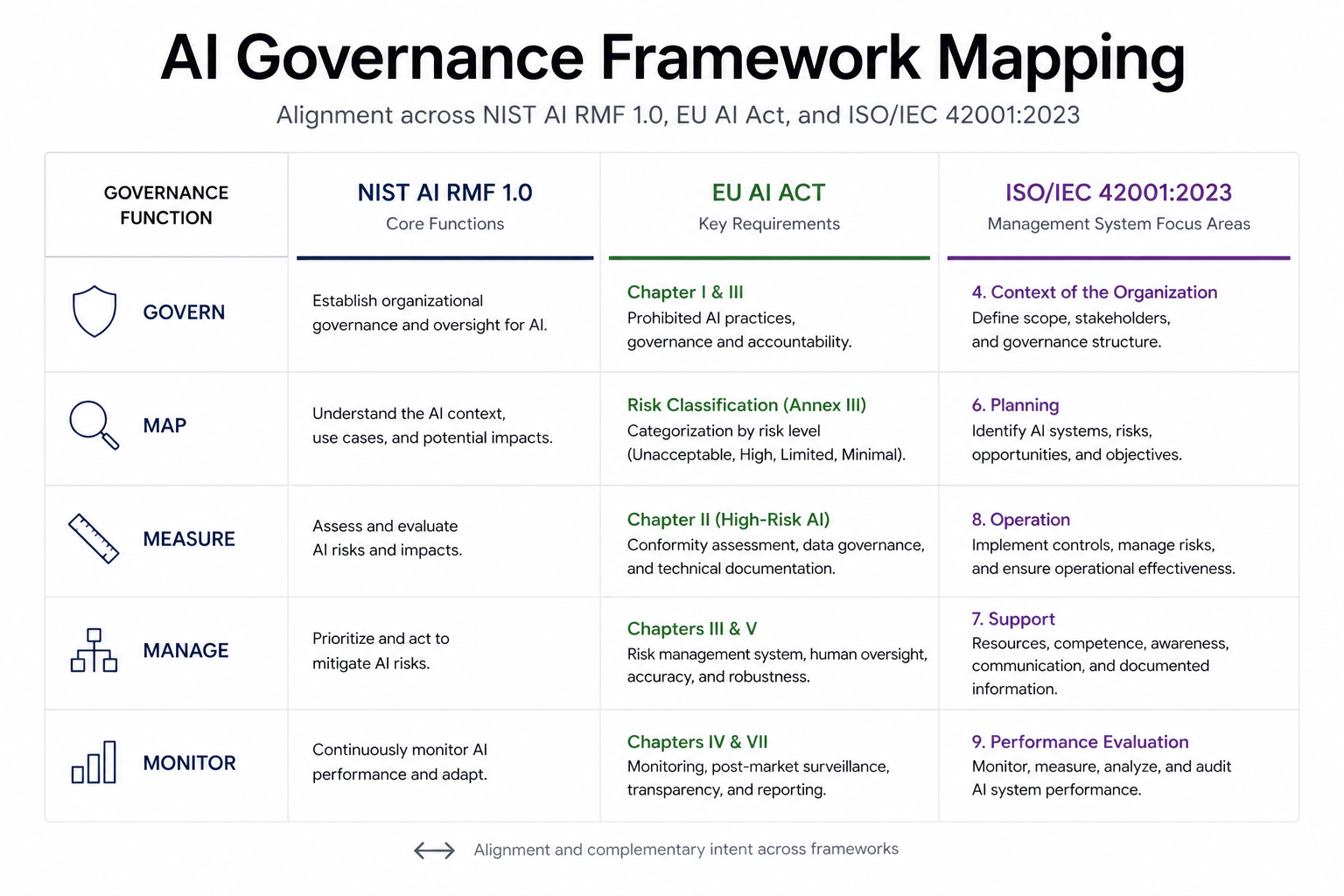

This is why organizations are paying more attention to records, accountability, risk classification, impact assessment, monitoring, and human oversight. The EU AI Act’s risk-based structure has pushed many organizations to think more formally about prohibited, high-risk, limited-risk, and lower-risk AI use cases. NIST’s AI Risk Management Framework has influenced how teams organize governance around govern, map, measure, and manage functions. ISO/IEC 42001 has added another important layer by treating AI governance as a management system, not merely a one-time policy exercise.

The practical implication is simple: organizations need documents that help people act consistently. Not thirty-page policies nobody reads. Not broad ethics statements that say the right things but decide nothing. They need templates that sit inside procurement, product development, data governance, cybersecurity, legal review, privacy review, audit, and incident response.

For many organizations, the first sign of AI governance maturity is not a public statement about responsible AI. It is a well-maintained AI inventory.

The second sign is a clear acceptable use policy.

The third is a risk review process that people actually use before adoption, not after the tool has already spread across the business.

That is why templates matter. They turn governance from intention into repeatable work.

The Problem With Most AI Governance Documents

Many AI governance documents look impressive but fail in practice. They contain the right language: fairness, transparency, accountability, privacy, security, human oversight, robustness. The problem is not that those principles are wrong. The problem is that principles alone do not tell a sales manager whether they can upload a customer spreadsheet into an AI assistant. They do not tell procurement what to ask an AI vendor. They do not tell engineering when a model needs bias testing. They do not tell legal who signs off on a high-risk use case. They do not tell leadership how many AI systems are actually in use.

In real organizations, governance usually breaks down in the space between what is written and what people actually do.

That space is where templates are useful. A risk assessment template forces teams to describe the use case. A vendor checklist forces procurement to ask about training data, subprocessors, audit rights, security controls, and incident notification. A model inventory creates one place where AI systems can be tracked. An incident workflow prevents panic when an AI system produces harmful, inaccurate, discriminatory, or unauthorized output. A monitoring template gives audit and compliance teams something more concrete than promises.

The mistake many organizations make is trying to begin with a perfect governance architecture. They wait for a full platform, a complete policy suite, a cross-functional committee, external counsel, and executive alignment before doing anything useful. By the time the program is “ready,” employees have already adopted tools, vendors have already entered the stack, and sensitive workflows may already depend on systems nobody reviewed.

A better approach is to start with a minimum viable governance system. Not weak governance. Practical governance. The kind that can be implemented quickly, improved over time, and connected to existing business processes.

That is the role of the AI Governance Toolkit 2026.

What Makes a Useful AI Governance Toolkit

In practice, a toolkit becomes useful in a few very specific ways.

First, it creates ownership. AI governance becomes weak when everyone supports it but nobody owns it. A charter defines who has authority, who reviews sensitive use cases, who approves exceptions, who maintains the inventory, who escalates incidents, and who reports risk to leadership.

Second, it creates decision points. Organizations do not need every AI use case to go through the same heavy review. That would frustrate teams and encourage them to bypass the process. A good toolkit separates low-risk productivity use from high-impact, sensitive, regulated, or externally facing AI systems. The templates help teams decide when a lightweight review is enough and when deeper assessment is required.

Third, it creates evidence. Regulators, auditors, customers, investors, and enterprise buyers increasingly want to know not only whether an organization uses AI responsibly, but how it proves that claim. Evidence may include inventories, risk assessments, vendor reviews, test results, approval records, monitoring logs, incident reports, and annual review notes.

The evidence layer is often ignored until the organization needs it. At that point, rebuilding decisions from emails, Slack messages, procurement notes, and scattered spreadsheets becomes painful. A template-based toolkit prevents that mess by creating documentation from the beginning.

A strong AI governance toolkit should also be adaptable. A small education startup does not need the same review structure as a multinational bank. A healthcare organization must treat patient data and clinical decision support differently from an e-commerce company using AI for product descriptions. A public sector agency faces different transparency and accountability expectations than a private marketing firm.

The templates should therefore provide structure without pretending every organization has the same risk profile. That balance matters. Too much rigidity slows adoption. Too much flexibility creates loopholes.

The Frameworks Behind the Toolkit

The ten templates in this article sit at the overlap of three major governance references: NIST AI RMF, the EU AI Act, and ISO/IEC 42001. They do not replace legal advice, certification work, or sector-specific requirements. What they do provide is a structure teams can actually work with and connect to those expectations.

NIST AI Risk Management Framework

The NIST AI Risk Management Framework remains one of the most practical references for organizing AI risk work because it does not reduce governance to one department. Its core functions — Govern, Map, Measure, and Manage — encourage organizations to think about AI risk across leadership, context, testing, and ongoing control.

For a toolkit, this matters because each template should support at least one of those functions. The governance charter and model inventory support Govern. The risk assessment and data classification templates support Map. Bias testing and vendor review support Measure. Incident response, monitoring, and deployment workflows support Manage.

That connection is useful because it prevents the toolkit from becoming a random bundle of documents. Each template has a place in the operating model.

EU AI Act

The EU AI Act has changed how many organizations think about AI risk, even outside Europe[4]. Its risk-based structure has pushed businesses to ask whether a system is prohibited, high-risk, limited-risk, or lower-risk. For companies operating in or selling into the EU, that classification work is not optional. For companies outside the EU, the Act still influences customer expectations, procurement reviews, and global governance discussions.

A practical toolkit should therefore include a risk assessment template that helps teams classify use cases early. It should also include documentation, monitoring, incident, human oversight, and vendor review components because those are the areas where regulatory expectations often become operational burdens.

The important point is not to turn every organization into a legal department. The point is to stop treating AI risk as a vague concern. If a use case affects employment, education, access to services, safety, financial opportunity, health, or fundamental rights, it deserves a stronger review process than an internal writing assistant.

ISO/IEC 42001

ISO/IEC 42001 matters because it frames AI governance as a management system. That changes the tone of the work. A management system is not a one-time policy. It requires scope, leadership, planning, support, operation, performance evaluation, and improvement.

That is where many AI governance programs are heading. Organizations want more than principles; they want repeatable controls. They want to show that AI is governed throughout its lifecycle. They want evidence that risks are reviewed, controls are maintained, incidents are handled, and improvements are made.

The monitoring and continuous improvement template is especially important here. AI systems can drift. Vendor terms can change. Model behavior can shift. Data sources can become outdated. A use case that was low-risk at launch can become more sensitive after integration with new data or automation. ISO-style thinking helps organizations avoid the trap of approving an AI system once and forgetting it exists.

Quick Framework Mapping for the Toolkit

| Toolkit Component | NIST AI RMF Alignment | EU AI Act Relevance | ISO/IEC 42001 Relevance |
|---|---|---|---|
| AI Governance Charter | Govern | Accountability and oversight | Leadership, roles, responsibilities |
| AI Acceptable Use Policy | Govern / Manage | Use restrictions, prohibited practices awareness | Operational control and internal rules |
| Risk Assessment Template | Map / Measure | Risk classification and high-risk screening | Risk planning and assessment |
| Vendor Due Diligence Checklist | Map / Measure / Manage | Third-party provider accountability | Supplier and external provider control |
| Data Governance Rules | Map / Measure | Data quality, privacy, sensitive data controls | Resource and operational controls |
| Model Inventory and Documentation | Govern / Map | Technical documentation and traceability | Documented information and lifecycle management |
| Bias and Fairness Assessment | Measure / Manage | Fundamental rights and discrimination risk | Performance evaluation and risk treatment |
| Incident Response Workflow | Manage | Post-market monitoring and serious incident handling | Corrective action and continual improvement |
| Approval and Deployment Workflow | Govern / Manage | Pre-deployment review and oversight | Operational planning and control |
| Monitoring and Audit Template | Measure / Manage | Ongoing compliance and monitoring | Performance evaluation and improvement |

This mapping is not meant to be decorative. It should be used when building the downloadable AI Governance Starter Toolkit ZIP. Each template should carry a short note showing which governance function it supports, which risk question it answers, and which team should maintain it.

That small discipline makes the toolkit easier to adopt. A legal team can see where the EU AI Act screening appears. A compliance team can see the audit trail. A data team can see where classification rules sit. A procurement team can see where vendor risk enters the process. A leadership team can see whether AI governance has a real owner.

What AI Governance Looks Like When It Fails Quietly

Most discussions about AI governance focus on visible failures. A biased outcome. A data leak. A system behaving in a way that draws attention. Those cases matter, but they are not the most common form of failure.

What usually happens is much quieter.

An AI system is introduced for a narrow use case. It works well. Over time, it is connected to additional data sources. New teams begin to rely on it. Outputs are reused in ways that were not originally intended. The system becomes part of decision-making without anyone formally expanding its scope.

No single change appears risky. The system still “works.” But the context around it has changed.

This is where governance gaps tend to appear.

Data that was once considered low sensitivity becomes more sensitive when combined with other sources. Outputs that were reviewed informally begin to influence decisions more directly. A tool that was initially optional becomes embedded in workflows.

Because the change is gradual, it rarely triggers immediate review.

By the time questions are raised, the system may already be relied upon in ways that were never assessed.

This is one of the reasons inventories, reassessment triggers, and monitoring matter. They are not only about catching errors. They are about detecting change.

Organizations that revisit their AI use periodically tend to identify this drift earlier. They adjust controls, update classifications, and refine oversight before the system becomes difficult to unwind.

Governance, in this sense, is less about preventing a single failure and more about preventing a slow shift into unmanaged risk.

The Operating Principle: Govern the Use Case, Not the Hype

One of the most useful habits in AI governance is separating the technology from the use case. The same model can be low-risk in one setting and high-risk in another. A language model used to summarize public blog comments does not raise the same issues as a model used to evaluate job applicants, triage patients, detect fraud, assign credit risk, or generate legal advice for customers.

This distinction is where many governance conversations become clearer. Teams often get stuck debating whether a tool is “safe” in general, which rarely leads anywhere useful. The better question is: safe for what purpose, with what data, under whose oversight, and what happens if it fails?

A proper AI governance toolkit should force that question repeatedly.

The acceptable use policy tells employees what they can and cannot do with AI tools. The risk assessment template examines the actual use case. The data governance rules decide what information may be used. The vendor checklist examines third-party dependencies. The model inventory and documentation template records what the system is, how it works, where it is used, and what its limits are. The approval workflow prevents sensitive deployments from slipping through informal channels. The monitoring template keeps the review alive after launch.

That is how governance becomes practical. It follows the use case from idea to retirement.

The 10 Essential AI Governance Templates for 2026

When organizations begin to take AI governance seriously, the first instinct is often to draft a policy. That instinct is understandable, but incomplete. A policy without supporting templates tends to remain theoretical. What actually shapes behavior inside organizations are the documents people must fill in, review, approve, and maintain as part of their daily work.

The templates below are not meant to sit in isolation. They are designed to connect with one another. A risk assessment should feed into an approval workflow. A vendor review should influence data governance decisions. A model inventory should support monitoring and audits. An incident report should trigger updates to policies and controls.

Each template solves a specific operational problem. Together, they form a system.

1. AI Governance Charter / Committee Charter

The first real sign that AI governance is taken seriously inside an organization is not a policy document. It is a clear answer to a simple question: who is responsible?

Without a defined governance structure, AI decisions tend to scatter across departments. Engineering adopts tools to move faster. Procurement signs vendors under pressure to deliver results. Legal reacts after deployment. Compliance hears about AI when an audit is already underway. Leadership assumes someone else is watching the risk.

A governance charter closes that gap.

At its core, the charter defines a cross-functional group responsible for overseeing AI use across the organization. In smaller companies, this may be a lightweight working group. In larger organizations, it often becomes a formal committee involving representatives from technology, legal, compliance, data, security, and business units.

The value of the charter is not in formality. It is in clarity.

A strong charter typically includes:

- Defined ownership for AI governance at the executive or senior level

- Clear roles and responsibilities across departments

- Scope of authority (what the committee can approve, reject, or escalate)

- Decision-making structure and voting or review process

- Meeting cadence and reporting expectations

- Integration points with existing governance bodies (risk, security, privacy, audit)

In practice, organizations often struggle less with writing the charter and more with enforcing it. Teams may bypass governance if they see it as a blocker. That is why the charter should be tied to real processes. Procurement should require governance review for certain AI vendors. Product teams should require approval before deploying high-impact systems. Compliance teams should reference the charter when conducting audits.

I have seen organizations where governance existed on paper but had no operational presence. In those environments, AI adoption continued exactly as before, just with more documentation. The difference comes when the charter is connected to actual decision points.

One practical approach is to start with a narrow scope. Focus the committee’s authority on high-risk or externally facing AI use cases. As the process matures, the scope can expand. Trying to govern everything from the beginning often leads to resistance and avoidance.

The charter also sets the tone. If governance is framed as a way to enable safe and scalable AI use, teams are more likely to engage. If it is framed purely as restriction, it will be treated as an obstacle.

2. AI Acceptable Use Policy (AUP)

If there is one document that immediately changes behavior across an organization, it is the acceptable use policy.

Most employees do not read governance frameworks. They do, however, pay attention when a policy clearly states what they can and cannot do with AI tools.

The need for this policy has become more urgent with the spread of easily accessible AI systems. Employees are using AI for drafting emails, summarizing documents, writing code, generating reports, analyzing data, and interacting with customers. Much of this activity happens outside formal approval channels.

The acceptable use policy creates boundaries without shutting down productivity.

A practical AI AUP should address:

- Which types of AI tools are approved, restricted, or prohibited

- Clear rules on handling sensitive, confidential, or personal data

- Restrictions on uploading internal documents to external systems

- Guidelines for using AI-generated content in external communications

- Requirements for human review in specific contexts

- Prohibited uses (e.g., discriminatory decision-making, unauthorized surveillance, misleading content)

One of the most effective sections in a well-written AUP is the “never paste” rule. It does not need to be complicated. It simply states that certain categories of information should never be entered into external AI tools. This includes customer data, proprietary business information, internal strategy documents, security credentials, and regulated data.
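For teams that want to back the "never paste" rule with a lightweight technical check, the idea can be sketched as a simple pattern scan that runs before text is sent to an external tool. This is a minimal illustration, not a data loss prevention product: the pattern names, the regular expressions, and the function `find_never_paste_matches` are all assumptions made for this example, and a real organization would tune them to its own data categories.

```python
import re

# Hypothetical patterns for illustration only. They catch a few obvious
# cases and will miss many others; they are not a complete control.
NEVER_PASTE_PATTERNS = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "credential_assignment": re.compile(
        r"(?i)\b(password|api[_-]?key|secret)\s*[:=]\s*\S+"
    ),
}


def find_never_paste_matches(text: str) -> list[str]:
    """Return the names of the never-paste categories detected in the text."""
    return [
        name
        for name, pattern in NEVER_PASTE_PATTERNS.items()
        if pattern.search(text)
    ]


if __name__ == "__main__":
    draft = "Please rewrite this reply for jane.doe@example.com"
    hits = find_never_paste_matches(draft)
    if hits:
        print("Blocked before sending to an external AI tool:", hits)
```

A check like this works best as a reminder at the point of use. It does not replace the written rule, because many sensitive categories (strategy documents, regulated data) cannot be recognized by pattern alone.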

Organizations that skip this step often discover the problem after the fact, when sensitive information appears in unexpected places.

Another important element is clarity around AI-generated content. Employees should understand when AI assistance is acceptable, when disclosure is required, and when human authorship must take priority. In some industries, presenting AI-generated content without review or attribution can create legal or reputational risk.

The challenge with acceptable use policies is keeping them realistic. If the policy is too restrictive, employees will ignore it. If it is too vague, it becomes meaningless. The balance comes from understanding how AI is actually being used inside the organization.

One approach that works well is to observe usage patterns for a short period before finalizing the policy. Identify common tools, common use cases, and common risks. Then write the policy in a way that addresses real behavior rather than hypothetical scenarios.

3. AI Risk Assessment and Classification Template

This is where AI governance begins to move from awareness to structure.

Not every AI use case carries the same level of risk. Treating all systems equally either creates unnecessary friction or leaves serious risks unaddressed. A risk assessment template provides a consistent way to evaluate AI use cases before they are deployed.

In many organizations, this becomes the most frequently used governance document.

A well-designed risk assessment template should capture:

- A clear description of the AI use case and its purpose

- The type of data involved (public, internal, confidential, personal, sensitive)

- The level of human oversight in decision-making

- The potential impact of incorrect or biased outputs

- The affected users or stakeholders

- Dependencies on third-party models or services

- Security and privacy considerations

The template should also include a scoring or classification mechanism. This does not need to be overly complex. The goal is to separate low-risk use cases from those that require deeper review.

For example, an internal productivity assistant used to draft meeting notes may fall into a low-risk category. A system used to evaluate job applicants, approve financial transactions, or support medical decisions would fall into a higher-risk category and require more thorough review.
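A scoring mechanism of this kind can be sketched in a few lines. The field names, weights, and thresholds below are assumptions chosen for illustration, not values taken from any framework; each organization would set its own and document why.

```python
from dataclasses import dataclass


@dataclass
class UseCaseAssessment:
    name: str
    data_category: str         # "public", "internal", "confidential", "personal", "sensitive"
    affects_individuals: bool  # e.g. hiring, credit, or medical decisions
    human_review: bool         # a person reviews outputs before they take effect
    externally_facing: bool


# Illustrative weights only (assumption): higher means more sensitive data.
DATA_WEIGHTS = {
    "public": 0,
    "internal": 1,
    "confidential": 2,
    "personal": 3,
    "sensitive": 3,
}


def classify(assessment: UseCaseAssessment) -> str:
    """Return "low", "medium", or "high" from a simple additive score."""
    score = DATA_WEIGHTS[assessment.data_category]
    score += 3 if assessment.affects_individuals else 0
    score += 0 if assessment.human_review else 2
    score += 1 if assessment.externally_facing else 0
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


if __name__ == "__main__":
    notes = UseCaseAssessment("Meeting notes assistant", "internal", False, True, False)
    screening = UseCaseAssessment("Applicant screening", "personal", True, True, False)
    print(notes.name, "->", classify(notes))
    print(screening.name, "->", classify(screening))
```

Under these assumed weights, the meeting notes assistant lands in the low tier and the applicant screening tool lands in the high tier even with human review in place, which mirrors the distinction drawn above.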

One of the common mistakes I have seen is treating risk assessment as a one-time exercise. In reality, AI systems evolve. New data sources are added. Integrations expand. Automation increases. What started as a low-risk tool can become more sensitive over time.

That is why the risk assessment template should include a review trigger. Changes in data, scope, functionality, or integration should prompt a reassessment.
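One way to make the review trigger concrete is to compare the record that was approved with the current state of the system and list which trigger fields have changed. The sketch below assumes each system is described by a plain dictionary; the field names and the function `reassessment_reasons` are hypothetical.

```python
# Fields whose change should prompt a reassessment, following the four
# triggers named in the text. The key names are assumptions.
REVIEW_TRIGGER_FIELDS = ("data_sources", "scope", "functionality", "integrations")


def reassessment_reasons(approved: dict, current: dict) -> list[str]:
    """Return the trigger fields that differ between approval time and now."""
    return [
        field_name
        for field_name in REVIEW_TRIGGER_FIELDS
        if approved.get(field_name) != current.get(field_name)
    ]


if __name__ == "__main__":
    approved = {
        "data_sources": ["public docs"],
        "scope": "marketing drafts",
        "functionality": "summarize",
        "integrations": [],
    }
    current = dict(approved, data_sources=["public docs", "CRM records"], integrations=["email"])
    reasons = reassessment_reasons(approved, current)
    if reasons:
        print("Reassessment required, changed fields:", reasons)
```

An empty list means nothing on the trigger list has changed since approval. Any non-empty result is a prompt to reopen the assessment, not an automatic reclassification.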

The real value of this template is not the score itself. It is the conversation it forces. Teams are required to think through how the system works, what could go wrong, and who is affected. That awareness alone prevents many avoidable issues.

4. AI Vendor Due Diligence and Third-Party Risk Checklist

In 2026, very few organizations build all their AI systems from scratch. Most rely on a combination of external models, APIs, platforms, and vendors. That dependency introduces risk that cannot be managed through internal policies alone.

A vendor due diligence checklist brings structure to how organizations evaluate AI providers before integrating their tools.

Procurement teams are already familiar with vendor risk assessments, but AI introduces new dimensions that traditional checklists may not cover. These include training data sources, model behavior, update cycles, explainability, bias mitigation, and data handling practices specific to AI systems.

A strong AI vendor checklist should include:

- Data handling practices (what data is collected, stored, or reused)

- Security controls and certifications

- Model transparency and documentation

- Subprocessor and third-party dependencies

- Incident response commitments and notification timelines

- Contractual terms related to liability and data ownership

One area that deserves particular attention is data reuse. Some AI vendors use customer inputs to improve their models. That practice can be acceptable in certain contexts, but it must be clearly understood and controlled. Organizations should know whether their data becomes part of a broader training dataset and what safeguards are in place.

Another important consideration is vendor lock-in. AI systems that are deeply integrated into workflows can be difficult to replace. The due diligence process should therefore consider not only current capabilities but also long-term flexibility.

In practice, vendor governance often becomes reactive. Teams adopt a tool because it works, and the review happens later. The checklist helps reverse that pattern by placing governance before integration.

Organizations that consistently apply vendor due diligence tend to face fewer surprises during audits, fewer incidents related to third-party systems, and fewer disputes over contractual responsibilities.

5. Data Governance and Classification Rules for AI

Most AI governance failures are not caused by the model itself. They are caused by the data flowing through it.

Organizations tend to underestimate how quickly data exposure risk increases once AI tools are connected to internal content. Retrieval systems pull from documents that were never designed for external processing. Prompts include fragments of confidential material. Logs capture inputs that contain sensitive information. Outputs combine data in ways that make it easier to infer details that were not explicitly shared.

A data governance template for AI should address these realities directly.

At a minimum, it should define:

- Data categories (public, internal, confidential, restricted, regulated)

- Rules for which categories can be used with which types of AI systems

- Handling requirements for personal and sensitive data

- Guidelines for anonymization or masking where appropriate

- Retention and logging expectations for AI interactions

In many organizations, data classification already exists, but it is not applied consistently to AI use. The template should bridge that gap. It should make it clear that classification rules extend to prompts, outputs, training data, and integrated systems.

One of the most overlooked areas is retrieval-based AI. Systems that connect language models to internal knowledge bases are often treated as safe because they operate “inside” the organization. In practice, they can expose sensitive information if access controls are weak or if the retrieval layer is not properly scoped.

The template should therefore include controls specific to retrieval workflows, such as access-based filtering, logging of queries, and periodic review of indexed content.
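Access-based filtering and query logging can be illustrated with a small sketch. It assumes each indexed document carries a set of groups allowed to read it and that the caller already knows the user's groups; the `Document` type, the `filter_for_user` function, and the logger name are all hypothetical, and a real retrieval stack would enforce this inside the search layer rather than afterwards.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("retrieval_audit")  # hypothetical audit logger name


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


def filter_for_user(
    user_id: str,
    user_groups: set[str],
    query: str,
    candidates: list[Document],
) -> list[Document]:
    """Keep only documents the user may read, and log the query for review."""
    permitted = [doc for doc in candidates if doc.allowed_groups & user_groups]
    # Note: queries can themselves contain sensitive text, so this log needs
    # the same retention and access rules as other AI interaction logs.
    logger.info(
        "user=%s query=%r returned=%d withheld=%d",
        user_id,
        query,
        len(permitted),
        len(candidates) - len(permitted),
    )
    return permitted


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    docs = [
        Document("hr-1", "Salary bands", frozenset({"hr"})),
        Document("kb-1", "VPN setup guide", frozenset({"all-staff"})),
    ]
    visible = filter_for_user("u42", {"all-staff", "sales"}, "vpn", docs)
    print([doc.doc_id for doc in visible])
```

The point of the sketch is the order of operations: documents are filtered by the requesting user's permissions before anything reaches the model, so the model cannot summarize content the user could not have opened directly.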

Another area that requires attention is synthetic data generation. Some teams use AI to create test datasets or training examples. While useful, this practice can introduce subtle risks if the generated data reflects patterns from real individuals or proprietary material.

The strength of a data governance template lies in its clarity. It should not require interpretation. Teams should be able to look at a data category and know immediately what is allowed.
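That kind of at-a-glance clarity can be expressed as a lookup table from data category to permitted AI system types. The sketch below reuses the five categories listed earlier; the system type names and the specific allowances are assumptions for illustration, not a recommended policy.

```python
# Which types of AI system each data category may be used with.
# System type names and allowances are illustrative assumptions.
ALLOWED_SYSTEMS = {
    "public": {"external_public_tool", "approved_vendor_tool", "internal_hosted_model"},
    "internal": {"approved_vendor_tool", "internal_hosted_model"},
    "confidential": {"internal_hosted_model"},
    "restricted": set(),
    "regulated": set(),
}


def is_use_allowed(data_category: str, system_type: str) -> bool:
    """Return True only if the category explicitly permits the system type."""
    # Unknown categories fall through to an empty set, so they are denied.
    return system_type in ALLOWED_SYSTEMS.get(data_category, set())


if __name__ == "__main__":
    print(is_use_allowed("internal", "approved_vendor_tool"))
    print(is_use_allowed("confidential", "external_public_tool"))
```

Denying by default for unlisted categories is a deliberate choice in this sketch: a new or misspelled category should trigger a question to the data owner, not a silent approval.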

6. Model Inventory and Documentation Template

Ask a simple question inside most organizations: how many AI systems are currently in use?

The answers are usually incomplete.

Some systems are officially approved. Others are in pilot stages. Some are embedded in third-party tools. Others are used informally by teams without central visibility. Without a model inventory, governance becomes guesswork.

A model inventory template creates a single source of truth.

It does not need to be complicated. It needs to be maintained.

A practical inventory should capture:

- System name and description

- Owner or responsible team

- Use case and business function

- Risk classification

- Data sources and types

- Underlying model or vendor

- Approval status and review history

Over time, organizations may expand this into more detailed documentation, often referred to as model cards or system documentation. These can include performance metrics, known limitations, testing results, and intended use boundaries.

The value of the inventory is not just visibility. It supports multiple governance functions at once. Compliance teams can track which systems require review. Security teams can identify exposure points. Audit teams can verify that processes are followed. Leadership can understand how widely AI is used across the organization.

The challenge is adoption. Teams may resist adding entries if the process feels like extra work. One way to address this is to link the inventory to approval workflows. If a system cannot be deployed without being registered, the inventory stays current.
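
A minimal sketch of that link between inventory and deployment is shown below. The field names mirror the list above. The class names, the status values, and the rule that only an "approved" entry may deploy are assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class InventoryEntry:
    name: str
    description: str
    owner: str
    use_case: str
    risk_classification: str          # e.g. "low", "medium", "high" (assumed scale)
    data_sources: list
    model_or_vendor: str
    approval_status: str = "pending"  # "pending", "approved", "rejected" (assumed values)
    review_history: list = field(default_factory=list)


class ModelInventory:
    def __init__(self):
        self._entries = {}

    def register(self, entry: InventoryEntry) -> None:
        self._entries[entry.name] = entry

    def record_review(self, name: str, reviewed_on: date, outcome: str) -> None:
        """Append a review to the history and update the approval status."""
        entry = self._entries[name]
        entry.review_history.append((reviewed_on, outcome))
        entry.approval_status = outcome

    def can_deploy(self, name: str) -> bool:
        """Deployment gate: unregistered or unapproved systems are blocked."""
        entry = self._entries.get(name)
        return entry is not None and entry.approval_status == "approved"
```

In practice the same check could live in a release checklist or a procurement form rather than in code. What matters is that deployment asks the inventory first.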

Another approach is to start small. Capture high-impact systems first, then expand coverage gradually. Trying to document everything at once often leads to incomplete records.

A well-maintained inventory is often the difference between reactive governance and proactive governance.

7. Bias and Fairness Assessment Tool

Bias in AI systems is often discussed in abstract terms, but it becomes tangible when decisions affect people directly.

Hiring systems, credit scoring tools, recommendation engines, fraud detection models, and customer segmentation systems all have the potential to create uneven outcomes. Sometimes the bias is obvious. Sometimes it is subtle, emerging only after patterns are analyzed over time.

A bias and fairness assessment template provides a structured way to examine these risks.

It should include:

- Identification of potentially affected groups

- Review of input data for imbalance or skew

- Evaluation of outputs for consistent treatment across groups

- Testing approaches for detecting bias

- Mitigation strategies where issues are identified

Not every organization will run advanced statistical tests. That is acceptable. What matters is that teams actively consider whether the system could create unfair outcomes and document how they approached the question.

One of the practical challenges is defining fairness. Different contexts require different interpretations. Equal outcomes, equal opportunity, and proportional representation are not the same. The template should therefore encourage teams to define fairness in relation to the specific use case.
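
For teams that want a simple starting measurement, the sketch below compares selection rates across groups. It captures only one definition of fairness (similar selection rates) and says nothing about equal opportunity or error rates, so it should be read as a first screen rather than a verdict. The function names and the input format are assumptions made for the example.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is a bool."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to report
    return min(rates.values()) / highest
```

A ratio below 0.8 is sometimes used as a screening threshold, following the "four-fifths rule" from US employment guidance. Whether that threshold is appropriate depends on the use case and jurisdiction, which is exactly the kind of decision the template should ask teams to record.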

Another challenge is data availability. In some cases, organizations do not collect demographic data needed to evaluate bias. That limitation should be documented rather than ignored. It may also influence whether the use case is appropriate.

Bias assessment is not a one-time activity. Systems can behave differently as data changes or as usage patterns evolve. Periodic review should be part of the process.

Organizations that treat fairness as a measurable concern, rather than a general principle, are better positioned to respond when questions arise.

8. AI Incident Response and Reporting Workflow

Incidents involving AI systems rarely arrive with clear labels. They emerge as unusual outputs, unexpected behavior, customer complaints, internal reports, or audit findings. Without a defined response process, teams often react in an unstructured way.

An incident response template provides a path from detection to resolution.

It should define:

- What qualifies as an AI-related incident

- How incidents are reported and by whom

- Initial triage and severity classification

- Roles responsible for investigation and remediation

- Communication protocols (internal and external)

- Documentation and post-incident review requirements

The most important part of the workflow is clarity around ownership. When an incident occurs, there should be no confusion about who leads the response.

Another critical element is timing. Some incidents require immediate action, especially those involving data exposure or regulatory implications. Others may require investigation before decisions are made. The workflow should reflect these differences.
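
One way to encode those timing differences is a small triage function that maps a report to a severity level and a response window. The sketch below is illustrative only: the report fields, the severity rules, and the hour values are all placeholders that an organization would replace with its own.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    CRITICAL = "critical"


@dataclass
class IncidentReport:
    summary: str
    system_owner: str        # who leads the response for this system
    data_exposed: bool
    regulatory_impact: bool
    customer_facing: bool


def triage(report: IncidentReport) -> Severity:
    """Assign a severity level using simple, assumed rules."""
    if report.data_exposed and report.regulatory_impact:
        return Severity.CRITICAL
    if report.data_exposed or report.regulatory_impact:
        return Severity.HIGH
    if report.customer_facing:
        return Severity.MEDIUM
    return Severity.LOW


# Hours within which the response lead must act. Placeholder values.
RESPONSE_WINDOW_HOURS = {
    Severity.CRITICAL: 1,
    Severity.HIGH: 4,
    Severity.MEDIUM: 24,
    Severity.LOW: 72,
}
```

Keeping `system_owner` on the report itself reflects the ownership point above: the person leading the response is known at the moment the incident is logged.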

Organizations often underestimate the importance of post-incident review. Once the immediate issue is resolved, there is a tendency to move on. That is a missed opportunity. Each incident provides insight into gaps in governance, controls, or understanding.

The template should therefore include a requirement to document lessons learned and update relevant policies or processes.

In regulated environments, incident reporting may also involve external obligations. The workflow should be able to meet those obligations, and it is worth designing it that way even if the organization is not currently subject to formal reporting requirements.

9. AI Approval and Deployment Workflow

One of the most difficult aspects of AI governance is controlling how systems enter production.

Without a defined approval process, AI tools tend to spread informally. A team finds something useful, integrates it into their workflow, and only later considers governance implications. By that point, the system may already be embedded in daily operations.

An approval and deployment template creates a checkpoint before that happens.

It should outline:

- Submission requirements for new AI use cases

- Criteria for determining whether review is required

- Review stages based on risk level

- Required documentation (risk assessment, vendor review, data classification)

- Approval authority and decision records

The goal is not to slow down all innovation. It is to ensure that higher-risk systems receive appropriate scrutiny.

A practical approach is to create tiers. Low-risk use cases may require minimal documentation and a quick review. Higher-risk systems should go through a more detailed process involving multiple stakeholders.
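
A tiered process can be sketched as a mapping from a few screening questions to a list of review stages. The three questions, the tier rules, and the stage names below are assumptions made for illustration. A real workflow would take them from the organization's own risk assessment template.

```python
# Review stages required at each tier. Stage names are illustrative.
REVIEW_STAGES = {
    "low": ["self-assessment"],
    "medium": ["self-assessment", "risk assessment", "data classification check"],
    "high": [
        "self-assessment",
        "risk assessment",
        "data classification check",
        "vendor review",
        "governance committee approval",
    ],
}


def risk_tier(uses_personal_data: bool, affects_individuals: bool,
              acts_autonomously: bool) -> str:
    """Classify a use case into a tier using simple, assumed rules."""
    if affects_individuals or (uses_personal_data and acts_autonomously):
        return "high"
    if uses_personal_data or acts_autonomously:
        return "medium"
    return "low"


def review_plan(uses_personal_data: bool, affects_individuals: bool,
                acts_autonomously: bool) -> list:
    """Return the ordered review stages for a use case."""
    tier = risk_tier(uses_personal_data, affects_individuals, acts_autonomously)
    return list(REVIEW_STAGES[tier])
```

Publishing a table like `REVIEW_STAGES` alongside expected turnaround times is one way to make the process transparent to the teams who have to follow it.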

One of the challenges is shadow AI. Employees may adopt tools outside formal channels because they perceive the approval process as slow or unclear. The workflow should therefore be transparent and efficient. If teams understand what is required and how long it takes, they are more likely to follow it.

Another useful element is discovery. Some organizations include periodic reviews to identify AI systems already in use. These can then be brought into the governance process.

Approval is not the end of governance. It is the beginning of accountability.

10. Monitoring, Auditing and Continuous Improvement Template

AI systems do not remain static after deployment. Their context changes. Data evolves. User behavior shifts. Vendors update models. New risks emerge.

Without monitoring, governance becomes outdated quickly.

A monitoring and audit template ensures that AI systems remain under observation throughout their lifecycle.

It should include:

- Key performance and risk indicators

- Logging and tracking requirements

- Scheduled review intervals

- Audit procedures and documentation

- Triggers for reassessment or escalation

Monitoring does not always require complex tools. Even simple tracking mechanisms can provide useful visibility. What matters is consistency.

One of the practical challenges is deciding what to measure. For some systems, accuracy may be the primary concern. For others, fairness, stability, or user impact may be more relevant. The template should allow for variation while maintaining a structured approach.
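
The sketch below shows one way to keep the structure fixed while letting the indicators vary by system. Each system gets its own thresholds, and a single function reports the reasons a reassessment is due. The class, the field names, and the convention that every threshold is a minimum are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class MonitoringPlan:
    system_name: str
    review_interval_days: int
    last_review: date
    thresholds: dict  # indicator name -> minimum acceptable value


def reassessment_triggers(plan: MonitoringPlan, current_values: dict,
                          today: date) -> list:
    """Return the reasons this system should be reassessed (empty if none)."""
    reasons = []
    if today - plan.last_review > timedelta(days=plan.review_interval_days):
        reasons.append("scheduled review overdue")
    for indicator, minimum in plan.thresholds.items():
        value = current_values.get(indicator)
        if value is None:
            reasons.append(f"{indicator}: no measurement logged")
        elif value < minimum:
            reasons.append(f"{indicator}: {value} below minimum {minimum}")
    return reasons
```

A missing measurement is treated as a trigger in its own right, since an indicator that nobody logs offers no visibility.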

Auditing adds another layer. It provides an independent check on whether governance processes are followed. This can be internal or external, depending on the organization’s needs.

Continuous improvement ties everything together. Governance should evolve based on experience. Incidents, audits, and feedback should lead to updates in templates, policies, and workflows.

Organizations that treat governance as a living system, rather than a static requirement, are better prepared for the pace of change in AI.

How the Templates Work Together

Individually, each template addresses a specific concern. Together, they create a flow.

A new AI use case begins with a risk assessment. The data governance rules determine what information can be used. If an external vendor is involved, due diligence is performed. The system is registered in the inventory. If the use case is sensitive, a bias assessment may be required. The approval workflow determines whether the system can proceed. Once deployed, monitoring begins. If something goes wrong, the incident workflow is triggered. Over time, audits and reviews feed improvements back into the system.
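
The flow described above can be sketched as a function that returns the ordered steps for a given use case. The `UseCase` fields and the step labels are hypothetical. The order follows the paragraph above, with the vendor and bias steps included only when they apply.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    uses_external_vendor: bool
    sensitive: bool  # e.g. affects hiring, credit, or similar decisions


def governance_steps(uc: UseCase) -> list:
    """Return the templates a use case passes through, in order."""
    steps = ["risk assessment", "data governance check"]
    if uc.uses_external_vendor:
        steps.append("vendor due diligence")
    steps.append("inventory registration")
    if uc.sensitive:
        steps.append("bias assessment")
    steps += ["approval workflow", "monitoring"]
    return steps
```

Incident response and audit sit outside this list because they are triggered by events after deployment, not by the initial request.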

This is where governance starts to feel like part of the work, not something separate from it.

Why Most AI Governance Efforts Break at the Hand-off Point

One of the less visible problems in AI governance appears between teams, not within them.

A use case is reviewed and approved. Documentation is completed. The system is deployed. At that point, responsibility often shifts from one group to another. What was a governance decision becomes an operational system.

This hand-off is where gaps tend to emerge.

The team that approved the system may assume it will be used within defined limits. The team operating it may extend its use to meet business needs. Over time, the connection between the original review and current use weakens.

This is not usually intentional. It is a result of normal organizational behavior. Systems are adapted, reused, and integrated into new processes.

Without a clear link between approval, ownership, and ongoing monitoring, governance becomes a point-in-time activity rather than a continuous one.

Strong governance systems treat deployment as a transition, not a conclusion. Ownership remains visible. Monitoring is expected. Changes in use trigger reassessment. Documentation is updated as the system evolves.

This is where many template-based approaches either succeed or fail. The templates themselves may be well designed, but if they are not connected across teams, the system fragments.

Maintaining that connection requires discipline more than complexity. It requires that approval records remain accessible, that ownership is clear, and that changes in use are treated as governance events rather than informal adjustments.
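
One way to treat a change in use as a governance event is to compare what was approved with what the system does today. The sketch below assumes the approval record stores the approved purposes, data categories, and user groups as sets. These field names are illustrative and not part of any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageScope:
    purposes: frozenset
    data_categories: frozenset
    user_groups: frozenset


def scope_changes(approved: UsageScope, current: UsageScope) -> dict:
    """Return what the system does now that the approval record did not cover."""
    changes = {
        "purposes": current.purposes - approved.purposes,
        "data_categories": current.data_categories - approved.data_categories,
        "user_groups": current.user_groups - approved.user_groups,
    }
    # Keep only the dimensions where something new appeared.
    return {k: v for k, v in changes.items() if v}


def needs_reassessment(approved: UsageScope, current: UsageScope) -> bool:
    return bool(scope_changes(approved, current))
```

Narrowing a system's use does not trigger anything here. Only additions beyond the approved scope do, which matches the pattern described above, where systems are extended to meet new business needs.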

How Organizations Actually Implement This in Practice

On paper, AI governance looks straightforward. In practice, it rarely unfolds that way.

What I have seen across different organizations is a pattern. Teams agree that governance is necessary, but they struggle with where to begin. Some try to build everything at once and lose momentum. Others delay action until they have the “right” structure, which often means waiting until risks are already present.

The organizations that make progress tend to follow a more pragmatic path. They do not try to perfect governance before using it. They introduce structure in stages and let the process mature through use.

Phase 1: Establish Visibility Before Control

The first step is not control. It is visibility.

Trying to control AI use without knowing where it exists usually leads to frustration. Teams feel constrained, and governance teams lack the information needed to make informed decisions.

This phase focuses on understanding how AI is already being used inside the organization.

- Identify commonly used AI tools across departments

- Map basic use cases (content generation, data analysis, automation, decision support)

- Capture high-impact or externally facing applications

- Begin a simple model inventory, even if incomplete

This does not need to be perfect. In fact, it rarely is. What matters is creating an initial picture.

One of the most effective techniques at this stage is informal discovery. Instead of launching a formal audit, some organizations start with short conversations across teams. They ask what tools people are using, how they are using them, and what problems they are trying to solve.

The answers are often more revealing than expected.

In several cases, I have seen teams relying heavily on AI tools that leadership assumed were not in use at all. That gap is not a failure. It is a signal that governance needs to catch up with reality.

Phase 2: Introduce Core Guardrails

Once there is a basic understanding of usage, the next step is to introduce guardrails that apply across the organization.

This is where the acceptable use policy and data governance rules become important.

The goal is not to eliminate risk entirely. It is to reduce the most obvious and immediate risks.

- Define clear rules for handling sensitive data in AI systems

- Communicate acceptable and prohibited uses in simple language

- Provide examples that reflect actual work scenarios

- Ensure employees know where to find guidance when unsure

Communication matters as much as the content itself. Policies that are buried in internal portals or written in dense legal language tend to be ignored. Policies that are practical, visible, and connected to everyday work are more likely to be followed.

Some organizations support this phase with short training sessions or internal briefings. These do not need to be extensive. Even a clear explanation of what not to do can prevent avoidable incidents.

Phase 3: Create Structured Review for Higher-Risk Use Cases

At this point, governance begins to take a more structured form.

Instead of applying the same level of review to all AI use, organizations introduce a tiered approach. Low-risk activities continue with minimal friction, while higher-risk systems go through defined review steps.

This is where the risk assessment template, vendor checklist, and approval workflow become active.

- Require risk assessments for new or expanded AI use cases

- Apply vendor due diligence before integrating external systems

- Route higher-risk use cases through a governance review process

- Document decisions and approvals

The key here is consistency, not perfection. Even a simple review process, applied consistently, creates a stronger governance posture than a complex process that is rarely followed.

Organizations often discover that only a small percentage of use cases require deep review. Most activity falls into lower-risk categories. That realization helps maintain momentum, because governance does not become a bottleneck for everyday work.

Phase 4: Embed Governance Into Existing Workflows

Governance becomes sustainable when it is part of how work is already done.

Instead of treating AI governance as a separate layer, organizations integrate it into existing processes.

- Procurement processes include AI vendor due diligence

- Product development includes AI risk assessment checkpoints

- Security reviews consider AI-specific risks

- Compliance and audit functions reference AI documentation

This integration reduces duplication and makes governance more natural. Teams do not feel like they are doing extra work. They are following an extended version of processes they already understand.

In some organizations, this phase also involves tooling. Governance platforms, workflow systems, and internal dashboards can help track approvals, maintain inventories, and monitor usage. These tools can be helpful, but they are not a prerequisite. The templates themselves provide the structure needed to begin.

Phase 5: Monitor, Review, and Adjust

No governance system remains static.

As AI use expands, new questions appear. Some controls may be too strict. Others may be too loose. New technologies introduce new risks. Regulatory expectations evolve.

This phase focuses on maintaining and improving the system over time.

- Conduct periodic reviews of AI systems and use cases

- Update risk classifications when scope or data changes

- Review incidents and adjust controls accordingly

- Refine policies and templates based on experience

The organizations that handle this phase well tend to treat governance as a continuous process rather than a one-time project. They expect change and build flexibility into their approach.

What Slows Organizations Down

Even with a clear structure, implementation is rarely straightforward. Across different organizations, the same issues tend to come up.

One of the most common challenges is resistance. Teams may see governance as something that slows down innovation. This is especially true in environments where speed is valued. The response is not to remove governance, but to make it proportionate. When teams see that low-risk work is not heavily restricted, they are more willing to engage with the process.

Another challenge is resource constraint. Not every organization has dedicated AI governance teams. In many cases, responsibilities are shared across existing roles. This can work if expectations are realistic. The templates should be simple enough to use without requiring specialized expertise for every step.

There is also the issue of fragmentation. Different departments may adopt their own approaches to AI, leading to inconsistent practices. A central toolkit helps reduce this fragmentation, but it requires coordination.

Finally, there is the pace of change. AI tools evolve quickly. New capabilities appear before governance processes have fully adapted. Organizations that wait for stability before acting often fall behind. Those that build adaptable systems tend to respond more effectively.

Small Teams vs Larger Organizations

AI governance does not need to look the same everywhere.

In smaller organizations, the toolkit can be implemented in a lightweight form. A single responsible lead, a simplified risk assessment, and a basic approval process may be enough to create meaningful structure.

In larger organizations, governance tends to be more formal. Committees, detailed documentation, layered review processes, and integration with enterprise systems become more common.

The important point is not the size of the system, but its consistency.

A simple process that is followed is more effective than a complex one that is ignored.

Organizations that start with manageable steps and expand gradually tend to build stronger governance over time than those that attempt to design a complete system upfront.

What Happens in Practice: Patterns, Failures, and Quiet Successes

There is a gap between how AI governance is described and how it shows up inside organizations. On paper, governance looks structured. In reality, it often develops in response to pressure.

Sometimes that pressure comes from regulators. Sometimes from customers. Sometimes from internal audit. And sometimes from a situation that should not have happened.

Looking across different organizations, a few patterns repeat often enough to be worth paying attention to.

When Governance Arrives Too Late

In several organizations, AI adoption moved faster than expected. Teams experimented with tools, integrated APIs, automated parts of workflows, and shared results across departments. For a while, everything appeared to work.

The problem usually surfaced when something small revealed a larger issue.

It might be a client asking how their data was handled. It might be an internal review noticing that multiple teams were using different AI tools with inconsistent controls. It might be a vendor contract that did not clearly define data usage. It might be a system producing outputs that could not be explained or justified.

At that point, the organization often had to pause and retrace steps.

Without an inventory, it was difficult to identify all active systems. Without a risk assessment record, it was unclear which use cases required deeper review. Without a vendor checklist, procurement decisions had to be revisited. Without a clear policy, employees were unsure what was acceptable.

What could have been addressed early through structured templates became a reactive exercise.

The lesson is not that organizations should slow down adoption. It is that adoption without structure creates hidden work that eventually surfaces.

When Governance Becomes a Bottleneck

There is another pattern that appears in organizations that take governance seriously but approach it too heavily.

In these cases, every AI-related activity is routed through the same review process. Simple use cases are treated the same as complex ones. Documentation requirements become extensive. Approval timelines stretch.

The result is predictable.

Teams begin to look for ways around the process.

Shadow AI increases, not because employees intend to bypass governance, but because they need to complete their work. Governance becomes associated with delay rather than support.

The organizations that avoid this pattern tend to separate use cases by risk level. They allow low-risk activity to proceed with minimal friction while focusing attention on areas where impact is higher.

This is where the risk assessment template plays a practical role. It allows governance to be selective without being arbitrary.

Quiet Success: Governance That Feels Invisible

The most effective AI governance systems are not always visible. They are embedded.

In these environments, teams do not talk about governance as a separate activity. It is part of how decisions are made.

When a new AI tool is introduced, someone naturally asks about data usage. When a vendor is considered, procurement follows a checklist. When a system affects customers, a review is triggered. When something unusual happens, there is a clear path to report it.

These behaviors do not require constant reminders because they are supported by templates that are already part of the workflow.

One organization I observed had a simple but effective approach. Any new AI-related purchase required a short risk assessment form. The form was not complex, but it forced the requester to describe the use case, data involved, and potential impact. In many cases, that alone was enough to identify issues early.

Another organization focused on maintaining an up-to-date inventory. Every approved system was logged, and periodic reviews were scheduled. This created a level of visibility that made it easier to respond to questions from leadership and auditors.

These are not dramatic interventions. They are small, consistent practices.

Lessons From Incidents

When incidents occur, they often reveal gaps that were not obvious before.

In one case, an organization discovered that employees were using an external AI tool to process customer inquiries. The tool was effective, but it retained interaction data in a way that was not fully understood. The issue came to light during a routine review.

The response involved updating the acceptable use policy, introducing clearer data handling rules, and requiring vendor review for similar tools.

In another case, a system used to categorize applications produced uneven outcomes across different groups. The issue was not immediately visible because the outputs appeared reasonable at a surface level. A deeper review identified patterns that required adjustment.

This led to the introduction of a bias assessment step for similar use cases and more frequent monitoring.

These examples are not unusual. They reflect how governance evolves through experience.

What matters is not the absence of incidents. It is the ability to respond, learn, and improve.

Industry Differences That Shape Governance

While the core templates remain relevant across sectors, their application varies depending on context.

Financial Services

In financial environments, the focus is often on decision-making systems. Credit assessment, fraud detection, risk scoring, and customer interactions all carry regulatory and reputational implications.

Governance in this space tends to emphasize documentation, auditability, and explainability. Risk assessment and model documentation become central. Vendor due diligence is also more detailed, given the reliance on external providers.

Healthcare

Healthcare introduces additional layers of sensitivity. Data privacy, patient safety, and clinical accuracy are critical.

Governance here often involves stricter data controls, more detailed validation processes, and closer integration with regulatory requirements. Human oversight plays a significant role, especially in systems that influence clinical decisions.

Public Sector

Public sector organizations face expectations around transparency and accountability. AI systems used in public services must often be explainable not only to internal teams but also to the public.

Governance frameworks in this context may include additional documentation, public disclosures, and structured review processes.

Commercial and Technology Companies

In commercial environments, the balance between speed and control is more pronounced.

These organizations often adopt AI to gain competitive advantage, improve efficiency, or enhance customer experience. Governance must therefore support innovation while managing risk.

Templates that are flexible and scalable tend to work better in these settings. Overly rigid processes can slow adoption, while insufficient structure can lead to inconsistent practices.

Where Tools and Platforms Fit

As governance matures, many organizations begin to explore tools that support the process.

Platforms can help track inventories, manage workflows, document decisions, and monitor systems. Some focus specifically on AI governance, while others extend existing governance, risk, and compliance systems.

These tools can be useful, but they are not a starting point.

Without clear processes, a platform becomes a repository for incomplete information. With clear templates and workflows, tools can add efficiency and visibility.

Organizations that adopt tools after establishing basic structure tend to see better results than those that rely on tools to define their governance approach.

What Still Makes AI Governance Difficult

Even with structure in place, AI governance remains a moving target.

The difficulty is not only technical. It is organizational. AI systems sit across departments, rely on external providers, evolve quickly, and are often adopted before formal processes catch up. That combination makes it difficult to maintain consistency.

One challenge that continues to surface is alignment. Legal, compliance, engineering, data, security, and business teams often approach AI from different perspectives. What looks like acceptable risk to one group may look like exposure to another. Templates help create a shared language, but alignment still requires ongoing discussion.

Another challenge is scale. What works for a small number of systems becomes harder to maintain as adoption grows. Inventories expand. Reviews increase. Monitoring becomes more complex. Organizations that do not adapt their approach tend to fall behind their own usage.

There is also the issue of change. AI tools are updated frequently. New features appear. Capabilities shift. Vendors adjust terms. A system that was reviewed six months ago may not behave the same way today. Governance has to account for that movement.

Finally, there is the tension between control and speed. Businesses want to move quickly. Governance introduces checkpoints. Finding the right balance is not a one-time decision. It is something organizations adjust continuously.

Looking Ahead: New Pressure Points

Several trends are already shaping how AI governance will need to evolve.

One of them is the growth of agent-based systems. These systems are designed to act, not just respond. They can trigger workflows, interact with tools, and carry out sequences of tasks with limited human intervention. That shift increases the importance of oversight and monitoring.

Another area is multimodal AI. Systems that combine text, images, audio, and other inputs introduce new forms of risk. Data handling becomes more complex. Outputs become harder to evaluate. Traditional review methods may not be sufficient.

There is also the continued rise of distributed AI use. Employees adopt tools independently. Teams experiment with new workflows. External integrations become easier. This environment makes centralized control more difficult and increases the importance of clear policies and accessible processes.

These trends do not require entirely new governance models. They require existing models to be applied more carefully and updated more frequently.

A Practical Starting Point: 30 / 60 / 90 Days

Organizations often ask how long it takes to establish AI governance. The answer depends on scope, but a phased approach helps create momentum.

First 30 Days

- Identify current AI use across key teams

- Create an initial model inventory (even if incomplete)

- Draft a basic acceptable use policy

- Define ownership for AI governance

First 60 Days

- Introduce risk assessment and classification for new use cases

- Apply vendor due diligence to external AI tools

- Refine data governance rules specific to AI

- Begin documenting higher-risk systems

First 90 Days

- Establish an approval workflow for sensitive use cases

- Introduce monitoring and periodic review

- Define an incident response process

- Align governance with existing compliance and audit functions

This timeline is not rigid. Some organizations move faster in certain areas and slower in others. What matters is progression. Governance improves through use, not through planning alone.

Checklist: Getting the Foundations Right

- Clear ownership of AI governance responsibilities

- Visibility into current AI systems and use cases

- Defined rules for acceptable use and data handling

- Structured risk assessment for new and evolving systems

- Consistent review of external vendors

- Documented approval process for higher-risk applications

- Ongoing monitoring and periodic reassessment

- Defined process for handling incidents and learning from them

These elements are not advanced. They are foundational. Without them, governance remains incomplete.

Closing Thoughts

AI governance is often framed as a requirement imposed from the outside. In practice, it becomes most valuable when it is treated as an internal capability.

Organizations that approach governance as a way to support decision-making tend to build systems that last. They create clarity around risk. They reduce uncertainty for teams. They respond more effectively when issues arise. They are better prepared when external scrutiny increases.

The ten templates outlined here are not meant to represent a finished state. They are a working foundation. They provide structure where there might otherwise be inconsistency. They turn broad principles into repeatable actions.

In 2026, the difference between organizations that manage AI well and those that struggle is rarely access to technology. It is the presence or absence of structure around how that technology is used.

That structure does not need to be complex to be effective. It needs to be applied.

What changes over time is not the need for governance, but how people actually carry it out. Organizations that build a habit of reviewing, questioning, and documenting their AI use tend to stay ahead of that change.

References

- National Institute of Standards and Technology (NIST). “AI Risk Management Framework (AI RMF 1.0).” https://www.nist.gov/itl/ai-risk-management-framework

- European Commission. “Artificial Intelligence Act.”

- International Organization for Standardization (ISO). “ISO/IEC 42001: Artificial Intelligence Management System.” https://www.iso.org/standard/81230.html

- European Parliament and Council. “Regulation (EU) 2024/1689 on Artificial Intelligence.” https://eur-lex.europa.eu/eli/reg/2024/1689/oj

- OWASP Foundation. “Top 10 Risks for Large Language Model Applications.” https://owasp.org/www-project-top-10-for-large-language-model-applications/

- McKinsey & Company. “The State of AI: Global Survey.” https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

Frequently Asked Questions About AI Governance Toolkit 2026

How long does it take to implement an AI governance toolkit in practice?

Most organizations begin to see a working structure within roughly 30 to 90 days, depending on how widely AI is already used. The initial phase usually focuses on visibility and acceptable use, while deeper elements like risk assessment and monitoring develop over time. What matters more than speed is consistency. A simple system applied consistently tends to outperform a complex system that teams avoid.

Do small organizations really need formal AI governance templates?

Yes, but not in the same form as large enterprises. Smaller teams benefit from lightweight versions of the same templates. Even a basic acceptable use policy, a simple risk check, and a clear owner can prevent common issues. The absence of structure tends to create more work later when systems need to be reviewed under pressure.
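For a small team, the lightweight version described above can be little more than a short record per tool and a check for what is missing. The sketch below is illustrative only: the `AIToolRecord` fields and the `governance_gaps` helper are hypothetical names chosen for this example, not part of any standard or framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIToolRecord:
    """Hypothetical minimal record for one AI tool in use."""
    name: str
    owner: Optional[str] = None          # the person accountable for the tool
    covered_by_use_policy: bool = False  # falls under the acceptable use policy
    risk_check_done: bool = False        # a simple risk check has been completed


def governance_gaps(tool: AIToolRecord) -> list[str]:
    """Return the basic governance elements still missing for this tool."""
    gaps = []
    if not tool.owner:
        gaps.append("no named owner")
    if not tool.covered_by_use_policy:
        gaps.append("not covered by acceptable use policy")
    if not tool.risk_check_done:
        gaps.append("risk check not completed")
    return gaps
```

A spreadsheet with the same three columns would serve equally well. The point is that each tool has an answer to each question before it is reviewed under pressure.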

What is the most critical template to start with?

The acceptable use policy and the risk assessment template usually create the fastest impact. One sets boundaries across the organization, while the other introduces structure into decision-making. Together, they reduce immediate risk and create a foundation for the remaining templates to build on.

How do organizations deal with shadow AI usage?

Shadow AI is rarely eliminated completely. The more effective approach is to reduce the need for it. Clear policies, accessible approved tools, and simple approval processes make it easier for teams to work within governance rather than around it. Periodic discovery exercises also help identify tools already in use.

How often should AI systems be reviewed after deployment?

Review frequency depends on the risk level and how the system is used. Higher-risk systems should be reviewed more frequently, especially if they interact with sensitive data or decision-making processes. Changes in data sources, integrations, or functionality should also trigger reassessment, regardless of schedule.
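One way to make that rule concrete is a small check that combines a schedule per risk tier with a change trigger. This is a minimal sketch under stated assumptions: the tier names and the 90, 180, and 365 day intervals are placeholders invented for illustration, not recommendations from any framework, and `AISystem` and `review_due` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed review intervals per risk tier, in days. Replace with your own policy.
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


@dataclass
class AISystem:
    """Hypothetical record of a deployed AI system."""
    name: str
    risk_tier: str                      # "high", "medium", or "low"
    last_reviewed: date
    changed_since_review: bool = False  # data source, integration, or functionality changed


def review_due(system: AISystem, today: date) -> bool:
    """Return True if the system should be reassessed now."""
    # A material change triggers reassessment regardless of schedule.
    if system.changed_since_review:
        return True
    interval = timedelta(days=REVIEW_INTERVAL_DAYS[system.risk_tier])
    return today - system.last_reviewed >= interval
```

Checking the change flag before the calendar reflects the answer above: a scheduled review date does not excuse a system that has changed since it was last assessed.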
