For a long time, transparency in AI lived in policy documents. It showed up in ethics statements, compliance briefings, and carefully worded blog posts that most users would never read. That model is starting to fade. What’s replacing it is less forgiving. People now encounter AI systems directly—through chat interfaces, recommendation engines, automated decisions—and the expectation is no longer that transparency exists somewhere in the background. It is expected to be present at the point of interaction, where decisions are felt, not described.

Across organizations, the pattern is consistent: many still treat disclosure as a layer added at the end, something to satisfy legal review or public relations concerns. But the market is moving in a different direction. Transparency is beginning to function as part of the product itself. When it is done well, it shapes user confidence, influences adoption, and increasingly determines whether a system is seen as credible or questionable.

This isn’t happening in isolation. Regulatory pressure is converging in a way that leaves less room for ambiguity. The European Commission’s evolving framework around high-risk systems has made disclosure obligations explicit, particularly where AI-generated content or automated decision-making could mislead users[1]. At the same time, work coming out of the United States has taken a more structural route. The National Telecommunications and Information Administration has pushed organizations toward a layered model of disclosure, one that includes technical artifacts like model cards and system documentation, not just surface-level explanations embedded in user interfaces[2]. These are not unrelated developments. They are different expressions of the same underlying expectation: that AI systems must be understandable at multiple levels, depending on who is interacting with them.

There’s another force shaping this space, less visible but just as influential. Standards bodies and research institutions have begun to close the gap between theory and execution. The National Institute of Standards and Technology, through its AI Risk Management Framework, treats transparency not as a communications exercise but as part of risk control[3]. The OECD’s updated principles on trustworthy AI reinforce the same idea, placing emphasis on traceability, explainability, and accountability across the lifecycle of a system[4]. This is no longer abstract. It is influencing procurement decisions, vendor evaluations, and internal governance structures in ways that are already visible inside large organizations.

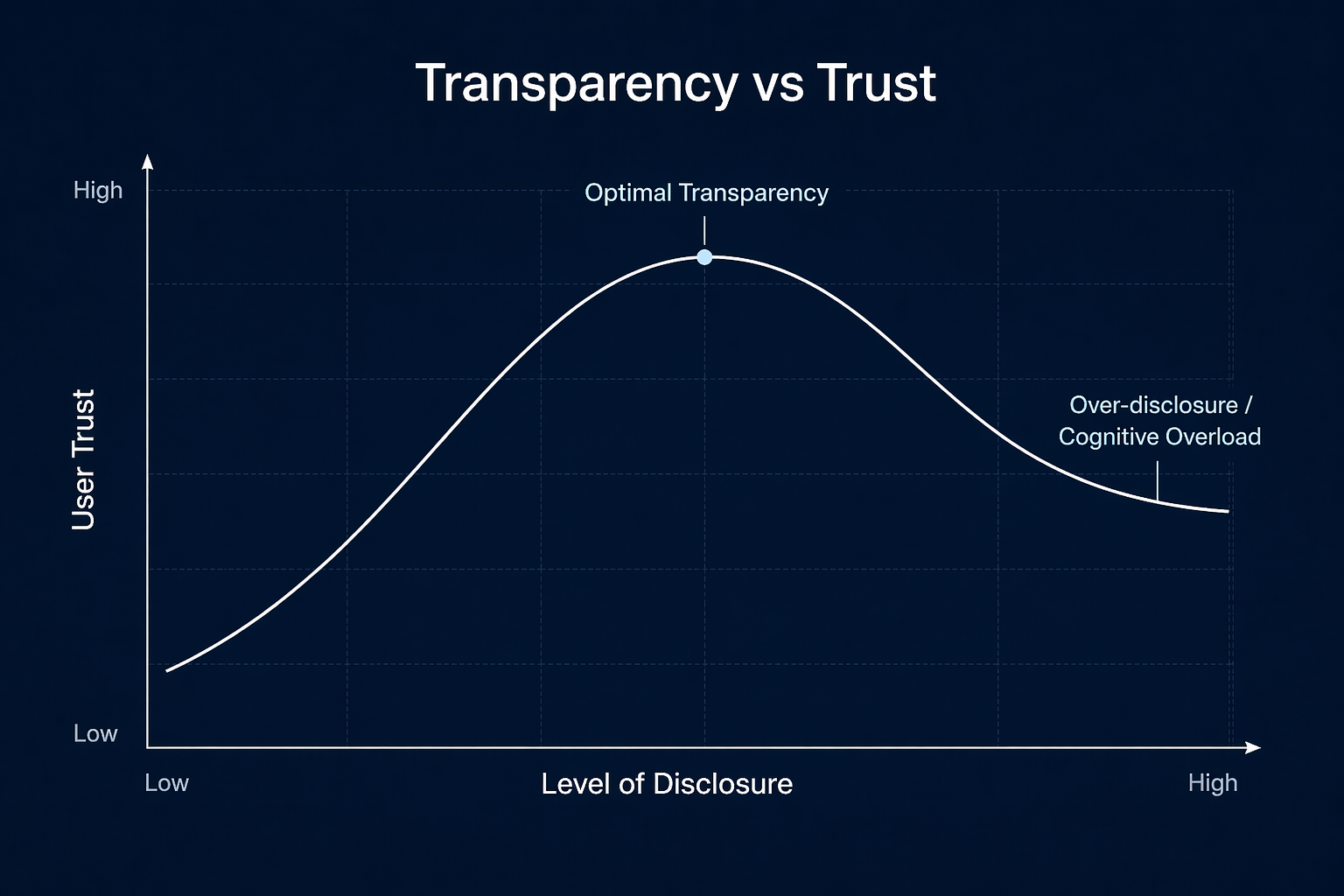

Transparency doesn’t behave in a straightforward way. Adding more disclosure does not always increase trust. In some cases, it does the opposite. Simple labels such as “AI-generated” can create skepticism if they appear without context, or if they signal automation without any indication of oversight or quality control. This tension has started to show up in research discussions and industry critiques, including work examining how major AI providers communicate their capabilities and limitations[5]. The issue is not a lack of transparency. It is a lack of usable transparency.

That distinction is where things start to break or hold. Usable transparency requires structure. It requires decisions about what to disclose, when to disclose it, and how to present it so that it can be understood without overwhelming the person on the other side. It also requires discipline. Over-disclosure leads to fatigue, while under-disclosure creates risk. Finding the balance is not a matter of instinct. It is a design problem, and increasingly, a strategic one.

What follows builds from that reality. Not from the assumption that organizations need to be convinced to care about transparency, but from the observation that many already do and are struggling with how to implement it in a way that holds up under scrutiny. The conversation has moved beyond whether disclosure is necessary. The real question now is how to make it operational—how to move from scattered statements to systems that communicate clearly, consistently, and credibly at scale.

Where Disclosure Stops Being Optional

It is often said that transparency is becoming mandatory, but the reality is more specific than that. What is happening is a narrowing of acceptable ambiguity. In earlier phases of AI deployment, organizations could rely on general statements: “this system uses AI,” “results may vary,” “human review is applied where necessary.” Those statements are starting to look insufficient, not because they are false, but because they do not answer the questions people actually have when interacting with a system.

What users are trying to understand is simpler and more direct. Was this output generated or curated? Is there a human reviewing it? What kind of data influenced it? Where can it fail? When those questions are not answered clearly at the point of use, trust often does not degrade gradually—it drops sharply. That pattern has been visible in user studies and even more so in real-world adoption behavior, particularly in sectors where the consequences of error are visible, such as finance, healthcare, and public services.

Some organizations have started to respond by treating disclosure as a design layer rather than a legal requirement. The shift is subtle, but it changes how systems are built. Instead of asking “what must we disclose,” they ask “what does the user need to understand to use this responsibly.” That difference changes the structure of the system itself. It influences how interfaces are built, how outputs are framed, and how information is surfaced without interrupting the flow of interaction.

There is also a growing recognition that not every interaction requires the same level of transparency. The push toward proportionality has been one of the more practical developments in recent frameworks. The IAB’s approach to disclosure, for example, centers on material impact—whether the involvement of AI meaningfully affects authenticity or decision-making. That principle cuts through a common mistake: over-labeling everything in a way that reduces clarity rather than improving it.

When applied properly, proportionality forces a more disciplined mapping of AI use across a product or service. A chatbot responding to customer queries does not carry the same disclosure burden as a system generating synthetic media or influencing financial decisions. Treating them as equivalent leads to noise. Distinguishing them creates space for meaningful signals.
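One way to make that mapping concrete is a small lookup from material impact to disclosure tier. The sketch below is illustrative only: the tier names, the `Touchpoint` fields, and the rules inside `disclosure_tier` are assumptions made for this example, not something taken from the IAB framework or from any regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    MINIMAL = 1    # light, ambient signal (for example a small badge)
    STANDARD = 2   # visible label plus a link to more detail
    PROMINENT = 3  # explicit notice before the user acts on the output


@dataclass(frozen=True)
class Touchpoint:
    name: str
    generates_synthetic_media: bool       # could output pass as authentic content?
    affects_consequential_decision: bool  # e.g. credit, health, eligibility
    user_facing: bool


def disclosure_tier(tp: Touchpoint) -> Tier:
    """Assign a disclosure tier from material impact (hypothetical rules)."""
    if tp.generates_synthetic_media or tp.affects_consequential_decision:
        return Tier.PROMINENT
    if tp.user_facing:
        return Tier.STANDARD
    return Tier.MINIMAL


touchpoints = [
    Touchpoint("support_chatbot", False, False, True),
    Touchpoint("synthetic_voiceover", True, False, True),
    Touchpoint("loan_pre_screening", False, True, True),
    Touchpoint("internal_spam_filter", False, False, False),
]

for tp in touchpoints:
    print(f"{tp.name}: {disclosure_tier(tp).name}")
```

The value of writing the rules down, even this crudely, is that the chatbot and the synthetic media system stop being labeled identically by default.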

Different Audiences, Different Expectations

Another pressure point that has become difficult to ignore is the diversity of audiences engaging with the same system. A single AI product may be used by a general consumer, evaluated by an enterprise client, and reviewed by a regulator. Expecting one disclosure format to satisfy all three is unrealistic, yet many organizations still attempt it.

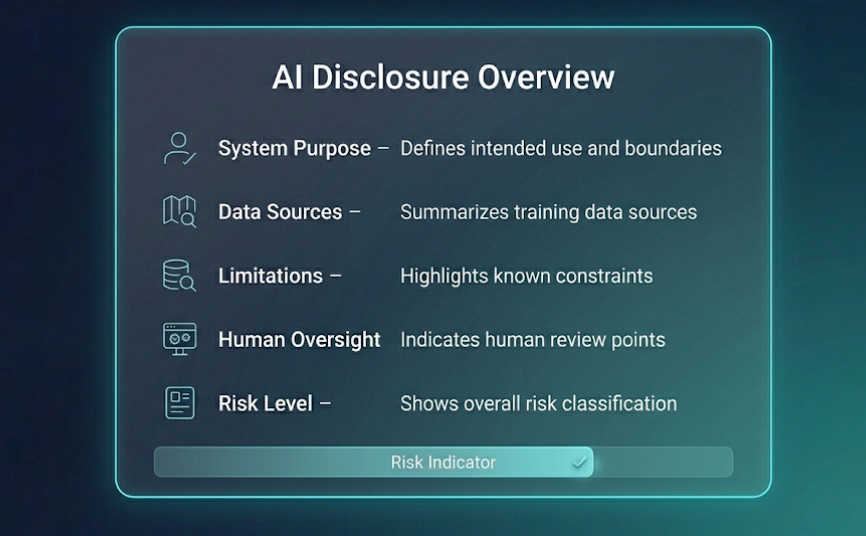

What has started to emerge instead is a layered approach. At the surface level, there is a need for something immediate and intuitive—simple indicators that communicate the presence and role of AI without requiring technical interpretation. This is where visual cues, short labels, and contextual messages play a role. They are not meant to explain everything. They are meant to signal that further information exists and can be accessed if needed.

Beneath that layer sits a more structured form of transparency. This includes documentation that outlines how the system is designed to function, where it draws its data from, and what limitations are known. Microsoft’s approach to transparency notes, for example, reflects this kind of middle layer—detailed enough for enterprise users and technical stakeholders, but still oriented toward practical understanding rather than academic completeness.

At the deepest level, there is the domain of technical and regulatory disclosure. This is where model cards, system cards, and audit-related materials come into play. The NTIA’s work has been particularly influential in framing these artifacts as part of a broader “information flow,” rather than isolated documents. The implication is that transparency is not a static report. It is a set of connected disclosures that evolve with the system.

The challenge is not in understanding these layers conceptually. It is in implementing them without fragmentation. When each layer is built independently, inconsistencies begin to appear. A system might present a confident interface while its documentation reveals significant uncertainty, or vice versa. Those gaps are often where credibility breaks down.

Why Simplicity Can Be Misleading

There is a tendency to assume that making disclosure simpler will automatically make it more effective. In practice, simplicity without substance can backfire. A single label stating that content is AI-generated might meet a formal requirement, but it rarely answers the questions that matter. In some cases, it introduces new doubts—about quality, oversight, or intent.

This is where the idea of structured summaries has started to gain traction. Borrowing from other industries, particularly food labeling and financial disclosures, organizations are experimenting with standardized formats that condense key information into a form that can be scanned quickly. These formats work not because they reduce complexity, but because they organize it. They provide a consistent way to communicate intent, data sources, limitations, and oversight without forcing users to navigate dense documentation.

What becomes clear at this stage is that transparency is no longer about visibility alone. It is about coherence. Information must align across interfaces, documentation, and underlying system behavior. When it does, users begin to develop a mental model of how the system operates. When it does not, even accurate disclosures can feel unreliable.

The next challenge is turning these principles into something operational—something that can be implemented, maintained, and scaled without becoming another layer of complexity. That is where most organizations start to struggle, and where the conversation needs to move next.

Turning Transparency Into Something You Can Actually Build

Most transparency efforts don’t fail on intent. They fail in execution. It is not because organizations lack frameworks or awareness. It is because translating those ideas into systems requires decisions that cut across product design, engineering, legal, and governance at the same time. What looks straightforward in a policy document becomes complicated the moment you try to map it onto a real product.

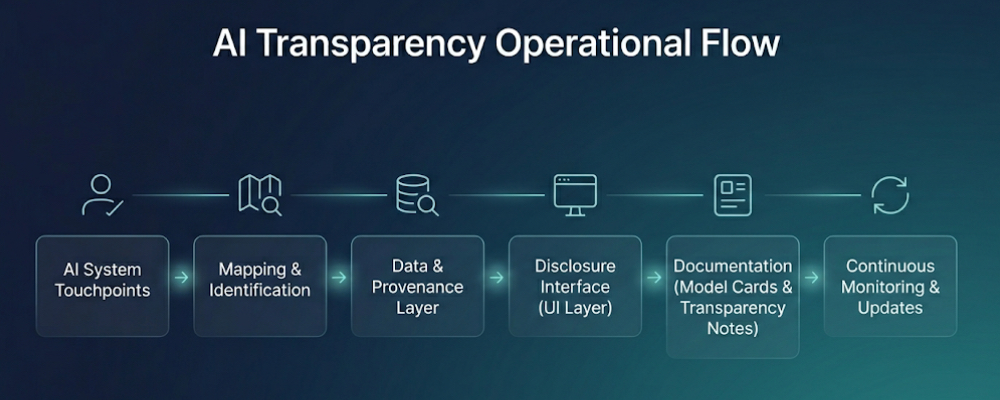

The first step is uncomfortable—and usually underestimated. It involves identifying every point where AI touches the user experience. Not just the obvious ones like chat interfaces or recommendations, but the quieter layers—ranking logic, automated moderation, decision support tools. In practice, these touchpoints are rarely documented in one place. They exist in different teams, different services, and sometimes different versions of the same system. Until they are mapped, disclosure remains guesswork.

What tends to emerge from that exercise is a more honest view of how embedded AI has become. Systems that were originally introduced as features begin to look more like infrastructure. And once that becomes clear, it changes how disclosure is approached. It stops being tied to individual components and starts being treated as something that spans the entire service.
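The mapping exercise can start as something as plain as a shared inventory. The following is a minimal sketch, assuming a hypothetical list of rows in the form (team, service, touchpoint, has_disclosure); the team and service names are invented for illustration.

```python
from collections import defaultdict

# Hypothetical inventory rows: (team, service, touchpoint, has_disclosure)
inventory = [
    ("search", "ranking-api", "result ranking", False),
    ("support", "chat-widget", "chat responses", True),
    ("trust", "moderation-svc", "automated moderation", False),
    ("growth", "recs-svc", "recommendations", True),
]


def undisclosed_by_team(rows):
    """Group touchpoints that have no disclosure yet by owning team."""
    gaps = defaultdict(list)
    for team, service, touchpoint, has_disclosure in rows:
        if not has_disclosure:
            gaps[team].append(f"{service}: {touchpoint}")
    return dict(gaps)


def coverage(rows):
    """Fraction of mapped touchpoints that already carry a disclosure."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row[3]) / len(rows)


print(undisclosed_by_team(inventory))
print(f"coverage: {coverage(inventory):.0%}")
```

In this invented example the quieter layers (ranking and moderation) are the ones without disclosure, which is the pattern the mapping step is meant to expose.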

Tracing the System Back to Its Origins

After mapping comes a more technical challenge: understanding where the system’s outputs actually come from. This is where many transparency efforts start to thin out. It is one thing to say that a system uses machine learning. It is another to describe, even at a high level, the nature of the data, the assumptions embedded in it, and the conditions under which the system was trained or tuned.

There has been a growing push toward formalizing this layer of information. Initiatives around data documentation and model reporting have tried to standardize how this is done, but the more interesting development is happening at the level of provenance. Adobe’s work on content authenticity has shown what it looks like to attach verifiable metadata to digital assets—information that travels with the content, rather than being stored separately or described after the fact[6].

This approach introduces a different way of thinking about transparency. Instead of relying entirely on explanatory text, it embeds signals directly into the content or output. When done properly, it allows systems to communicate not just that AI was involved, but how and where that involvement occurred. The technology behind it, including standards emerging from the Coalition for Content Provenance and Authenticity, is still evolving, but the direction is clear: transparency is moving closer to the data itself.

What makes this development significant is not just its technical potential, but its resilience. Text-based disclosures can be ignored, removed, or misunderstood. Metadata, when implemented consistently, becomes harder to separate from the content it describes. It also opens the door to machine-readable transparency, where systems can interpret and act on disclosure information without requiring human interpretation at every step.
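To illustrate why bound metadata is harder to separate from content than a text label, here is a simplified sketch using only Python's standard library. It is not the C2PA manifest format and not Adobe's implementation: the `build_manifest` and `verify_manifest` helpers, the `ai_role` field, and the shared secret are all assumptions for this example, and a production system would more plausibly rely on certificate-based signatures than on a shared key.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-key-not-for-production"  # hypothetical shared secret


def build_manifest(content: bytes, ai_role: str) -> dict:
    """Create a manifest bound to the content by its SHA-256 digest."""
    claims = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "ai_role": ai_role,  # e.g. "generated", "edited", "none"
    }
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Check that the manifest is untampered and still matches the content."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    digest = hashlib.sha256(content).hexdigest()
    return hmac.compare_digest(digest, manifest["claims"]["content_sha256"])


image = b"example image bytes"
manifest = build_manifest(image, ai_role="generated")
print(verify_manifest(image, manifest))            # content unchanged since signing
print(verify_manifest(image + b"edit", manifest))  # content changed after signing
```

Because the manifest is machine-readable, a downstream system can check it automatically and decide how to label the content without a person reading anything.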

Designing Disclosure Into the Interface

Even with strong documentation and provenance, transparency fails if it does not reach the user in a usable form. This is where interface design becomes critical. The question is not simply where to place a label or message, but how to present information in a way that fits the context of the interaction.

In practice, this often means moving away from static disclosures toward more dynamic elements. Small visual indicators, contextual messages, and interactive prompts have started to replace long-form explanations. A user hovering over a response to see how it was generated, or clicking an icon to understand the role of AI in a recommendation, is engaging with transparency in a way that feels natural rather than imposed.

There is a subtle balance here. Too little information, and the system feels opaque. Too much, and it becomes intrusive. The most effective implementations tend to reveal information progressively—starting with a simple signal and expanding into deeper detail only when the user chooses to explore further. This layered interaction mirrors the broader structure of disclosure, but translates it into something that can be experienced rather than read.

One of the more interesting patterns emerging from product teams is the use of what could be described as “transparency cues.” These are not full disclosures in themselves. They are signals that guide attention. A badge indicating that content has been AI-assisted, a subtle change in styling, or a short contextual note can all serve as entry points into deeper information. When combined with accessible documentation, they create a path from immediate awareness to detailed understanding.
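Progressive disclosure of this kind can be sketched as an ordered set of layers, where each interaction reveals one more. The level names and the wording in `DISCLOSURE` below are placeholders chosen for this example, not a recommended standard.

```python
DISCLOSURE = {
    "badge": "AI-assisted",
    "summary": "Drafted by an AI model and reviewed by a person on escalation.",
    "detail": (
        "Generated from real-time inputs. Known limitations: edge-case "
        "inaccuracies; output quality depends on input quality."
    ),
}

LEVELS = ["badge", "summary", "detail"]  # ordered from least to most detail


def reveal(level: str) -> list[str]:
    """Return every disclosure layer up to and including the requested one."""
    if level not in LEVELS:
        raise ValueError(f"unknown level: {level!r}")
    upto = LEVELS.index(level) + 1
    return [DISCLOSURE[name] for name in LEVELS[:upto]]


print(reveal("badge"))    # what every user sees by default
print(reveal("summary"))  # after a hover or click
```

The cue and the deeper explanation come from the same structure, so the badge always leads somewhere.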

Keeping Disclosure Alive as Systems Change

There is a tendency to treat disclosure as something that can be finalized and published. In reality, it behaves more like a living component of the system. Models are updated, data sources evolve, performance shifts over time. If disclosures do not reflect those changes, they lose relevance quickly, even if they were accurate at the moment they were written.

This is where lifecycle thinking becomes necessary. Instead of viewing documentation as static, organizations are beginning to treat it as something that must be maintained alongside the system itself. Updates to models trigger updates to disclosures. Changes in performance lead to revisions in stated limitations. New use cases require reconsideration of how information is presented to users.
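A lightweight way to enforce this is a check that runs alongside deployment and flags disclosures that have fallen behind. The sketch below assumes a disclosure record with illustrative `model_reference` and `last_reviewed` fields and a hypothetical 180-day review interval; both are assumptions for the example rather than an established rule.

```python
from datetime import date, timedelta

MAX_REVIEW_AGE = timedelta(days=180)  # hypothetical review interval


def stale_reasons(disclosure: dict, deployed_model: str, today: date) -> list[str]:
    """List the reasons a disclosure needs review; empty means up to date."""
    reasons = []
    if disclosure["model_reference"] != deployed_model:
        reasons.append(
            f"model changed: {disclosure['model_reference']} -> {deployed_model}"
        )
    last_reviewed = date.fromisoformat(disclosure["last_reviewed"])
    if today - last_reviewed > MAX_REVIEW_AGE:
        reasons.append(f"last reviewed {(today - last_reviewed).days} days ago")
    return reasons


disclosure = {"model_reference": "v2.1", "last_reviewed": "2026-03-15"}

print(stale_reasons(disclosure, deployed_model="v2.1", today=date(2026, 4, 1)))
print(stale_reasons(disclosure, deployed_model="v2.2", today=date(2026, 4, 1)))
```

A check like this could block a release or open a ticket; the point is that a model update cannot ship silently past its own disclosure.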

The operational challenge is not trivial. It requires coordination across teams that do not always share the same priorities. Engineering teams focus on performance and reliability. Legal teams focus on risk and compliance. Product teams focus on usability. Transparency sits at the intersection of all three, which means it is often no one’s primary responsibility. The organizations that manage this well tend to formalize ownership, treating disclosure as a defined function rather than an afterthought.

Once this structure is in place, transparency begins to behave less like a reactive measure and more like part of the system’s architecture. It becomes something that can be tested, refined, and improved over time, rather than something that is written once and left behind.

At that point, the conversation shifts again. The question is no longer how to implement disclosure, but how to make it credible—how to ensure that what is being communicated reflects reality, and does not create new risks in the process.

From Principle to Implementation

At some point, transparency stops being a design discussion and becomes an implementation problem.

What matters is not whether disclosure is defined, but whether it can be consistently executed across systems without relying on interpretation.

In practice, this is where many organizations begin translating governance expectations into structured system metadata: not as documentation, but as something that can be read, triggered, and enforced directly within the product layer.

Implementation reference: A minimal disclosure payload can be structured as system metadata, allowing interfaces, logs, and documentation layers to pull from a single source of truth.

    {
      "ai_disclosure": {
        "is_automated": true,
        "human_oversight": "manual_review_on_escalation",
        "model_reference": "v2.1",
        "last_reviewed": "2026-03-15",
        "data_context": "real_time_inputs",
        "known_limitations": [
          "edge-case inaccuracies",
          "dependent on input quality"
        ]
      }
    }

This type of structure is not meant for users directly. It allows systems to surface consistent disclosure across interfaces, documentation, and audit layers without fragmentation.
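As a sketch of how one record could feed several surfaces, the example below parses the payload above, checks that no field is missing, and derives both a user-facing label and an audit log line from it. The function names (`load_disclosure`, `ui_label`, `audit_line`) and the label wording are assumptions for illustration; only the field names come from the payload.

```python
import json

PAYLOAD = """
{
  "ai_disclosure": {
    "is_automated": true,
    "human_oversight": "manual_review_on_escalation",
    "model_reference": "v2.1",
    "last_reviewed": "2026-03-15",
    "data_context": "real_time_inputs",
    "known_limitations": ["edge-case inaccuracies", "dependent on input quality"]
  }
}
"""

REQUIRED = {
    "is_automated", "human_oversight", "model_reference",
    "last_reviewed", "data_context", "known_limitations",
}


def load_disclosure(raw: str) -> dict:
    """Parse the payload and fail loudly if a required field is missing."""
    record = json.loads(raw)["ai_disclosure"]
    missing = REQUIRED - set(record)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return record


def ui_label(record: dict) -> str:
    """Short user-facing text (wording is a placeholder)."""
    if not record["is_automated"]:
        return "Prepared by a person"
    oversight = record["human_oversight"].replace("_", " ")
    return f"Automated response ({oversight})"


def audit_line(record: dict) -> str:
    """Compact line for logs, built from the same record."""
    limits = "; ".join(record["known_limitations"])
    return (f"model={record['model_reference']} "
            f"reviewed={record['last_reviewed']} limits=[{limits}]")


record = load_disclosure(PAYLOAD)
print(ui_label(record))
print(audit_line(record))
```

Because both outputs are derived from the same record, the interface and the audit trail cannot disagree about which model is running or what oversight applies.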

The significance of this approach is subtle but important. Once disclosure is embedded at the system level, it no longer depends on individual teams to interpret policy. It becomes part of how the system operates, not just how it is described.

Why Transparency Breaks Inside the Organization Before It Reaches the User

There is a pattern that does not show up in most public discussions but becomes obvious the moment you look inside organizations trying to operationalize transparency. The breakdown rarely happens at the interface. It happens earlier, in the way different teams understand the system they are building.

Engineering teams tend to describe systems in terms of architecture, performance, and constraints. Legal teams focus on exposure, risk language, and defensibility. Product teams prioritize clarity and usability. Each of these perspectives is valid on its own. The problem is that they do not always align when it comes to describing the same system.

What reaches the user is often a compromise between these viewpoints. A disclosure that feels technically accurate but difficult to interpret. Or one that is easy to read but detached from how the system actually behaves. Over time, those compromises accumulate. The result is not a lack of transparency, but a version of it that feels inconsistent depending on where you look.

This misalignment becomes more visible as systems scale. A product may expose AI in multiple places, each owned by different teams. Without a shared structure for disclosure, each surface develops its own way of explaining what is happening. Small differences in wording begin to matter. Users notice when one part of a system is confident while another sounds uncertain about the same capability.

Organizations that manage this well tend to do something simple but uncommon. They treat transparency as a shared language rather than a final document. Instead of asking each team to describe its own component independently, they establish a common set of definitions—what counts as AI involvement, what qualifies as human oversight, how limitations are described. That shared foundation reduces friction before anything is published.

It also changes how updates are handled. When systems evolve, disclosures do not need to be rewritten from scratch. They can be adjusted within a structure that already reflects how the organization understands its own technology. That consistency is difficult to achieve, but once in place, it becomes one of the main reasons transparency holds up over time.
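A shared language can be made literal by defining the terms once in code and having every surface compose its wording from them. The categories and phrasing below are hypothetical; an organization would substitute its own agreed definitions.

```python
from enum import Enum


class AIInvolvement(Enum):
    NONE = "No AI was involved"
    ASSISTED = "AI assisted a person who made the final call"
    GENERATED = "AI produced this output"


class Oversight(Enum):
    NONE = "No human review"
    ON_ESCALATION = "A person reviews this when it is escalated"
    ALWAYS = "A person reviews this before it is shown"


def describe(involvement: AIInvolvement, oversight: Oversight) -> str:
    """Build disclosure wording from the shared definitions only."""
    return f"{involvement.value}. {oversight.value}."


# Two surfaces owned by different teams reuse the same terms,
# so their wording cannot drift apart.
chat_surface = describe(AIInvolvement.GENERATED, Oversight.ON_ESCALATION)
email_surface = describe(AIInvolvement.GENERATED, Oversight.ON_ESCALATION)
print(chat_surface)
```

When a definition changes, it changes in one place, and every surface that uses it is updated together.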

From the outside, users do not see this alignment directly. What they notice is its absence. Systems that explain themselves differently depending on where you look tend to feel unreliable, even if each individual explanation is technically correct. Bringing that internal layer into focus is often what separates disclosure that works from disclosure that only exists.

What a Credible Disclosure Actually Looks Like

By the time organizations reach this stage, something is already in place. There is a label, a statement, a page explaining how the system works. The problem is not absence. It is credibility. Users are becoming more sensitive to the difference between information that explains and information that reassures without substance. That distinction is subtle, but it is becoming easier to spot.

A credible disclosure does not try to say everything. It focuses on the few elements that determine how a system should be understood and used. What the system is designed to do. What it should not be used for. Where the data comes from in broad terms. Where human judgment still plays a role. And where the system is known to struggle. When those elements are present and aligned, the disclosure begins to feel grounded rather than defensive.

| Component | Why It Matters | What Strong Implementation Looks Like |
|---|---|---|
| Intent & Scope | Prevents misuse and overreliance | Clear boundaries on what the system is designed for and where it should not be applied |
| Data Provenance | Shapes trust in outputs | High-level explanation of training sources and any constraints or exclusions |
| Human Oversight | Signals accountability | Specific points where human review is applied or where escalation is possible |
| Limitations & Bias | Builds realistic expectations | Concrete examples of failure modes rather than abstract disclaimers |

What separates stronger implementations is not the elements themselves, but how they’re written. There is a difference between saying “the system may produce inaccurate results” and explaining where and why inaccuracies tend to occur. The latter requires a deeper understanding of the system, and it shows. It also changes how users behave. When people understand limitations in concrete terms, they adjust their expectations rather than abandoning trust altogether.

The Rise of Structured Summaries

One of the more practical developments in this space is the emergence of standardized summaries. Instead of long narrative disclosures, some organizations are experimenting with compact formats that resemble labels—short, structured sections that present key information in a consistent order. The idea is not new, but its application to AI is still taking shape.

Twilio’s early work on “AI nutrition facts” is often cited in this context, not because it solved the problem completely, but because it demonstrated a different way of presenting information. By organizing disclosure into defined categories, it allowed users to scan and compare systems without reading through extensive documentation[7].

The advantage of these formats is clarity. The risk is oversimplification. If the structure becomes too rigid, it can strip away nuance. The challenge is to preserve enough detail to be meaningful while keeping the format accessible. When that balance is achieved, structured summaries begin to function as entry points rather than endpoints—guiding users toward deeper information without overwhelming them at the start.
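A structured summary can be sketched as a renderer that always prints the same categories in the same order and refuses to print a label with gaps. The format below is a hypothetical illustration, not Twilio's actual label; the category names reuse the four components described earlier in this article, and the example system is invented.

```python
FIELD_ORDER = [
    "Intent & Scope",
    "Data Provenance",
    "Human Oversight",
    "Limitations & Bias",
]


def render_label(title: str, facts: dict) -> str:
    """Render a fixed-order summary; refuse to print a label with gaps."""
    missing = [name for name in FIELD_ORDER if not facts.get(name)]
    if missing:
        raise ValueError(f"incomplete label, missing: {missing}")
    width = max(len(name) for name in FIELD_ORDER)
    lines = [title, "-" * len(title)]
    for name in FIELD_ORDER:
        lines.append(f"{name.ljust(width)} | {facts[name]}")
    return "\n".join(lines)


facts = {
    "Intent & Scope": "Drafts replies to billing questions; not for legal advice",
    "Data Provenance": "Public help-center articles; no customer messages",
    "Human Oversight": "Agent review on escalation",
    "Limitations & Bias": "Less reliable for accounts with custom contracts",
}

print(render_label("Support Assistant", facts))
```

The fixed order is what makes two systems comparable at a glance; the refusal to render an incomplete label is what keeps the format from becoming decoration.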

Observation from practice: The most effective disclosures I have seen are not the longest. They are the ones that answer the right questions first, then allow the reader to go deeper if needed. Length does not build trust. Precision does.

When Transparency Starts to Work Against You

There’s a point where more disclosure stops helping. This is where the so-called transparency paradox begins to show up. Users say they want more information, but when presented with it, they often disengage or misinterpret what they see. In some cases, detailed disclosures can even reduce trust if they highlight uncertainties without explaining their impact.

This is not just a communication issue. It is tied to how people interpret risk. A long list of limitations without context can make a system appear unstable, even if those limitations are well understood and managed internally. On the other hand, vague statements that avoid detail can create suspicion. Navigating this tension requires more than careful wording. It requires judgment about what information is meaningful in a given context.

Research coming out of academic and policy circles has started to question whether current disclosure practices are drifting toward what some describe as “transparency washing”—the appearance of openness without substantive insight. The Stanford-led transparency index, which has examined how major AI providers communicate their systems, reflects this concern[5]. It highlights a gap between the volume of information released and the usefulness of that information for understanding real-world behavior.

The implication is uncomfortable, but hard to ignore. Transparency is not automatically credible simply because it exists. It becomes credible when it aligns with observable behavior. If a system behaves in ways that contradict its disclosures, no amount of documentation will repair that gap.

Balancing Openness With Real Constraints

There is another layer to this conversation that rarely appears in public-facing discussions. Not all information can be disclosed freely. Organizations operate within constraints—intellectual property, security considerations, legal privilege. The challenge is to meet transparency expectations without exposing sensitive details that could create new risks.

This tension has started to shape how disclosures are written. Instead of attempting full openness, organizations are focusing on meaningful openness—sharing enough to inform users and regulators without revealing information that could be misused or that undermines competitive position. This is not an easy balance to strike, and it often requires close coordination between technical and legal teams.

What becomes clear at this stage is that transparency is not a single decision. It is a series of trade-offs. What to include, what to simplify, what to hold back. Those decisions are rarely perfect, but over time they define how a system is perceived. And in a market where trust is becoming a differentiator, perception matters as much as capability.

The final layer is where transparency moves from obligation to advantage—where it begins to shape not just compliance outcomes, but how a product is positioned and adopted. That is where the conversation is heading next.

When Transparency Becomes a Product Decision

At some point, transparency stops behaving like a requirement and starts behaving like a signal. Not the kind that sits in documentation, but the kind that influences how a system is judged before anyone reads a single line about it. This shift is already visible in enterprise procurement. Buyers are asking not just what a system can do, but how clearly it explains itself. In competitive settings, that difference is beginning to matter.

What is interesting is that this does not reward the most verbose organizations. It tends to favor those that have made deliberate choices about how transparency is expressed. Systems that communicate clearly at the point of interaction, that maintain consistency between what is said and what is observed, and that acknowledge limitations without hedging, tend to be treated differently. They are not assumed to be perfect. They are assumed to be manageable.

That distinction carries weight in environments where risk is part of everyday decision-making. It is one thing to deploy a system that is powerful but opaque. It is another to deploy one that can be interrogated, understood, and adjusted when needed. Transparency, in that sense, becomes less about reassurance and more about control.

The Quiet Role of Continuous Disclosure

There is a development taking shape beneath the surface of most discussions on this topic. It does not get much attention yet, but it is likely to become central. Disclosure is beginning to move from static statements toward continuous signals. Instead of describing a system at a single point in time, organizations are exploring ways to reflect how it behaves over time.

This is partly a response to how AI systems evolve. Models are updated, retrained, fine-tuned. Data shifts. Performance drifts. A disclosure written six months ago may still be technically accurate, but no longer representative. Static transparency struggles to keep up with dynamic systems.

What is emerging instead is a more fluid approach. Indicators that reflect current system status. Updates tied to model changes. Feedback loops that capture where outputs diverge from expectations. These are not yet standardized, but they point toward a future where transparency is less about documentation and more about ongoing visibility.
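Since these signals are not yet standardized, the following is only one possible shape: a rolling monitor that records whether recent outputs diverged from expectations and exposes a coarse status that a disclosure layer could surface. The `DivergenceMonitor` class, its window size, and its threshold are assumptions for this sketch.

```python
from collections import deque


class DivergenceMonitor:
    """Track recent feedback and expose a coarse status for disclosure."""

    def __init__(self, window: int = 100, warn_at: float = 0.10):
        self.events = deque(maxlen=window)  # True means the output diverged
        self.warn_at = warn_at              # hypothetical threshold

    def record(self, diverged: bool) -> None:
        self.events.append(diverged)

    def rate(self) -> float:
        if not self.events:
            return 0.0
        return sum(self.events) / len(self.events)

    def status(self) -> str:
        if not self.events:
            return "no recent data"
        return "degraded" if self.rate() >= self.warn_at else "normal"


monitor = DivergenceMonitor(window=10, warn_at=0.25)
for diverged in [False, False, True, False, False]:
    monitor.record(diverged)
print(monitor.status(), f"{monitor.rate():.0%}")
```

The status string is deliberately coarse. What users see is a current indicator, while the underlying rate stays available for audit.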

Field note: In organizations that are further along, disclosure is beginning to look less like a page and more like a layer—something that updates quietly in the background, but can be surfaced at any time when needed.

Learning From What Has Already Been Built

Some of the most practical lessons in this space are coming from implementations that were not originally designed as compliance tools. Adobe’s work on content authenticity, for example, did not begin as a regulatory response. It was an attempt to address trust in digital media. By attaching provenance data directly to content, it created a way to verify origin and modification history without relying solely on external explanations.

That approach has implications beyond media. It shows that transparency can be embedded into the fabric of a system rather than layered on top of it. When provenance becomes part of the output itself, disclosure is no longer something users have to seek out. It becomes something they encounter naturally as they interact with the system.

Another useful reference point comes from enterprise documentation practices. Microsoft’s transparency notes have shown how technical disclosure can be structured in a way that remains accessible without losing depth. They do not attempt to answer every possible question, but they provide enough clarity to support decision-making. That balance is difficult to achieve, and it is one of the reasons these documents are often used as benchmarks in internal discussions.

There is also value in looking at where things are not working. The Stanford-led efforts to evaluate transparency across major AI providers highlight a recurring issue: information exists, but it is fragmented, inconsistent, or difficult to interpret. The gap is not always in disclosure itself, but in how that disclosure is organized and connected to actual system behavior.

Avoiding the Illusion of Openness

One of the risks that has become more visible over time is the tendency to equate volume with openness. Detailed reports, long lists of principles, extensive documentation—all of these can create the impression of transparency without necessarily improving understanding. When information is not structured around how people actually use a system, it becomes difficult to navigate, and easier to ignore.

The alternative is not minimalism. It is intentional structure. Information should be layered, connected, and accessible in context. A user encountering a recommendation should not need to search for a separate document to understand why it appeared. A stakeholder evaluating a system should not need to reconcile conflicting descriptions across different sources.

When those conditions are met, transparency begins to feel less like an obligation and more like part of the system’s reliability. It reduces friction. It shortens decision cycles. It makes it easier to explain and defend how a system operates, both internally and externally.
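One way to picture disclosure that is layered and available in context is as a small structure attached to each output, so the explanation travels with the recommendation instead of living in a separate document. The sketch below is an illustration under assumptions: the `LayeredDisclosure` class, its three layers, and the `view` method are hypothetical names, not part of any framework cited here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayeredDisclosure:
    """Hypothetical three-layer disclosure attached to one system output."""
    label: str          # layer 0: short signal shown at the point of interaction
    explanation: str    # layer 1: why this output appeared, in plain language
    documentation: str  # layer 2: pointer to the technical notes for this system

    def view(self, depth: int = 0) -> list[str]:
        """Return the layers up to and including `depth`, shallowest first."""
        layers = [self.label, self.explanation, self.documentation]
        depth = max(0, min(depth, len(layers) - 1))  # clamp out-of-range requests
        return layers[: depth + 1]
```

By default a caller gets only the surface label. Deeper layers are returned only when a user or reviewer asks for them, which keeps the interface light without hiding the detail.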

Where This Is Heading

There is a broader shift taking place that is easy to miss when focusing on individual frameworks or case studies. Transparency is moving closer to execution. It is becoming tied to how systems are built, monitored, and updated, rather than how they are described after the fact.

This has implications beyond compliance. It affects how products are designed, how teams collaborate, and how organizations position themselves in a market that is becoming more sensitive to how AI is used. In that environment, transparency is not just about avoiding risk. It is about shaping how systems are understood and, ultimately, whether they are trusted.

That shift does not require perfection. It requires consistency. Systems that explain themselves clearly, that evolve their disclosures as they evolve their capabilities, and that align what they say with what they do, tend to stand out. Not because they claim to be more responsible, but because they make that responsibility visible in a way that can be tested.

And that is where transparency begins to move from expectation to advantage. Not as a statement, but as something that can be experienced, evaluated, and relied on.

Where It Lands in Practice

There’s a tendency to look for a clean end point in discussions like this—a moment where transparency is “done” and can be treated as complete. That moment does not really exist. What does exist is a point where disclosure becomes dependable. Where it stops lagging behind the system and starts moving with it.

The organizations that reach that point rarely describe it as a breakthrough. It usually comes from a series of adjustments. Mapping systems more carefully. Tightening how documentation is written. Aligning what appears in the interface with what exists in technical notes. Revisiting disclosures after model updates instead of leaving them untouched. None of these changes are dramatic on their own. Together, they create something that feels coherent.

What becomes noticeable at that stage is how much easier it is to answer questions. Not just from regulators or auditors, but from users, partners, and internal teams. When transparency is structured properly, it reduces the need for interpretation. It replaces vague explanations with something closer to shared understanding.

A Working Baseline for Day-to-Day Use

In practical terms, most teams end up working with a baseline set of checks. Not as a formal checklist pinned to a document, but as recurring questions that guide how systems are reviewed before and after deployment.

- Can a user tell when AI is shaping what they see?

- Is it clear what the system is meant to do, and what it is not meant to do?

- Are limitations described in a way that reflects real behavior, not generic caution?

- Is there a visible path to human review or escalation where it matters?

- Do disclosures still reflect the current state of the system?

These questions are simple, but they tend to expose gaps quickly. A system may perform well technically and still fail on clarity. Or it may communicate well at the surface while deeper documentation lags behind. The value of this kind of baseline is not in perfection. It is in consistency. Running these checks regularly prevents disclosure from drifting out of sync with reality.
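For teams that want to run these questions on a schedule, they can be recorded as a small review object and checked against the deployed system. This is a sketch under assumptions: the `DisclosureReview` fields, the `review_gaps` function, and the use of a version string to detect stale disclosures are all hypothetical choices, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisclosureReview:
    """Hypothetical answers to the five baseline questions for one system."""
    ai_use_visible: bool          # can a user tell when AI shapes what they see?
    purpose_stated: bool          # intended and non-intended uses are clear
    limitations_specific: bool    # limitations reflect real behavior
    escalation_path: bool         # a path to human review exists where it matters
    disclosed_model_version: str  # version the disclosure was written against


def review_gaps(review: DisclosureReview, deployed_model_version: str) -> list[str]:
    """Return the names of the baseline checks that currently fail."""
    checks = {
        "ai_use_visible": review.ai_use_visible,
        "purpose_stated": review.purpose_stated,
        "limitations_specific": review.limitations_specific,
        "escalation_path": review.escalation_path,
        # A disclosure written for an older version is treated as out of date.
        "disclosure_current": review.disclosed_model_version == deployed_model_version,
    }
    return [name for name, passed in checks.items() if not passed]
```

Comparing version strings is a deliberately crude proxy for the last question. It catches a disclosure that was never revisited after a model update, but it cannot tell whether the wording still describes real behavior, which remains a human judgment.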

The Difference People Notice

From the outside, the difference between strong and weak transparency is rarely described in technical terms. Users tend to express it more directly. Some systems feel predictable. Others feel uncertain. That perception often comes down to whether the system explains itself clearly at the moments where decisions are being made.

In environments where AI is used heavily, this difference compounds over time. Teams working with systems that communicate clearly spend less time second-guessing outputs. They escalate issues earlier. They build internal confidence faster. The effect is not dramatic in a single interaction, but it becomes visible across repeated use.

That is where transparency begins to intersect with performance in a broader sense. Not performance as measured in benchmarks, but performance as experienced in real conditions. Systems that are understood tend to be used more effectively. Systems that are not understood tend to be used cautiously or avoided altogether.

What Holds Up Over Time

There is still a lot of movement in this space. Standards are evolving. Regulatory expectations are tightening. New techniques for embedding provenance and tracking system behavior are emerging. It would be easy to treat transparency as something that needs to be constantly reinvented to keep up.

In practice, what holds up is more stable. Clarity over volume. Alignment over appearance. Consistency over one-off improvements. Systems that follow those principles tend to adapt more easily as requirements change, because the underlying structure does not need to be rebuilt each time.

The more difficult part is discipline. Maintaining transparency over time requires attention, even when there is no immediate pressure to update or revise. It requires treating disclosure as something that evolves alongside the system, not something that is finalized and set aside.

Conclusion

In most organizations, transparency does not fail because people disagree with it. It fails because it is treated as secondary to everything else. When it becomes part of how systems are built and maintained, it stops feeling like an extra step and starts behaving like a natural property of the system itself.

That is where the conversation has settled for now. Not around whether transparency is necessary, but around how well it is executed. The difference is no longer theoretical. It shows up in how systems are trusted, how they are adopted, and how they hold up when examined closely.

Once that difference becomes visible, it doesn’t go unnoticed.

For teams moving beyond high-level transparency principles into real implementation, a structured disclosure framework is provided below.

It brings together the core elements required to document AI systems clearly, consistently, and in a way that stands up to internal and external scrutiny.

AI Transparency Disclosure Framework (PDF)

Includes a complete disclosure template, layered transparency model, system-level documentation structure, and an implementation appendix with a JSON reference for engineering teams.

References

- European Commission. Artificial Intelligence Act (EU AI Act). https://eur-lex.europa.eu/eli/reg/2024/1689/oj

- National Telecommunications and Information Administration (NTIA). AI Accountability Policy Report (NTIA, 2023). https://www.ntia.gov/report/2023/ai-accountability-policy-report

- National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0). https://www.nist.gov/itl/ai-risk-management-framework

- Organisation for Economic Co-operation and Development (OECD). OECD AI Principles. https://oecd.ai/en/ai-principles

- Stanford University. Foundation Model Transparency Index. https://crfm.stanford.edu/fmti/

- Adobe & Coalition for Content Provenance and Authenticity (C2PA). Content Authenticity Initiative. https://contentauthenticity.org/

- Twilio. AI Nutrition Facts Initiative. https://www.twilio.com/en-us/blog/ai-nutrition-facts

Frequently Asked Questions About AI Transparency and Disclosure

What is the difference between AI transparency and AI disclosure?

Transparency is the broader principle—making AI systems understandable across their lifecycle. Disclosure is the practical expression of that principle, where specific information is communicated to users, stakeholders, or regulators at defined points of interaction. In practice, disclosure is how transparency becomes visible and usable.

When is it actually necessary to disclose the use of AI?

Disclosure becomes necessary when AI materially affects authenticity, decision-making, or user trust. This includes situations such as AI-generated content, automated recommendations, financial or medical outputs, and any system where users might reasonably assume human involvement. Not every use of AI requires disclosure—only those where the impact is meaningful or potentially misleading.

What makes an AI disclosure statement credible?

Credibility comes from alignment. The disclosure must reflect how the system actually behaves, not just how it is intended to work. Clear boundaries, realistic limitations, and visible points of human oversight tend to build trust. Vague or overly defensive language usually has the opposite effect, even if it meets formal requirements.

How do organizations avoid overwhelming users with too much information?

The most effective approach is layered disclosure. Start with simple, visible signals at the point of interaction, then allow users to access deeper levels of detail only when needed. This reduces cognitive load while still making full transparency available. Overloading users at the surface level often leads to disengagement rather than understanding.

Can transparency create legal or business risks for organizations?

Yes, if handled carelessly. Disclosures that reveal sensitive implementation details or contradict internal documentation can create exposure. The balance lies in meaningful transparency—providing enough information to inform users and meet regulatory expectations without disclosing proprietary or legally sensitive material. This is why many organizations involve both technical and legal teams in drafting disclosures.
