Your model passes validation. Accuracy looks good. Performance is stable in staging. The system behaves exactly as expected under normal conditions. Then a small, almost invisible input change causes a completely different output. Or a malicious user figures out how to bypass safeguards with a carefully crafted prompt. Or a dataset is subtly poisoned upstream, degrading performance months after deployment.

None of these failures show up in standard testing. Yet each one represents a real operational, legal, and reputational risk. This is the gap that adversarial testing is designed to expose, and it is why AI red teaming is quickly becoming a non-negotiable practice for organizations deploying serious AI systems.

For years, robustness testing was treated as a niche concern, mostly discussed in academic papers or security-focused teams. That era is over. Under the EU AI Act, organizations are now expected to demonstrate that high-risk AI systems are sufficiently robust, resilient, and secure against foreseeable misuse and attacks. In other words, it is no longer enough for a model to work well under ideal conditions. It must be stress-tested against hostile or unexpected behavior.

AI red teaming refers to the structured practice of simulating attacks against an AI system in order to uncover weaknesses before they are exploited in the real world. This includes adversarial inputs designed to fool models, poisoning attempts during training, extraction attacks, and, in the case of large language models, prompt injection and jailbreak techniques. The goal is not to break the system for its own sake, but to understand how it fails and how those failures can be mitigated.

From a regulatory perspective, AI red teaming is directly connected to EU AI Act compliance. Article 15 requires high-risk AI systems to achieve an appropriate level of accuracy, robustness, and cybersecurity throughout their lifecycle. For general-purpose AI models with systemic risk, documented adversarial testing is already emerging as an expected control. Regulators are moving away from abstract assurances and toward evidence that systems have been tested against realistic threat scenarios.

From an engineering perspective, the benefits go beyond compliance. Teams that adopt adversarial testing early tend to build models that are more reliable in production, degrade more gracefully under distribution shifts, and surface edge cases before users or attackers do. Red teaming forces engineers to think offensively, which often leads to better defensive design choices.

It is important to distinguish adversarial testing from traditional model evaluation. Standard testing focuses on performance metrics using clean, representative data. Red teaming deliberately explores worst-case conditions. It asks how the model behaves when assumptions break, when inputs are manipulated, or when the system is used in ways it was not originally designed for. This shift in mindset is critical for both robustness and regulatory defensibility.

This guide is written for machine learning engineers, technical leads, product managers, and governance teams who need a practical understanding of AI red teaming. Rather than offering high-level theory, it focuses on how adversarial testing works in real systems, which tools are commonly used, how to run a basic red team exercise, and how to document the results in a way that supports EU AI Act compliance.

In the sections that follow, we will break down what adversarial testing really means, why it is different from standard testing, and how organizations can implement red teaming without turning it into a costly or academic exercise. We will also connect these practices directly to regulatory expectations, showing how robustness testing fits into technical documentation and post-market monitoring obligations.

AI red teaming is not about paranoia. It is about realism. As AI systems take on more consequential roles, the cost of untested assumptions increases. Understanding how your model fails is one of the most effective ways to ensure that it can be trusted to operate safely, lawfully, and reliably in the real world.

What Is Adversarial Testing (Red Teaming) and Why It Is Essential for Robustness

Adversarial testing, often referred to as AI red teaming, is the deliberate practice of probing an AI system for weaknesses by simulating hostile or unexpected conditions. Instead of asking how well a model performs on typical inputs, red teaming asks how the model behaves when assumptions are violated, inputs are manipulated, or the system is intentionally stressed beyond normal operating boundaries.

In traditional machine learning workflows, testing is largely defensive. Engineers validate performance on held-out datasets, check accuracy metrics, and confirm that the model behaves consistently across known scenarios. These checks are necessary, but they assume that future inputs will resemble past data. Adversarial testing rejects that assumption and instead models the behavior of attackers, malicious users, or simply unpredictable environments.

At its core, AI red teaming is about understanding failure modes. Every model has blind spots, whether they arise from data bias, feature sensitivity, model architecture, or deployment context. Adversarial testing exposes those blind spots by intentionally crafting inputs or conditions that exploit them. This makes weaknesses visible early, when mitigation is still possible and relatively inexpensive.

There are several broad categories of adversarial threats that red teams typically explore. One of the most well-known is evasion, where carefully designed input perturbations cause a model to produce incorrect outputs while remaining indistinguishable to humans. In image classification, this might involve pixel-level changes that flip a prediction. In tabular models, small feature shifts can push outputs across decision thresholds.

Another category is data poisoning, which targets the training or fine-tuning process itself. By injecting malicious or biased data into the training pipeline, an attacker can influence model behavior in subtle ways that only become apparent after deployment. Poisoning attacks are particularly relevant in systems that rely on continuous learning or external data sources.

Model extraction and inference attacks focus on what can be learned about the model from its outputs. Repeated queries can sometimes reveal sensitive information about training data, model parameters, or decision boundaries. These risks are often overlooked in standard evaluation, yet they can have serious implications for confidentiality and intellectual property.

For large language models and other generative systems, prompt-based attacks introduce an additional dimension. Prompt injection, jailbreaks, and indirect instruction attacks exploit the model’s flexibility to bypass safeguards or produce harmful outputs. These issues are not theoretical. They regularly appear in production systems and can be difficult to detect without systematic adversarial testing.

From a robustness perspective, the value of adversarial testing lies in its ability to surface worst-case behavior. Robustness is not about eliminating all failures. It is about ensuring that failures are bounded, understood, and mitigated. A robust model degrades predictably under stress rather than failing catastrophically or silently.

This is where AI red teaming becomes directly relevant to EU AI Act compliance. Article 15 requires that high-risk AI systems achieve an appropriate level of robustness and resilience against errors, faults, and attacks. Regulators are unlikely to accept generic claims of robustness without evidence. Adversarial testing provides a concrete method for generating that evidence.

Importantly, the EU AI Act does not prescribe specific attack techniques or tools. Instead, it expects organizations to adopt measures proportionate to the system’s risk profile and intended use. For high-risk systems, this implies structured testing against foreseeable misuse and malicious behavior. Red teaming offers a defensible way to demonstrate that such risks were actively considered and addressed.

Beyond regulatory obligations, adversarial testing has clear business value. Models that are tested under hostile conditions tend to be more reliable in production. They are less likely to fail unexpectedly, and when they do fail, the failure modes are already documented. This reduces incident response time and improves confidence among stakeholders.

AI red teaming also encourages better engineering habits. It forces teams to think explicitly about threat models, assumptions, and system boundaries. This mindset often reveals design improvements that would not emerge from accuracy-driven evaluation alone. Over time, red teaming becomes less of a separate activity and more of an integrated part of responsible model development.

Understanding what adversarial testing is and why it matters is the foundation. The next step is understanding how it differs from standard testing practices, and why that distinction matters for both robustness and compliance. That distinction is where many organizations still struggle.

The Difference Between Standard Testing and Adversarial Testing for Compliance

Most AI teams already test their models extensively. They evaluate accuracy on validation sets, measure performance across key subgroups, and track metrics over time. These practices are essential, but they are designed to answer a narrow question: how well does the model perform under expected conditions? Adversarial testing answers a very different question: how does the model behave when conditions are intentionally hostile or abnormal?

Standard testing assumes that inputs are representative of the data the model was trained on. Even when fairness or robustness metrics are included, they are typically computed using clean data drawn from the same distribution. This approach is effective for optimizing performance, but it leaves a gap when models encounter manipulation, misuse, or edge cases that fall outside normal patterns.

Adversarial testing fills that gap by deliberately constructing worst-case scenarios. Instead of passively observing model behavior, red teams actively search for inputs or situations that cause failures. These scenarios may be unlikely in benign settings, but they are often realistic in environments where users, competitors, or attackers have incentives to exploit the system.

From a compliance perspective, this distinction is critical. The EU AI Act does not ask whether a model performs well on average. It asks whether appropriate measures were taken to ensure accuracy, robustness, and cybersecurity in light of foreseeable risks. Demonstrating this requires more than standard performance reports. It requires evidence that the system was tested against credible threat scenarios.

Standard testing is primarily confirmatory. It confirms that the model meets predefined performance thresholds. Adversarial testing is exploratory. It seeks to discover unknown weaknesses. This exploratory nature is what makes AI red teaming especially valuable for regulatory defensibility, because it shows that risks were actively investigated rather than assumed away.

Another key difference lies in documentation. Standard testing results are often summarized in dashboards or experiment tracking tools. While useful internally, these artifacts may not clearly explain why specific risks were addressed or how mitigation decisions were made. Adversarial testing, when conducted properly, produces structured findings that can be linked directly to risk management decisions and technical documentation.

For high-risk AI systems, this linkage matters. Article 15 of the EU AI Act expects organizations to demonstrate that robustness measures are appropriate to the system’s intended purpose. That demonstration typically involves explaining which risks were considered, how they were tested, and what actions were taken in response. Adversarial testing creates a clear narrative that connects threat identification to mitigation.

The difference also extends into post-deployment obligations. Standard testing often ends at launch, with monitoring focused on performance drift. Adversarial testing supports ongoing robustness by informing what signals should be monitored and which failure modes deserve particular attention. In this way, red teaming strengthens post-market monitoring rather than replacing it.

It is worth emphasizing that adversarial testing does not replace standard testing. The two serve complementary roles. Standard testing ensures baseline quality and fairness. Adversarial testing challenges those assumptions under stress. Together, they provide a more complete picture of system behavior across realistic operating conditions.

For organizations preparing for EU AI Act compliance, the implication is clear. Relying solely on traditional evaluation methods leaves important questions unanswered. AI red teaming provides a structured way to address those questions and to demonstrate that robustness was treated as an engineering and governance priority, not an afterthought.

Understanding this distinction sets the stage for action. The next step is moving from theory to practice by setting up a red team exercise that fits the system, the risks, and the organization’s capabilities.

How to Set Up a Practical AI Red Team Exercise

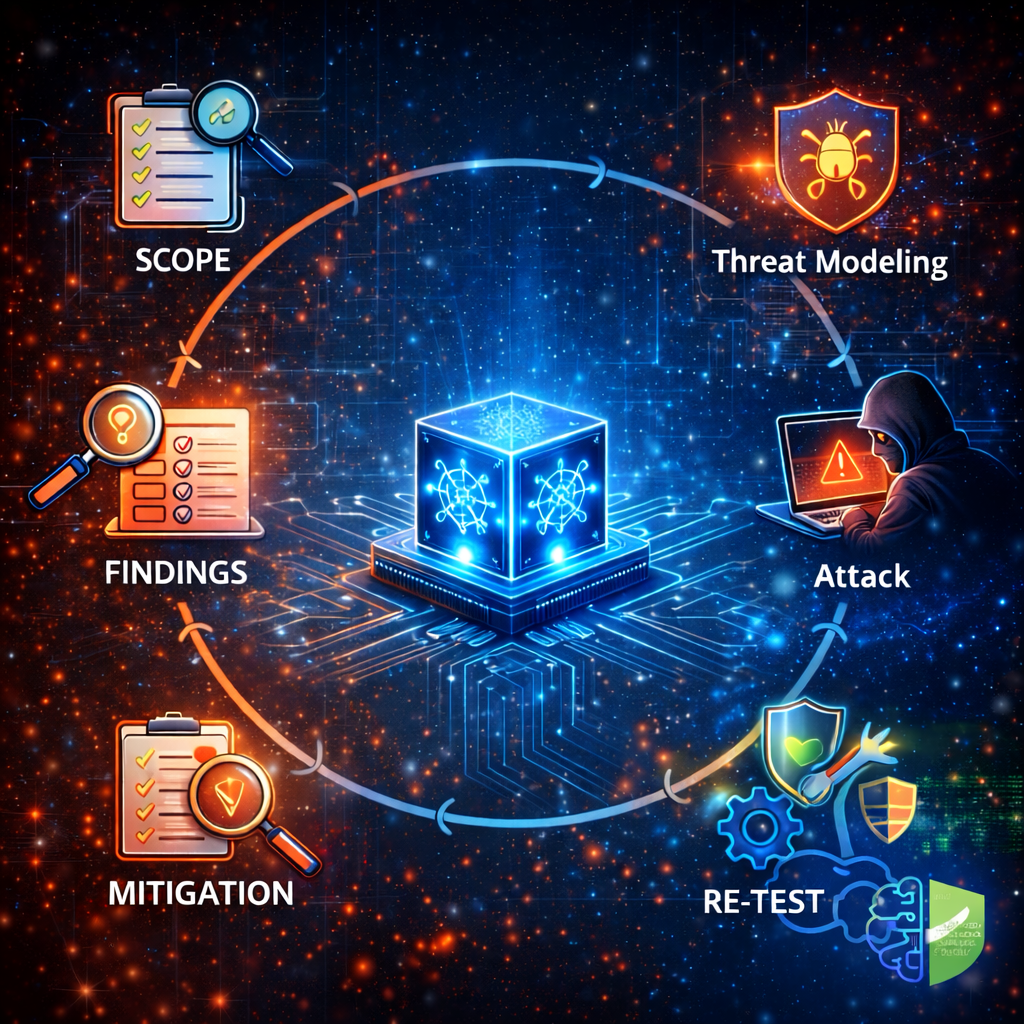

Running an effective AI red team exercise does not require a large security department or months of preparation. What it requires is clarity about scope, realistic threat modeling, and disciplined documentation. The goal is not to test everything at once, but to test the right things in a structured and repeatable way.

The first step is scoping. Before any adversarial testing begins, the team must define what system is being tested and why. This includes identifying the model type, its intended use, and its risk classification. A computer vision model used for access control presents very different risks than a language model assisting customer support. Clear scope prevents wasted effort and ensures the results are meaningful.

Scoping should also include the regulatory context. For high-risk AI systems, adversarial testing should focus on failures that could affect safety, fundamental rights, or legal outcomes. This alignment ensures that AI red teaming supports EU AI Act compliance rather than becoming a purely technical exercise disconnected from governance requirements.

The second step is assembling the red team. In smaller organizations, this may simply be a subset of engineers who were not directly involved in building the model. The key is independence of perspective. Red team members should be encouraged to think like adversaries, not maintainers. In higher-risk settings, external experts can provide valuable insight, especially for specialized attack techniques.

Once the team is defined, threat modeling becomes the foundation of the exercise. Threat modeling involves identifying the most relevant attack vectors based on the system’s architecture and deployment environment. For traditional machine learning models, this may include evasion attacks, data poisoning, or model extraction. For large language models, prompt injection and instruction hijacking are often more relevant.

Established frameworks can help structure this process. Mapping threats using recognized taxonomies ensures coverage without guesswork. The output of this step should be a short, explicit list of attack scenarios that the red team will attempt to execute. This list becomes a critical artifact for both engineering review and compliance documentation.

The next step is attack generation. This is where adversarial testing becomes hands-on. Using established libraries, the red team generates adversarial inputs tailored to the chosen threat scenarios. For example, gradient-based attacks can be applied to image or tabular models to test sensitivity to small perturbations. For language models, carefully designed prompts can be used to probe guardrails and content controls.

Attack execution should be systematic rather than ad hoc. Each attack attempt should be logged with details about the method used, the parameters chosen, and the observed model behavior. This discipline ensures that findings are reproducible and that results can be meaningfully compared across iterations.

Evaluation comes next. Red teaming is not about anecdotal failures. It is about measuring impact. This may include metrics such as attack success rates, confidence shifts, error amplification, or policy violations. The objective is to quantify how vulnerable the system is under specific adversarial conditions and to understand which weaknesses are most severe.

Mitigation is where the exercise delivers its greatest value. Findings should be reviewed collaboratively by engineering and governance stakeholders. Some vulnerabilities may be addressed through model-level defenses, such as adversarial training or input validation. Others may require process changes, monitoring controls, or usage restrictions. The chosen mitigations should be proportionate to the risk identified.

Red teaming is not a one-time activity. After mitigations are implemented, the exercise should be repeated to assess their effectiveness. This iterative approach mirrors how attackers evolve and aligns closely with the EU AI Act’s emphasis on continuous risk management throughout the system lifecycle.

To keep this process manageable, many organizations formalize it using a simple checklist or playbook. A typical red team workflow includes defining scope, selecting threats, executing attacks, evaluating results, documenting findings, and tracking remediation. Treating this workflow as a repeatable process is what transforms adversarial testing from an experiment into a governance control.

When conducted with discipline, AI red teaming becomes more than a security exercise. It becomes a practical demonstration that robustness risks were identified, tested, and addressed. This evidence is precisely what regulators and internal reviewers expect to see when assessing whether a system meets robustness obligations under the EU AI Act.

With a basic red team process in place, the next question becomes which tools are best suited to support adversarial testing at scale. Choosing the right tooling can significantly reduce effort while improving coverage and consistency.

Tools and Frameworks for Adversarial Testing and AI Red Teaming

Effective AI red teaming depends not only on mindset and process, but also on the tools used to generate, manage, and evaluate adversarial attacks. Fortunately, a mature ecosystem of adversarial testing frameworks has emerged, making it possible for engineering teams to run meaningful red team exercises without building everything from scratch.

When selecting tools, the primary consideration should be alignment with the system being tested and the risks being assessed. No single framework is ideal for every use case. Some tools excel at evasion attacks on vision models, while others are better suited for language models or tabular data. For EU AI Act compliance, tools that support repeatability, documentation, and mitigation tracking are particularly valuable.

One of the most widely used frameworks for adversarial testing is the Adversarial Robustness Toolbox. It supports a broad range of attack types, including evasion, poisoning, extraction, and inference attacks, across multiple machine learning frameworks. This breadth makes it especially useful for organizations that need a single toolkit to support compliance-focused robustness testing across different model types.

Foolbox is another popular option, particularly for benchmarking robustness against common evasion attacks. It provides a clean interface for applying gradient-based and decision-based attacks and is often used to compare model sensitivity under standardized conditions. While it is less comprehensive than some alternatives, its simplicity makes it effective for focused robustness evaluations.

CleverHans is one of the earliest adversarial machine learning libraries and remains relevant for classic attack and defense techniques. Although its development pace has slowed, it is still useful for understanding foundational attack methods and for educational or experimental settings. Teams using it in production contexts should ensure that its capabilities meet current threat models.

For teams working primarily in PyTorch, specialized libraries offer tighter integration. These tools streamline adversarial testing within existing training and evaluation pipelines, reducing friction and making red teaming easier to adopt. In natural language processing contexts, dedicated frameworks support text-based attacks, including paraphrasing, prompt manipulation, and semantic perturbations.

Large language models introduce additional challenges that traditional adversarial testing tools were not designed to handle. Prompt injection, role manipulation, and indirect instruction attacks require testing strategies that focus on interaction patterns rather than numerical perturbations. In response, a growing number of tools have emerged specifically for language model red teaming, offering structured ways to test safety controls and content policies.

Commercial platforms have also entered this space, particularly for organizations deploying high-impact or customer-facing AI systems. These platforms often combine automated attack generation with reporting and workflow features that support audit readiness. While they can reduce implementation effort, organizations should evaluate them carefully to ensure transparency and alignment with internal governance processes.

When comparing tools, it is useful to evaluate them across several dimensions: the types of attacks supported, compatibility with existing frameworks, ease of use, and the quality of outputs produced for documentation. Tools that produce reproducible results and structured reports are especially valuable for demonstrating EU AI Act compliance.

Regardless of the tooling chosen, it is important to remember that tools support the red team process; they do not replace it. Poorly scoped or undocumented adversarial testing remains weak evidence, even if advanced tools are used. Conversely, a well-designed red team exercise using simple tools can provide strong justification that robustness risks were taken seriously.

The final step in making AI red teaming defensible is documentation. Without clear records of what was tested, what was found, and how issues were addressed, even the best adversarial testing efforts will fall short of regulatory expectations. That documentation is what transforms red teaming into a formal compliance control.

How to Document Red Team Results for EU AI Act Technical Documentation

Adversarial testing only delivers regulatory value if it is documented correctly. Under the EU AI Act, robustness is not assessed based on intent or informal testing claims, but on whether appropriate measures were taken and whether those measures can be demonstrated through clear technical records. For high-risk AI systems, documentation is the bridge between engineering practice and legal defensibility.

AI red teaming results should be treated as formal inputs to the system’s technical documentation. This includes both the initial conformity assessment and ongoing updates throughout the system lifecycle. Regulators are not expecting perfection, but they do expect evidence that robustness risks were identified, tested, and addressed in a structured way.

A defensible red team report begins with scope definition. The documentation should clearly describe which model or system was tested, its intended use, its risk classification, and the rationale for selecting specific adversarial scenarios. This context allows reviewers to understand why certain attacks were considered relevant and others were not.

The next section should describe the testing methodology. This includes the types of adversarial attacks performed, the tools or techniques used, and any assumptions or constraints applied during testing. For example, documenting whether attacks were bounded within realistic perturbation limits helps demonstrate proportionality and realism.

Findings should be presented clearly and without ambiguity. Each identified vulnerability should be described along with its observed impact. This may include changes in model accuracy, violations of expected behavior, safety guardrail failures, or exposure of sensitive information. Where possible, findings should be quantified rather than described qualitatively.

Crucially, documentation must explain how findings were addressed. Mitigation measures may include model retraining, input validation, architectural changes, monitoring enhancements, or usage restrictions. For each mitigation, the documentation should note whether the issue was fully resolved, partially mitigated, or accepted as residual risk with justification.

This linkage between findings and mitigation aligns directly with the EU AI Act’s risk management requirements. It demonstrates that robustness testing is integrated into a broader system of controls rather than conducted in isolation. Where risks cannot be fully eliminated, documenting decision-making and acceptance criteria is far stronger than ignoring limitations.

Versioning is another critical aspect of documentation. Red team reports should be tied to specific model versions and deployment configurations. When models are updated or retrained, previous adversarial testing results may no longer apply. Maintaining versioned records ensures that robustness claims remain accurate over time.

For organizations deploying high-risk AI systems, red team documentation should be referenced explicitly in the technical file. This may include summaries in the main documentation and detailed reports as annexes. For general-purpose AI models with systemic risk, similar documentation supports transparency obligations and demonstrates responsible model development practices.

Well-structured documentation also supports post-market monitoring. Adversarial testing findings often reveal which failure modes deserve ongoing attention. By feeding these insights into monitoring plans, organizations create a feedback loop that strengthens both robustness and compliance over time.

Ultimately, the goal of documentation is not to satisfy paperwork requirements, but to provide a clear, honest account of how robustness risks were handled. When AI red teaming is documented with this mindset, it becomes one of the strongest forms of evidence that an organization took its responsibilities under the EU AI Act seriously.

Conclusion: Red Teaming as a Core Control, Not an Afterthought

As AI systems take on more consequential roles, the cost of untested assumptions increases. Models that perform well under normal conditions can still fail in ways that are legally, operationally, or ethically unacceptable. AI red teaming exists to make those failures visible before they occur in the real world.

For organizations subject to the EU AI Act, adversarial testing is no longer a niche security practice. It is a practical mechanism for demonstrating robustness, resilience, and accountability. Article 15 does not require theoretical guarantees. It requires that appropriate measures were taken in light of foreseeable risks. Red teaming provides a structured and defensible way to meet that expectation.

Beyond compliance, the value of red teaming is fundamentally about better systems. Teams that stress-test their models under hostile conditions develop a deeper understanding of failure modes, build stronger defenses, and respond more effectively when issues arise. Over time, this leads to AI systems that are not only more robust, but also more trustworthy to users and stakeholders.

Importantly, red teaming does not need to be complex or resource-intensive to be effective. Starting with a clear scope, realistic threat models, and disciplined documentation is often enough to surface meaningful insights. As organizations mature, these practices can evolve into more sophisticated and continuous testing programs.

AI red teaming fits naturally alongside other governance controls such as data lineage documentation, risk management systems, and post-market monitoring. Together, these practices form a coherent approach to responsible AI development that aligns engineering discipline with regulatory expectations.

If you are building or deploying high-risk AI systems, now is the right time to move adversarial testing from an optional experiment to a standard part of your workflow. Starting small today is far easier than retrofitting robustness controls under regulatory pressure later.

For a broader view of how engineers can operationalize EU AI Act requirements across the AI lifecycle, see our guide on

EU AI Act compliance for data scientists

, which outlines the core technical steps required for high-risk systems.

If you are new to the broader governance landscape or want to understand how these controls fit together, our overview on

what AI governance means in practice

provides a clear foundation.

To assess whether your current testing and governance practices meet regulatory expectations, you can also use our practical resources to identify gaps and prioritize remediation. Robustness is not a one-time achievement, but an ongoing commitment to building AI systems that behave responsibly under real-world conditions.

📄 EU AI Act Red Team Adversarial Testing Template (PDF)

This downloadable template provides a structured framework for conducting and documenting AI red team (adversarial testing) exercises in line with the EU AI Act.

- ✔ Threat modeling and attack technique documentation

- ✔ Test case identification and repeatable evidence tracking

- ✔ Findings, impact, and severity assessment

- ✔ Mitigation actions and residual risk acceptance

- ✔ Governance approval and accountability records

Designed for high-risk and systemic-risk AI systems, this template supports engineers, security teams, compliance professionals, and governance leads preparing for audits and regulatory reviews.

Covering responsible AI, governance frameworks, policy, ethics, and global regulations shaping the future of artificial intelligence.